Something remarkable is happening in the world of artificial intelligence. The race to build the best AI model in 2026 has shifted into an entirely new gear, and this week, a forgotten checkbox in Anthropic's CMS accidentally exposed what may be the most powerful AI model ever built. Whether you're comparing Claude vs ChatGPT, tracking AI agents, or worried about AI job displacement, here's everything you need to know right now.

GPT-5.4: OpenAI Raises the Bar (Again)

OpenAI launched GPT-5.4 earlier this month, billing it as its "most capable and efficient frontier model for professional work." For anyone in the Claude vs GPT-5 debate, this release matters. The new model ships in three versions: standard, a reasoning-focused "Thinking" variant, and a performance-optimized "Pro" tier. With a context window of up to 1 million tokens, GPT-5.4 can process enormous volumes of information in a single session.

OpenAI claims GPT-5.4 is 33% less likely to make factual errors than its predecessor, and it set new benchmark records for law and finance professionals. Its GDPval score of 83%, meaning it matches or exceeds industry professionals in 83% of tasks across 44 occupations, is the most impressive real-world capability result published by any AI lab in 2026. The pace of iteration is remarkable: GPT-5, 5.2, 5.3, and 5.4 have all shipped within roughly eight months.

GPT-5.4 also introduces Computer Use, letting the AI move your mouse, click buttons, and finish tasks in Excel or your browser autonomously. It scored 75% on the OSWorld benchmark for real-world computer use. On the API side, the new Tool Search system is a direct bet on AI agents, letting models look up tools dynamically rather than loading every definition upfront.
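To make the Tool Search idea concrete, here is a minimal sketch of on-demand tool lookup. It is not OpenAI's API: the `Tool` and `ToolRegistry` classes, the keyword-overlap scoring, and the example tool names are all hypothetical. The sketch assumes only that a model sees a cheap index of tool names and loads full definitions for the few tools that match a request.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    description: str
    schema: dict  # full parameter definition: the costly part to put in a prompt


@dataclass
class ToolRegistry:
    """Hypothetical registry that holds every tool but returns only matches."""
    tools: dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def index(self) -> list[str]:
        # Cheap summary a model could see up front: names only, no schemas.
        return sorted(self.tools)

    def search(self, query: str, limit: int = 3) -> list[Tool]:
        # Score tools by shared words (3+ letters) between query and name/description.
        query_words = {w for w in re.findall(r"[a-z]+", query.lower()) if len(w) >= 3}
        scored = []
        for tool in self.tools.values():
            tool_words = set(re.findall(r"[a-z]+", f"{tool.name} {tool.description}".lower()))
            score = len(query_words & tool_words)
            if score:
                scored.append((score, tool.name, tool))
        # Highest score first; ties broken alphabetically by tool name.
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [tool for _, _, tool in scored[:limit]]


registry = ToolRegistry()
registry.register(Tool("excel_write_cell", "Write a value into an Excel spreadsheet cell", {"cell": "string", "value": "string"}))
registry.register(Tool("browser_open_url", "Open a URL in the browser", {"url": "string"}))
registry.register(Tool("calendar_create_event", "Create a calendar event", {"title": "string", "start": "string"}))

# Only the matching tool's schema would be loaded into the model's context.
matches = registry.search("update a spreadsheet cell in excel")
print([t.name for t in matches])  # ['excel_write_cell']
```

Keyword overlap keeps the sketch dependency-free. A production system would more plausibly rank tools with embeddings, but the payoff is the same: the prompt carries one schema instead of all three.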

But when choosing the best AI model in 2026, GPT-5.4 is no longer the only headline.

Claude vs ChatGPT 2026: Why Claude Is Catching Up Fast

For anyone comparing ChatGPT vs Claude in 2026, the numbers tell a striking story. According to an analysis of credit card data from approximately 28 million U.S. consumers, Anthropic's Claude has more than doubled its paid subscriber base this year, with record growth between January and February.

What sparked the surge? Two things: clever Super Bowl ads that mocked ChatGPT's decision to show ads to users (and promised Claude never would), and a very public, headline-grabbing feud with the U.S. Department of Defense. Anthropic's CEO Dario Amodei publicly refused to allow Claude to be used for lethal autonomous military operations or mass surveillance. OpenAI, by contrast, signed a DoD deal and saw an immediate spike in uninstalls. In the ChatGPT vs Claude vs Gemini competition, brand trust has now become as important as benchmark scores.

Claude Code, Claude Cowork, and the new Computer Use feature, which allows Claude to independently navigate a computer, have also driven a wave of new subscriptions among developers and power users. Claude Sonnet 4.6 recently reached 72.5% on the OSWorld benchmark, approaching functional parity with human performance for computer use tasks.

For businesses evaluating which AI to adopt, the choice increasingly comes down to values alignment as much as raw capability. If your organization needs an AI partner that draws firm lines on military use (no lethal autonomous operations, no mass surveillance) and won't monetize your conversations with ads, Claude is the clear pick. If you need the broadest ecosystem and deepest third-party integrations, ChatGPT still leads.

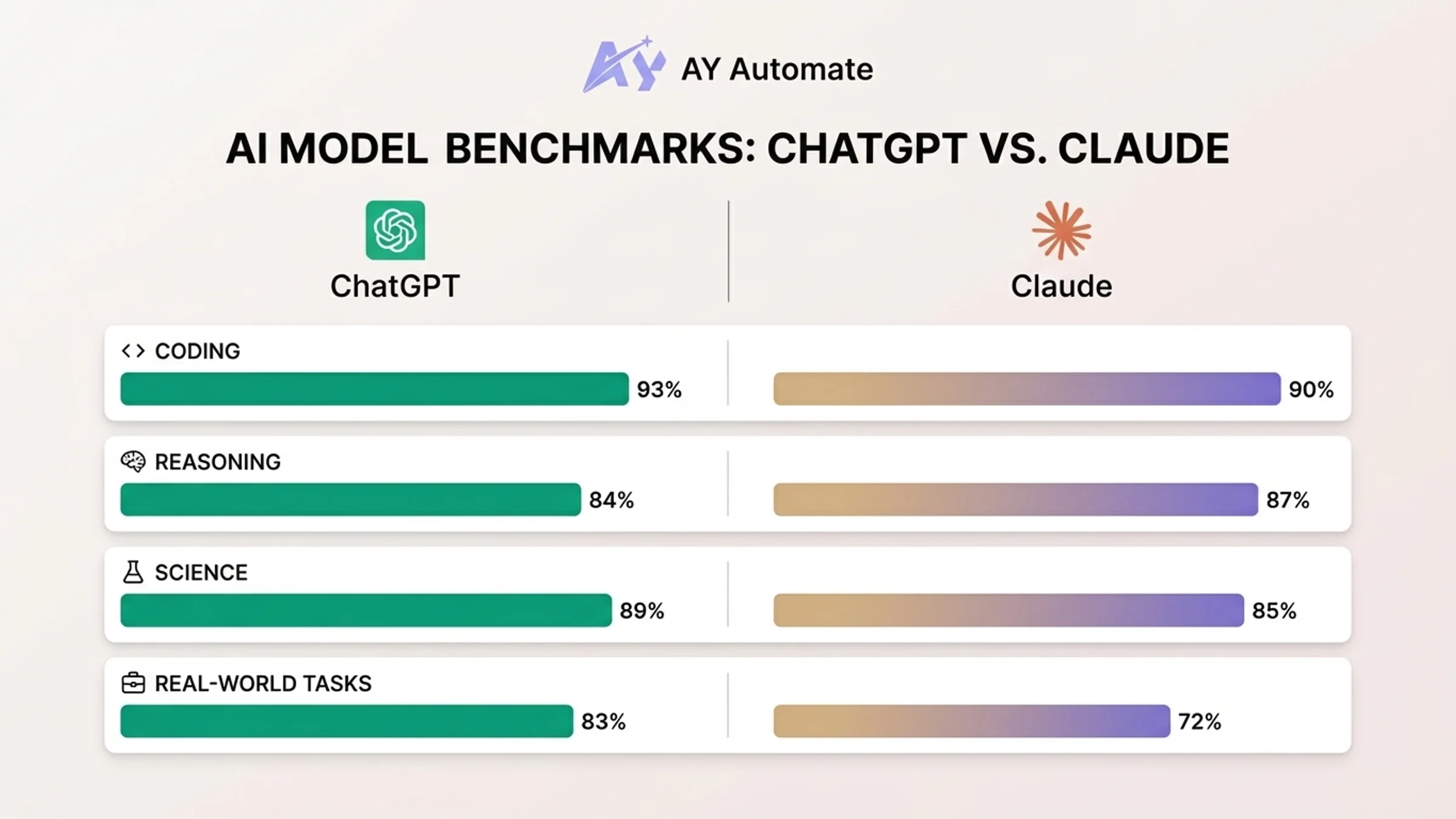

Claude vs GPT-5.4: Head-to-Head Benchmarks

Before diving into the Mythos leak, here's where the two flagship models stand today. This comparison pits Claude Opus 4.6 against GPT-5.4 on the benchmarks, context limits, and media features most relevant to professional use; the computer-use row uses Claude Sonnet 4.6's OSWorld score.

| Category | Claude Opus 4.6 | GPT-5.4 | Winner |
|---|---|---|---|
| Coding (HumanEval) | 90.4% | 93.1% | GPT-5.4 |
| Coding (SWE-bench Verified) | 80.8% | 77.2% | Claude |
| Coding (SWE-bench Pro, hard tasks) | ~45.9% | 57.7% | GPT-5.4 |
| Reasoning (GPQA Diamond) | 87.4% | 83.9% | Claude |
| General knowledge (MMLU-Pro) | 85.1% | 88.5% | GPT-5.4 |
| Real-world job tasks (GDPval) | Not published | 83% | GPT-5.4 (no Claude score) |
| Computer use (OSWorld) | 72.5% (Sonnet 4.6) | 75% | GPT-5.4 |
| Context window (standard) | 200K tokens | 128K tokens | Claude |
| Context window (max) | 1M tokens (API) | 1M tokens | Tie |
| Native image generation | No | Yes (DALL-E) | GPT-5.4 |
| Video generation | No | Yes (Sora) | GPT-5.4 |

Pricing comparison (API):

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Consumer subscription |
|---|---|---|---|
| Claude Opus 4.6 | $5.00 | $25.00 | $20/month (Pro) / up to $200/month (Max) |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Included in Pro |
| GPT-5.4 | $2.50 | $15.00 | $20/month (Plus) / $200/month (Pro) |

The takeaway: Claude Opus 4.6 leads on SWE-bench Verified and PhD-level reasoning (GPQA Diamond). GPT-5.4 wins on HumanEval, hard coding problems (SWE-bench Pro), real-world professional tasks, and cost efficiency. For most developers, GPT-5.4 is the better default at half the input price and 60% of the output price of Opus 4.6. For complex, multi-file software engineering, Claude Opus 4.6 remains the model to beat.
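To see how the per-token prices in the pricing table translate into a bill, here is a small estimator. It assumes list prices only (no prompt caching, batch discounts, or long-context surcharges), and the workload of 2M input and 500K output tokens is purely hypothetical.

```python
# Per-million-token API list prices in USD (input, output), from the pricing table.
PRICES = {
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "GPT-5.4": (2.50, 15.00),
}


def api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate spend: (tokens / 1M) x price per 1M, summed over input and output."""
    input_price, output_price = PRICES[model]
    return (input_tokens / 1_000_000) * input_price + (output_tokens / 1_000_000) * output_price


# Hypothetical monthly workload: 2M input tokens, 500K output tokens.
for model in PRICES:
    print(f"{model}: ${api_cost(model, 2_000_000, 500_000):.2f}")
```

Under this assumed workload the estimates are $22.50 for Opus 4.6, $13.50 for Sonnet 4.6, and $12.50 for GPT-5.4. Output-heavy workloads narrow the gap between GPT-5.4 and Sonnet 4.6, since both charge $15 per million output tokens.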

Claude's unique Agent Teams feature lets you split a task across multiple Claude agents (one handling backend, another frontend, another running tests), a capability GPT-5.4 doesn't match yet.
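Anthropic's internals for Agent Teams aren't described here, so the following is only a generic fan-out/fan-in sketch of the pattern it describes. `run_agent` is a hypothetical stub, not an Anthropic API, and the three roles mirror the backend/frontend/tests example.

```python
import asyncio


async def run_agent(role: str, subtask: str) -> str:
    """Hypothetical stand-in for one agent; a real agent would call a model API here."""
    await asyncio.sleep(0.01)  # simulate model latency
    return f"[{role}] done: {subtask}"


async def run_team(subtasks: dict[str, str]) -> dict[str, str]:
    roles = list(subtasks)
    # Fan out: every role works on its own slice of the task concurrently.
    results = await asyncio.gather(*(run_agent(role, subtasks[role]) for role in roles))
    # Fan in: gather() preserves input order, so results line up with roles.
    return dict(zip(roles, results))


report = asyncio.run(run_team({
    "backend": "add a /login endpoint",
    "frontend": "build the login form",
    "tests": "write login integration tests",
}))
for line in report.values():
    print(line)
```

Running the agents concurrently is the point of the pattern. The hard parts a real system must add, such as sharing context between agents and reconciling conflicting edits, are deliberately left out.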

The Claude Mythos Leak: Anthropic's Most Powerful AI Model Ever

Here's the story dominating AI news this week: a single misconfigured CMS setting just exposed Anthropic's next flagship model, and what it revealed is extraordinary.

On March 26, 2026, security researchers discovered that a default "public" setting in Anthropic's content management system had accidentally made nearly 3,000 internal unpublished assets searchable online. Among them: a draft blog post describing Claude Mythos, also referred to internally as "Claude Capybara."

What is Claude Mythos / Claude Capybara?

The leaked draft describes Mythos as a brand-new model tier that sits entirely above Anthropic's current flagship Opus line, making it the most powerful AI model Anthropic has ever built. The current model lineup goes Haiku, Sonnet, Opus. Capybara would sit above all three as a new, fourth tier.

Anthropic confirmed the model exists, stating: "We're developing a general purpose model with meaningful advances in reasoning, coding, and cybersecurity... We consider this model a step change and the most capable we've built to date."

What the benchmarks show

Compared to Claude Opus 4.6, Capybara gets "dramatically higher" scores on software coding, academic reasoning, and cybersecurity benchmarks. The leaked documents describe Claude Mythos as "currently far ahead of any other AI model in cyber capabilities."

| Capability | Claude Opus 4.6 | Claude Mythos (leaked) |
|---|---|---|
| Coding benchmarks | Current flagship | "Dramatically higher" |
| Academic reasoning | 91.3% GPQA Diamond | Exceeds Opus |
| Cybersecurity | Strong | "Far ahead of any other AI model" |
| Long-horizon agentic tasks | Available | Record scores reported |
| Model tier | Top tier (Opus) | New tier above Opus |
| General availability | Available now | Restricted early access only |

The cybersecurity concern

The most alarming detail? The leaked documents warn that Mythos "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." Because of these cybersecurity risks, Anthropic is restricting early access to cyber defense organizations only, giving them time to harden their systems before broader release. The draft also notes that the model is expensive to run and not yet ready for general release.

Cybersecurity stocks dropped following the leak, reflecting market anxiety about a model that could fundamentally shift the offense-defense balance in cyber warfare.

For anyone asking which is the best AI model in 2026, Claude Mythos may soon reset the entire leaderboard.

The AI Skills Gap: Why Power Users Are Pulling Ahead

Beyond the Claude vs ChatGPT debate, perhaps the most quietly important AI story right now is the AI skills gap, and it has significant implications for workers everywhere.

Anthropic's fifth economic impact report, released this week, found that while AI has not yet caused widespread job displacement, early adopters are pulling far ahead. Power users deploy Claude as a "thought partner" for complex, iterative work, while newer users rely on it only for simple, one-off tasks. This growing divide is creating measurable workplace advantages for experienced AI users.

The theory vs. practice gap

The report reveals a striking disconnect. For computer and math workers, large language models are theoretically capable of handling 94% of their tasks, yet Claude currently covers only 33% in observed professional use, a 61-point gap. Across occupational categories, the gap between theoretical and observed exposure is consistently 50 to 65 percentage points. This suggests the real-world impact of AI depends heavily on how deeply people learn to use it.

Peter McCrory, Anthropic's head of economics, noted: "This points in the direction of AI being a skills-biased technology. It might potentially reinforce differences in outcomes among those who have higher skills at getting value out of these tools."

Who's at risk?

Anthropic's CEO Dario Amodei has predicted AI could eliminate up to half of all entry-level white-collar jobs and push unemployment as high as 10% to 20% within one to five years. Nor is adoption evenly distributed: Claude is used most intensely in high-income countries and high-density knowledge-worker cities, meaning AI may amplify existing economic inequalities rather than flatten them.

| AI skills gap dimension | Current state |
|---|---|
| Theoretical task coverage (computer/math workers) | 94% of tasks |
| Observed actual coverage | 33% of tasks |
| Theory-practice gap | 50-65 percentage points |
| Power user advantage | Measurable productivity gains |
| Geographic concentration | High-income countries, knowledge-worker cities |
| Projected job impact (worst case) | Up to 50% of entry-level white-collar jobs |

For organizations evaluating AI strategy consulting, the message is clear: the companies that invest in upskilling their teams now will compound their advantage. Those that wait risk falling behind permanently. One way to close the gap quickly is through AI engineer placement, bringing in pre-vetted engineers who already have deep experience with these tools and can accelerate your team's adoption.

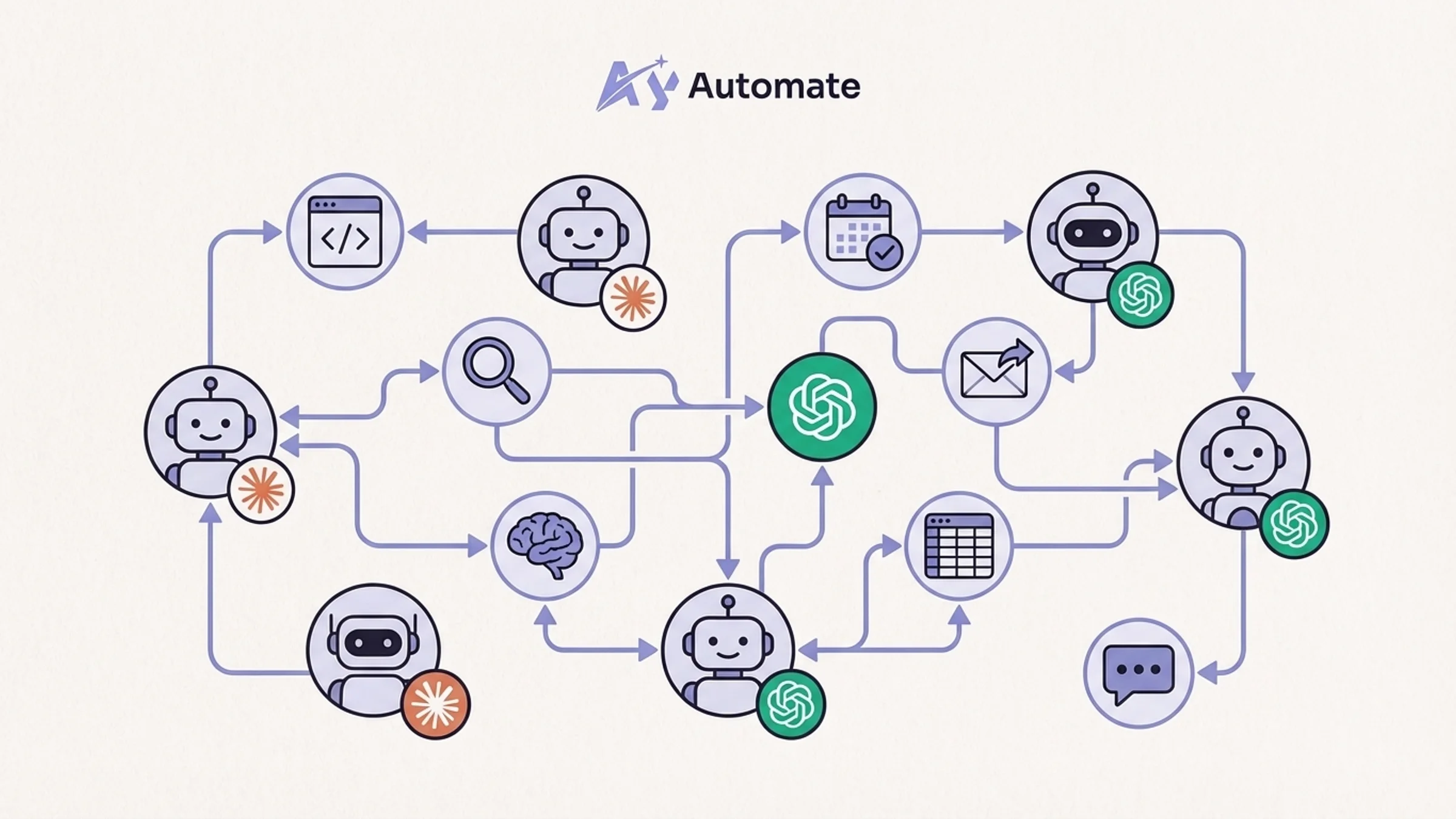

AI Agents in 2026: The Next Frontier

The single hottest-trending AI search term right now, according to Google Trends, is AI agents. And the market data backs up the hype. The global AI agents market reached approximately $7.8 billion in 2025 and is projected to exceed $10.9 billion in 2026, growing at a compound annual growth rate of 40.5% toward $139 billion by 2034.

Every major lab is racing to build autonomous systems that can plan, decide, and execute multi-step tasks on their own: booking travel, writing code, managing customer service, filing reports, all without needing a human at every step. Approximately 72% of Global 2000 companies now operate AI agent systems beyond experimental testing phases, and 86% of enterprise respondents said their AI budget will increase in 2026.

Where each platform stands on agents

OpenAI's new Tool Search system in GPT-5.4 lets models look up tools dynamically rather than loading every definition upfront. Anthropic's Computer Use feature, now available in Claude, lets the model autonomously navigate a computer. Claude's Agent Teams splits complex work across multiple specialized agents. And with Claude Mythos reportedly scoring records on "long-horizon" agentic tasks involving complex deliverables like financial models and legal analysis, the agent race is accelerating fast.

| AI agent capability | Claude | ChatGPT (GPT-5.4) | Gemini |
|---|---|---|---|
| Computer use | Yes (72.5% OSWorld) | Yes (75% OSWorld) | Limited |
| Multi-agent orchestration | Agent Teams | Not yet | Not yet |
| Dynamic tool discovery | Yes | Tool Search | Yes |
| Long-horizon agentic tasks | Strong (Mythos: record scores) | Strong | Moderate |
| Enterprise adoption rate | Growing fast | Largest user base | Google ecosystem |

For businesses, AI agents represent the next major productivity wave. Companies that implement AI agents report revenue increases of 3% to 15% and a 10% to 20% boost in sales ROI. For workers, they represent the next wave of automation risk. Either way, they're arriving faster than most people expect.

For enterprises that need autonomous agents with built-in security and compliance guardrails, OpenClaw and NemoClaw enterprise setup provides a framework for deploying agents that operate safely within corporate infrastructure.

If you're exploring how to build custom AI agents for your business workflows, the technology is mature enough to deploy today, not in some distant future.

Claude vs ChatGPT vs Gemini: Who Wins in 2026?

So who wins the head-to-head comparison in 2026? The answer is "it depends on your use case," but the competitive landscape has shifted meaningfully.

| Platform | Best for | Biggest weakness | Trust factor |
|---|---|---|---|
| ChatGPT (GPT-5.4) | Broadest integrations, professional research, law, finance | DoD partnership backlash, ad monetization concerns | Mixed |
| Claude (Opus 4.6) | Coding, reasoning, safety-conscious enterprise use | No native image/video generation, higher API cost | High (no ads, limits on military use) |
| Gemini (Google) | Google ecosystem users, Android, Search integration | Less competitive on coding benchmarks | Moderate |

ChatGPT (OpenAI) remains the biggest consumer platform by subscriber count, with the most brand recognition and the broadest integrations. GPT-5.4 is a formidable tool for professional research, legal work, and finance. But OpenAI's DoD partnership and its ad strategy have caused real trust damage with a segment of users.

Claude (Anthropic) is growing faster right now, and Claude Mythos will likely represent a genuine capability leap over anything currently available. Anthropic's principled stance on safety and military use is resonating with both individual consumers and enterprise buyers. Claude Code and Agent Teams give developers capabilities no other platform matches.

Gemini (Google) remains a strong contender for users inside the Google ecosystem, with deep integration into Search, Workspace, and Android. Its Nano Banana Pro image generation model is the strongest native multimodal offering.

The best AI model in 2026 is whichever one you learn to use most deeply, because the data is clear: it's not which AI you pick, it's how far you push it.

What This All Means for You

The AI landscape of 2026 is competitive, fast-moving, and increasingly consequential. A forgotten checkbox just revealed what may be the most powerful AI model ever built. A skills gap is already separating those who use AI deeply from those who use it casually. And AI agents are beginning to automate entire categories of knowledge work.

Here's what you should do right now:

- Pick one AI tool and go deep. The data shows that power users who treat AI as a thought partner gain measurable advantages over casual users. Whether you choose Claude or ChatGPT, invest time in learning its advanced features.

- Watch for Claude Mythos. When it launches publicly, it could reset the competitive landscape overnight. If you're in cybersecurity, start preparing your defenses now.

- Start experimenting with AI agents. With 72% of Global 2000 companies already running agent systems in production, this isn't early-adopter territory anymore. It's table stakes.

- Close the skills gap before it closes you out. If you're a manager, invest in custom AI training for your team. If you're an individual contributor, dedicate time each week to pushing your AI tool beyond simple prompts.

If you're not paying close attention to the Claude vs ChatGPT vs Gemini race, and especially to what Claude Mythos represents, now is the time to start.

Sources: Anthropic Economic Impact Report (March 2026), Fortune, TechCrunch, The Decoder, Google Trends (March 2026)

FAQ

What is the difference between Claude and ChatGPT in 2026? Claude (built by Anthropic) leads on SWE-bench Verified and PhD-level reasoning, and has safety-first policies, including limits on lethal autonomous and mass-surveillance military uses. ChatGPT (built by OpenAI) leads in ecosystem breadth, native image/video generation, and real-world professional task benchmarks. Both cost $20/month for consumer subscriptions, but Claude's API pricing is higher at the Opus tier.

Is Claude better than ChatGPT for coding? It depends on the benchmark. On SWE-bench Verified, Claude Opus 4.6 scores 80.8% compared to GPT-5.4's 77.2%. However, GPT-5.4 leads on HumanEval (93.1% vs 90.4%) and on harder coding problems (SWE-bench Pro: 57.7% vs ~45.9%). For complex, multi-file software engineering projects, many developers report that Claude produces cleaner code and handles architectural decisions better.

What is Claude Mythos? Claude Mythos (also referred to internally as Claude Capybara) is Anthropic's unreleased next-generation model, accidentally revealed through a CMS data leak on March 26, 2026. It sits above the current Opus tier as an entirely new model class, scoring "dramatically higher" than Claude Opus 4.6 on coding, reasoning, and cybersecurity benchmarks. Anthropic has confirmed it exists but hasn't announced a public release date.

Which AI model is best for business use in 2026? It depends on your use case. ChatGPT (GPT-5.4) is best for teams that need broad integrations, image generation, and professional research tools. Claude is best for engineering teams, safety-conscious enterprises, and organizations that need advanced coding and reasoning. Gemini is best for businesses already deep in the Google ecosystem. For custom AI automation that combines multiple models, working with a specialist agency often delivers better results than picking a single platform.

What is the AI skills gap? The AI skills gap refers to the growing divide between workers who use AI tools deeply (power users) and those who use them only for simple tasks. Anthropic's March 2026 economic report found that AI can theoretically handle 94% of computer and math tasks, but actual professional use covers only 33%. The gap creates measurable workplace advantages for power users and may amplify existing economic inequalities.

How much does Claude cost compared to ChatGPT? Both offer consumer subscriptions at $20/month. Claude Max (up to $200/month) offers much higher Opus 4.6 usage limits plus Agent Teams. ChatGPT Pro ($200/month) includes the enhanced GPT-5.4 Pro model. On the API side, GPT-5.4 is significantly cheaper: $2.50/$15 per million tokens (input/output) compared to Claude Opus 4.6 at $5/$25. Claude Sonnet 4.6 ($3/$15) is priced closer to GPT-5.4.

Will AI agents replace jobs in 2026? Not yet at scale, but the trend is accelerating. Approximately 72% of Global 2000 companies now operate AI agent systems in production. Anthropic's CEO has predicted AI could eliminate up to half of entry-level white-collar jobs within five years. The most immediate impact is in customer service, finance operations, and marketing automation, where AI agents are already handling tasks that previously required human workers.

What is the best AI model overall in 2026? As of March 2026, Claude Opus 4.6 and GPT-5.4 are the two leading models, each with distinct strengths. Claude Mythos, when released, is expected to surpass both. The honest answer is that the best AI model is whichever one you invest time in learning deeply. Power users consistently outperform casual users regardless of which platform they choose.