OpenClaw: The Open-Source AI Agent That Went Viral on GitHub (And What It Actually Does)

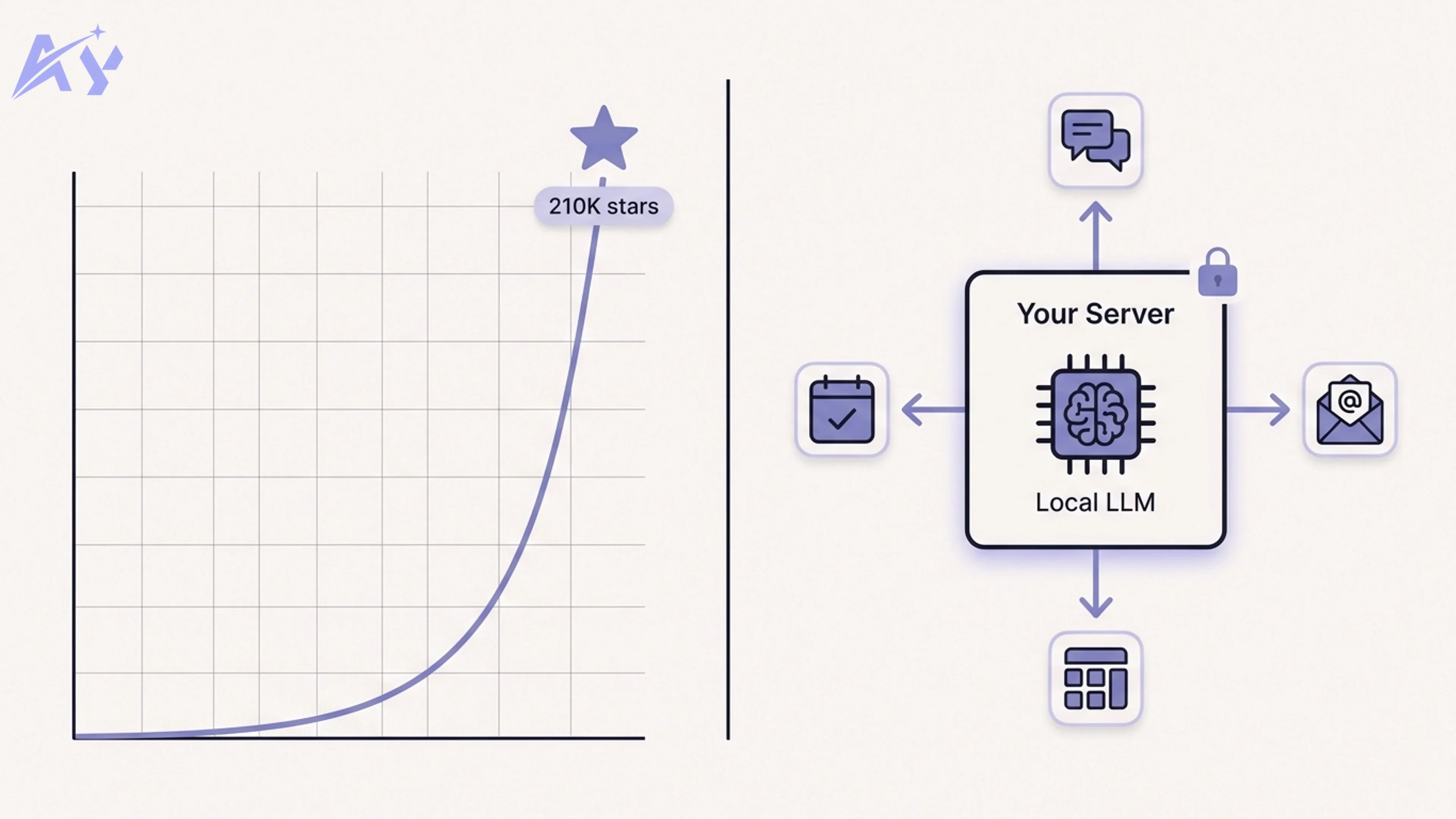

In early 2026, a GitHub repository nobody had heard of crossed 9,000 stars in a single weekend. A few weeks later it had 210,000. Sam Altman tweeted about it. Fortune ran a feature. The creator was hired by OpenAI, and the project was placed under an independent foundation for governance.

The name: OpenClaw.

Most people who saw the headlines assumed it was another chatbot wrapper, another LangChain alternative, or another "build your AI assistant in 10 minutes" tutorial project. It is none of those things. OpenClaw is a self-hosted AI agent runtime designed specifically to connect to the apps your team already uses, without sending your business data through a hosted AI provider's infrastructure.

This article breaks down what OpenClaw actually is, why it went viral, how the architecture works, how it compares to commercial alternatives, and whether your team should build on it.

What Is OpenClaw?

OpenClaw is a self-hosted AI agent platform. The core premise is deceptively simple: instead of sending your business data to a hosted AI API to power your automations, you run the agent runtime on your own server, connect it to a local or OpenAI-compatible language model, and wire up pre-built integration connectors to the tools your team uses.

The current connector library covers more than 50 applications, including WhatsApp, Telegram, Slack, Discord, HubSpot, Salesforce, Gmail, Google Calendar, Notion, Airtable, Jira, Linear, and a growing set of generic REST and webhook endpoints.

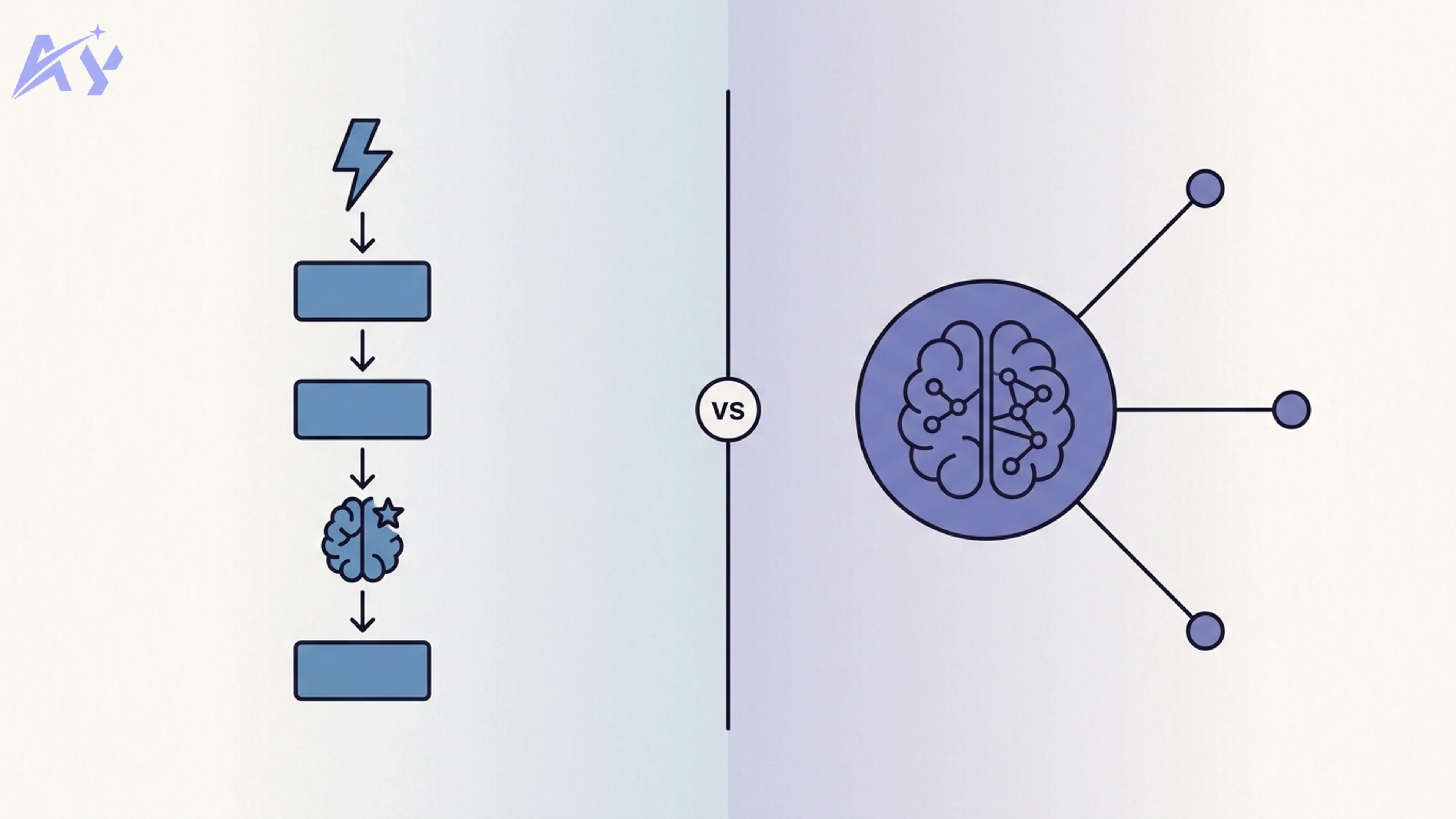

What makes OpenClaw distinct from a workflow automation tool like n8n or Make is the agent layer. Rather than executing a fixed sequence of steps, OpenClaw's runtime interprets natural-language instructions at runtime, selects which connectors to invoke, decides what data to pass between them, and handles error recovery without you defining every branch in advance. You describe the outcome. The agent figures out the path.

The "open" part matters for two reasons. First, you control the deployment: the runtime runs in Docker on your own infrastructure, and your data never leaves your network unless you explicitly configure it to. Second, the source code is publicly available under a permissive open-source license, which means the community can audit it, extend it, and build on it without vendor permission.

For teams operating in regulated industries, for founders who want automation capabilities without committing to expensive SaaS contracts, and for developers who want to understand and customize every layer of their AI stack, that combination of properties is genuinely new.

Why Did OpenClaw Go Viral?

The timing matters as much as the product.

OpenClaw launched in January 2026, less than a month after a string of high-profile AI data incidents had made enterprise teams nervous about routing sensitive business data through third-party AI APIs. The privacy narrative was primed and ready. OpenClaw's pitch, "your data stays on your server," landed in a moment when that was exactly what a certain kind of technical buyer wanted to hear.

The Sam Altman effect was real but nuanced. Altman did not endorse OpenClaw in any formal sense. He posted a single tweet noting that the project was "doing something genuinely interesting with local agent orchestration." For a project that had 9,000 stars at the time, a single tweet from the CEO of OpenAI was enough to move the needle significantly. Within 48 hours the repository had crossed 40,000 stars and was dominating both GitHub's trending page and developer discussion.

The open-source versus proprietary AI moment also played a role. The broader developer community had been watching the tension between open-source and closed AI models intensify through 2025. OpenClaw arrived as a concrete answer to the question of whether self-hosted AI could actually be useful in production. The fact that it supported Ollama for local models, while also working with OpenAI-compatible APIs, meant that developers could run it entirely without paying any token costs, or they could slot in a commercial model where quality mattered most.

Fortune's coverage amplified it beyond the developer audience. The headline, roughly paraphrased as "the startup nobody had heard of that broke GitHub's star counter," reached a broader business audience that had been looking for a concrete reason to think about self-hosted AI. The result was an unusual coalition of attention: developers, founders, enterprise IT buyers, and journalists, all arriving at the same repository within the same few weeks.

How OpenClaw Actually Works

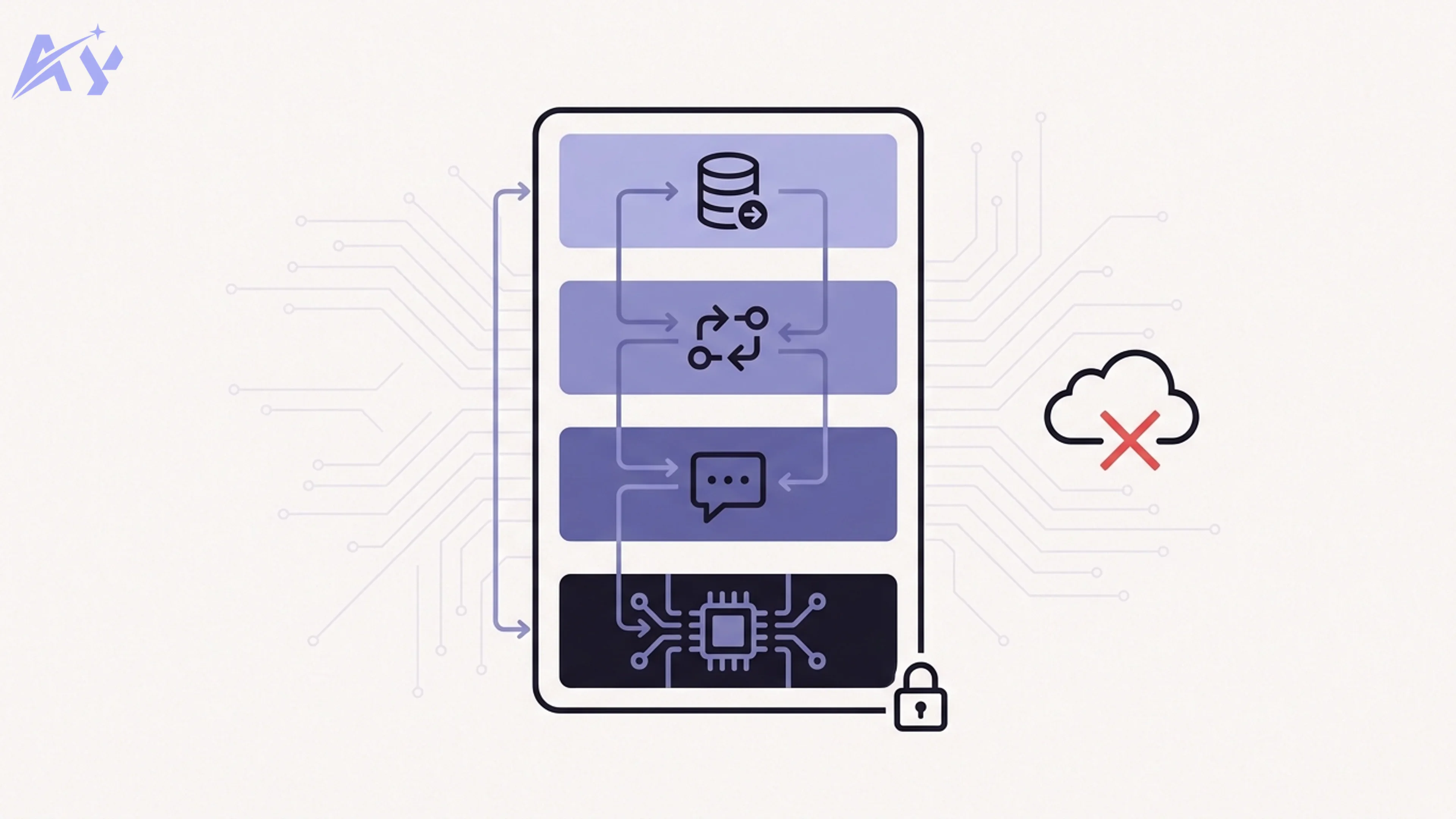

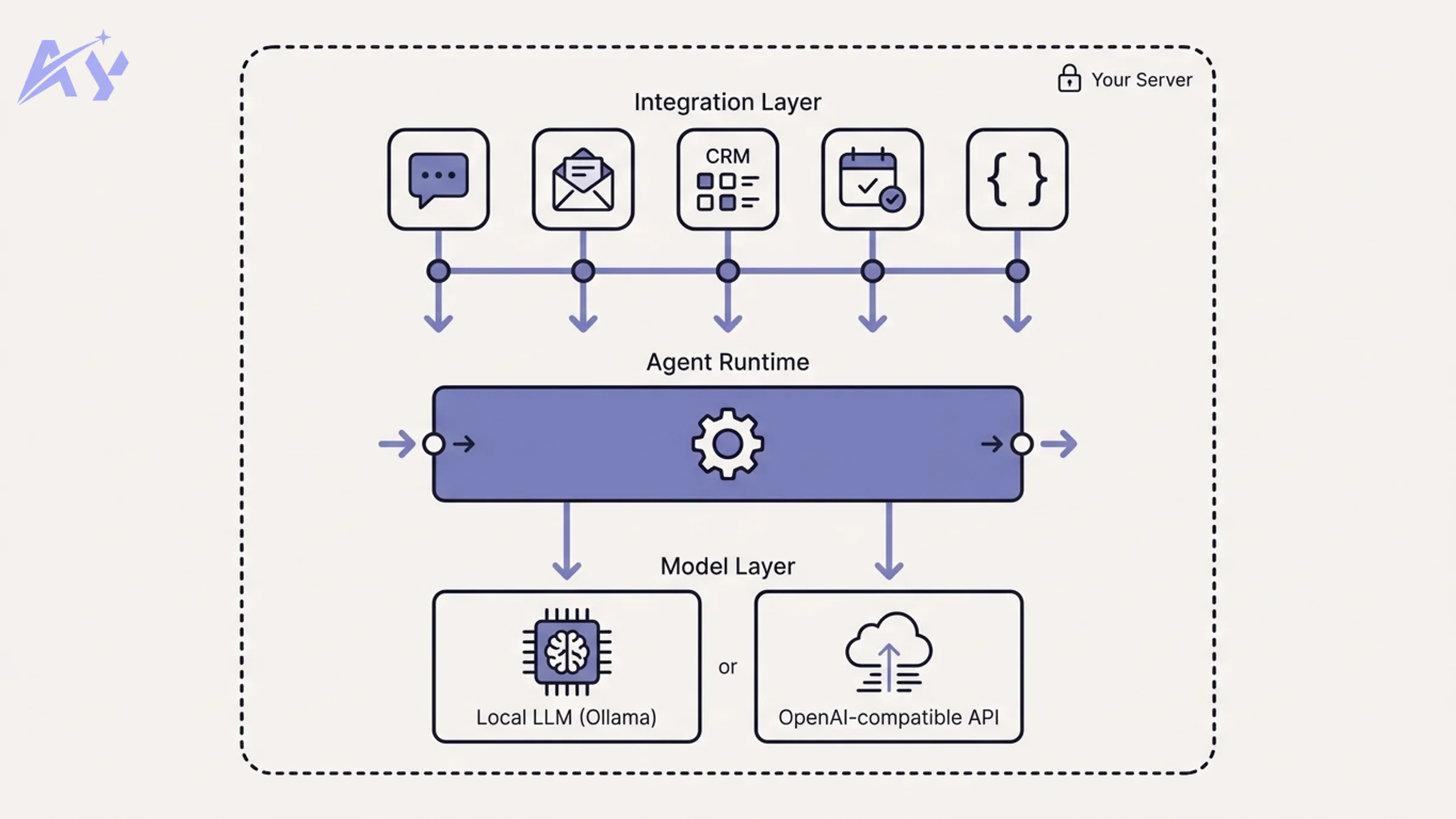

OpenClaw's architecture has three layers.

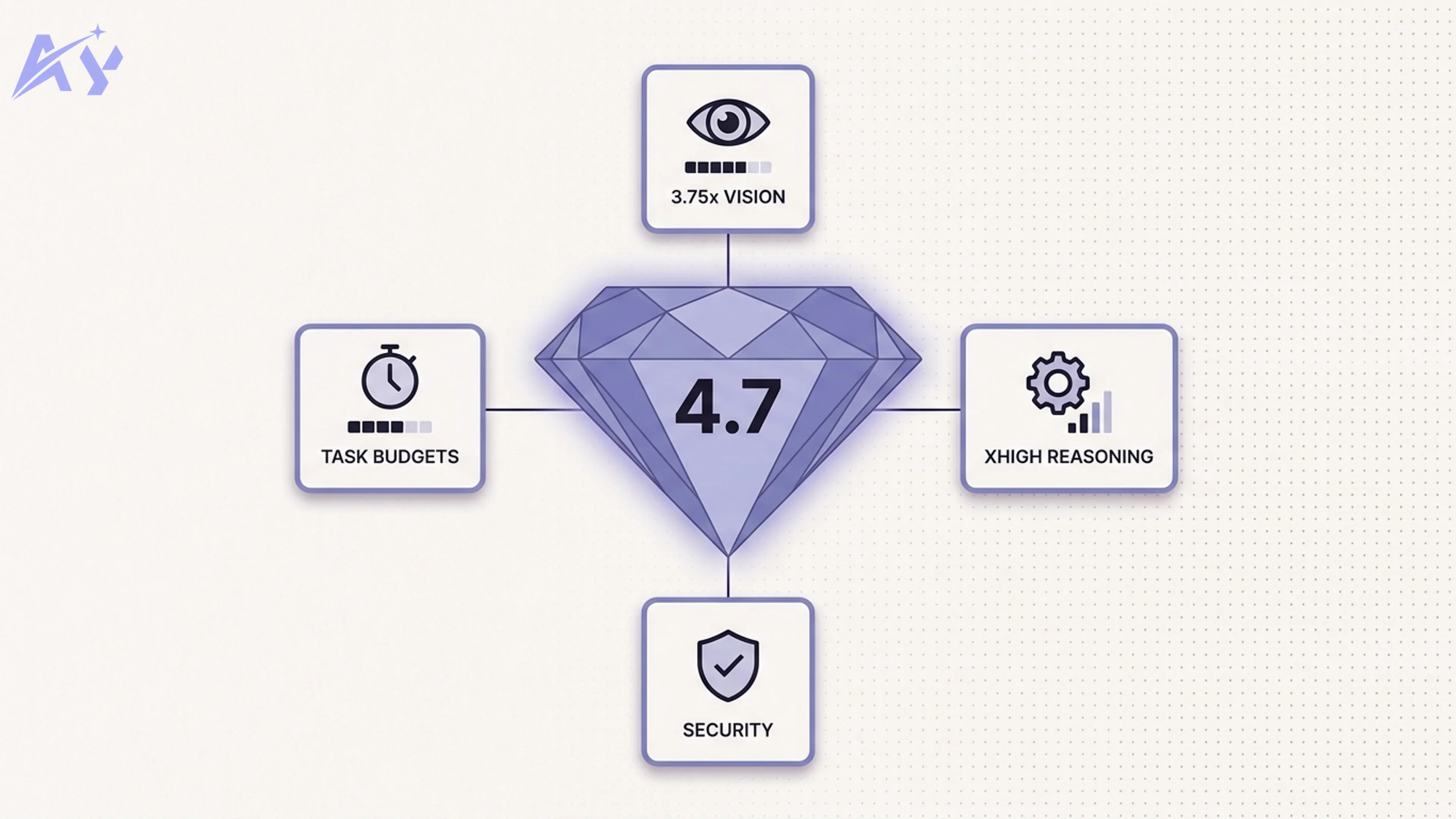

The model layer sits at the bottom. OpenClaw does not ship a language model. Instead, it uses an OpenAI-compatible API interface, which means it works with any model that exposes that API format. In practice, most teams use either Ollama running a local model (Mistral, Llama 3, Qwen, or similar), or they point OpenClaw at a commercial provider (OpenAI, Anthropic, Google) for tasks where model quality is the priority. The separation is intentional: you swap the model without changing anything else in the stack.
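To make "swap the model without changing anything else" concrete, here is a minimal sketch of a request to an OpenAI-compatible chat completions endpoint. It only builds the request, using Python's standard library. The base URLs are Ollama's default local OpenAI-compatible endpoint and OpenAI's public API; the model names are examples, and nothing here reflects how OpenClaw itself constructs its calls.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, api_key=None):
    """Build (but do not send) a POST to an OpenAI-compatible chat completions endpoint."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:  # a local Ollama server needs no key; hosted providers do
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


prompt = "Summarize today's open support tickets."
# Local model served by Ollama's OpenAI-compatible API (default port 11434).
local = build_chat_request("http://localhost:11434/v1", "llama3", prompt)
# Commercial provider: only the base URL, model name, and key change.
hosted = build_chat_request("https://api.openai.com/v1", "gpt-4o", prompt, api_key="YOUR_API_KEY")
```

Passing either object to `urllib.request.urlopen` sends it. The payload shape is identical in both cases, which is why a runtime built on this interface can change models through configuration alone.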

The agent runtime sits in the middle. This is the core of what OpenClaw actually does. When you send the agent a task (either programmatically via the API or through one of the chat interface connectors), the runtime breaks the task into sub-steps, selects which connectors to call, sequences the calls, passes outputs between them, and handles failures. The runtime maintains a short-term working memory of the current task context, which is what allows it to chain multiple tool calls coherently rather than treating each step as an isolated action.
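The runtime's job of sequencing tool calls, passing outputs forward, retrying failures, and keeping task context can be illustrated with a small loop. This is a hypothetical sketch, not OpenClaw's code. In OpenClaw the language model produces the plan. Here it is hard-coded so the mechanics are visible, and the tool names (`crm.find_contact`, `email.draft`) are invented stand-ins for real connectors.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Step:
    tool: str                       # name of the connector action to call
    args: dict[str, Any]            # arguments chosen by the planner
    use_previous: bool = False      # pass the previous step's output in as "previous"


@dataclass
class WorkingMemory:
    task: str
    results: list[Any] = field(default_factory=list)  # outputs of completed steps, in order


def run_plan(task: str, plan: list[Step], tools: dict[str, Callable[..., Any]],
             max_retries: int = 1) -> WorkingMemory:
    """Execute steps in sequence, chaining outputs and retrying failed calls."""
    memory = WorkingMemory(task)
    for step in plan:
        args = dict(step.args)
        if step.use_previous and memory.results:
            args["previous"] = memory.results[-1]
        for attempt in range(max_retries + 1):
            try:
                memory.results.append(tools[step.tool](**args))
                break
            except Exception:
                if attempt == max_retries:
                    raise  # out of retries: surface the failure
    return memory


# Hypothetical tools standing in for real connectors.
attempts = {"draft": 0}

def find_contact(email: str) -> dict[str, str]:
    return {"email": email, "name": "Ada"}

def draft_email(previous: dict[str, str], subject: str) -> str:
    attempts["draft"] += 1
    if attempts["draft"] == 1:
        raise TimeoutError("transient upstream failure")  # the first call fails once
    return f"To: {previous['email']} | Subject: {subject} | Hi {previous['name']},"


plan = [  # in OpenClaw the model would produce this plan; here it is hard-coded
    Step("crm.find_contact", {"email": "ada@example.com"}),
    Step("email.draft", {"subject": "Renewal"}, use_previous=True),
]
tools = {"crm.find_contact": find_contact, "email.draft": draft_email}
memory = run_plan("Draft a renewal email to ada@example.com", plan, tools)
```

The `WorkingMemory` object is what lets the second step use the contact found by the first. Without it, each call would be an isolated action.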

The integration layer sits at the top. Each connector is a small, standardized module that wraps a specific third-party API. Connectors handle authentication (OAuth, API keys, or custom flows), request formatting, response parsing, and error normalization. Because each connector exposes a consistent interface to the runtime, adding a new connector does not require changes to the runtime itself. The community has been active in contributing connectors, and the foundation governance model (more on that below) includes a connector registry with quality standards.
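The idea of a consistent connector interface with normalized errors can be sketched as an abstract base class. This is an illustration under assumptions: `Connector`, `ConnectorError`, `REGISTRY`, and `EchoConnector` are hypothetical names, not OpenClaw's connector API. Authentication is left to each subclass's `_call`.

```python
from abc import ABC, abstractmethod
from typing import Any


class ConnectorError(Exception):
    """The single error type the runtime sees, whatever the upstream API returned."""

    def __init__(self, connector: str, retryable: bool, message: str):
        super().__init__(f"{connector}: {message}")
        self.connector = connector
        self.retryable = retryable


class Connector(ABC):
    name: str  # registry key, set by each subclass

    @abstractmethod
    def _call(self, action: str, params: dict[str, Any]) -> dict[str, Any]:
        """Connector-specific auth, request formatting, and response parsing."""

    def invoke(self, action: str, params: dict[str, Any]) -> dict[str, Any]:
        """Uniform entry point: normalizes every failure into ConnectorError."""
        try:
            return self._call(action, params)
        except ConnectorError:
            raise
        except TimeoutError as exc:
            raise ConnectorError(self.name, True, f"timeout: {exc}") from exc
        except Exception as exc:
            raise ConnectorError(self.name, False, str(exc)) from exc


class EchoConnector(Connector):
    """Hypothetical stand-in for a real third-party connector."""
    name = "echo"

    def _call(self, action: str, params: dict[str, Any]) -> dict[str, Any]:
        if action == "slow":
            raise TimeoutError("upstream did not answer")
        if action == "bad":
            raise ValueError("unknown field 'foo'")
        return {"action": action, **params}


REGISTRY: dict[str, Connector] = {}

def register(connector: Connector) -> None:
    REGISTRY[connector.name] = connector  # the runtime only looks connectors up by name

register(EchoConnector())
```

Because the runtime only depends on `invoke` and `ConnectorError`, registering a new connector requires no runtime changes. The `retryable` flag gives the runtime a uniform signal for deciding whether to try again.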

The entire stack runs inside Docker. A default deployment requires roughly 2 GB of RAM for the OpenClaw runtime itself, plus whatever your chosen language model requires. For teams running a quantized local model via Ollama, a 16 GB server is sufficient for most use cases. For teams using a commercial API endpoint, the OpenClaw runtime itself is lightweight.
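The 16 GB figure can be sanity-checked with back-of-the-envelope arithmetic: quantized weights take roughly parameters × bits ÷ 8 bytes. The sketch below adds an assumed 2 GB allowance for context and the model server on top of the ~2 GB runtime figure from the text. Common 4-bit formats store somewhat more than 4 bits per weight, so treat the result as a lower bound.

```python
def estimate_ram_gb(params_billion: float, bits_per_weight: float,
                    model_overhead_gb: float = 2.0, runtime_gb: float = 2.0) -> float:
    """Rough RAM estimate for OpenClaw plus a local quantized model.

    weights:  params * bits / 8 bytes  (1e9 params at 1 byte each is about 1 GB)
    overhead: assumed allowance for context (KV cache) and the model server
    runtime:  the ~2 GB the OpenClaw runtime itself needs
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + model_overhead_gb + runtime_gb


for size in (8, 14, 70):
    print(f"{size}B @ 4-bit: ~{estimate_ram_gb(size, 4):.1f} GB")
```

Under these assumptions, 8B and 14B models fit comfortably in 16 GB (about 8 GB and 11 GB). A 70B model (about 39 GB) does not.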

The agent is not magic. It makes mistakes, it can misinterpret ambiguous instructions, and it works better with well-structured tasks than with open-ended creative prompts. The project documentation is honest about this: OpenClaw is optimized for structured business process automation, not general-purpose reasoning.

OpenClaw vs Commercial AI Agents

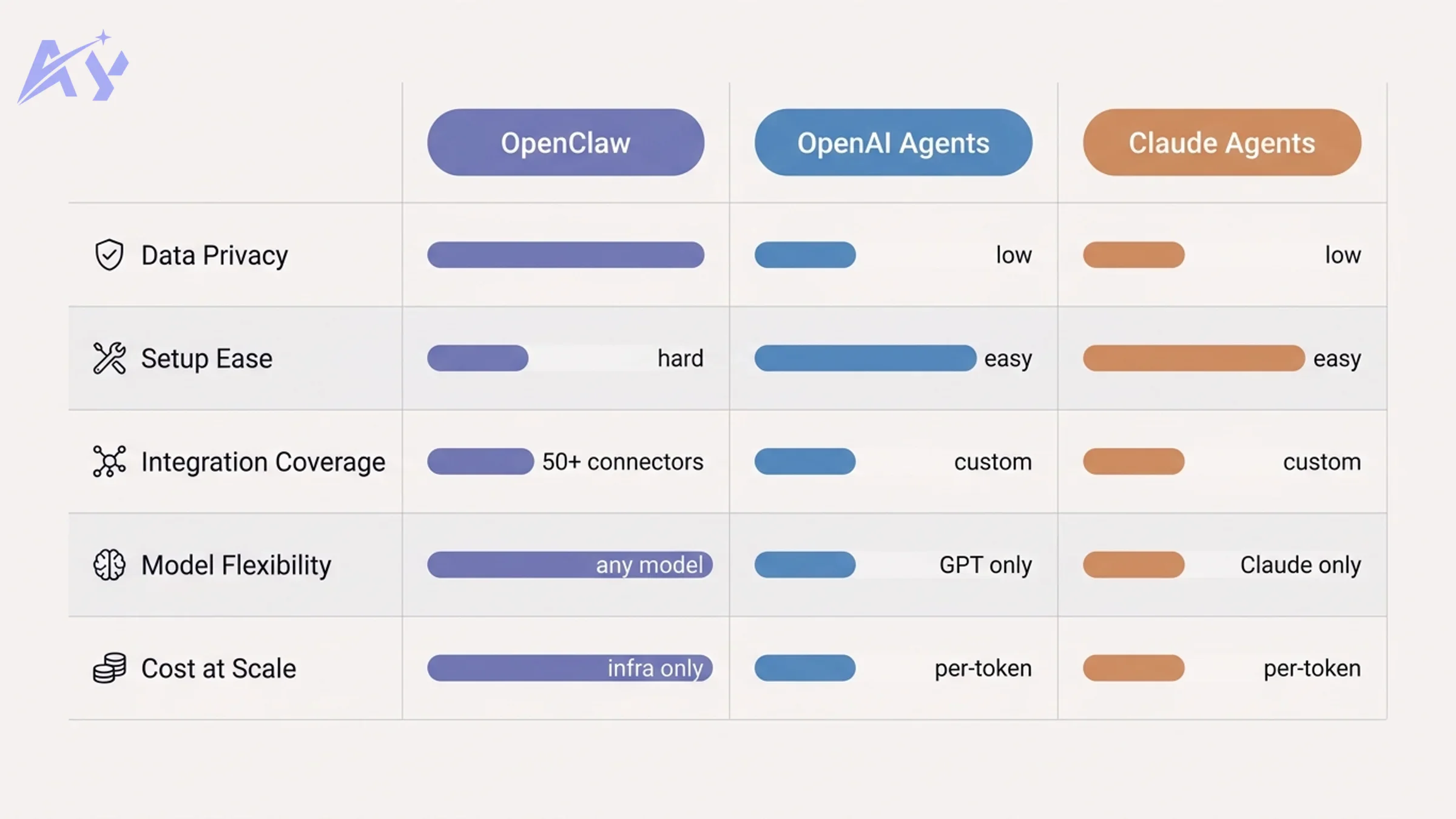

How does OpenClaw compare to the managed AI agent products from OpenAI and Anthropic? The honest answer is: it depends entirely on which dimensions matter most to your team.

| Dimension | OpenClaw | OpenAI Agents | Claude Agents |
|---|---|---|---|
| Data Privacy | Full: data stays on your server | Data routed through OpenAI infrastructure | Data routed through Anthropic infrastructure |
| Setup Complexity | High: Docker, model config, connector setup | Low: API key, minimal config | Low: API key, minimal config |
| Integration Coverage | 50+ pre-built connectors, community-extensible | Developer-defined via function calling | Developer-defined via tool use |
| Model Flexibility | Any OpenAI-compatible model (local or commercial) | GPT-4o family only | Claude family only |
| Cost at Scale | Infrastructure cost only, no per-token SaaS fee | Per-token pricing, can be significant at volume | Per-token pricing, can be significant at volume |
| SLA and Support | Community support, no managed SLA | Enterprise SLA available | Enterprise SLA available |
| Output Quality | Depends on the model you choose | GPT-4o-class reasoning | Claude-class reasoning and instruction following |
| Compliance Controls | You control the audit trail entirely | OpenAI compliance certifications | Anthropic compliance certifications |

The clearest advantage OpenClaw has over commercial agents is in the data privacy and cost columns. For a team running 500,000 agent-driven operations per month, the difference between paying per-token and paying for your own server time is not trivial.

The clearest disadvantage is setup complexity and the lack of a managed SLA. If something breaks in your OpenClaw deployment, the resolution path is your engineering team, the GitHub issues page, and the community Discord, not a support ticket with a contractual response time.

Model flexibility is genuinely interesting. Because OpenClaw is model-agnostic, you can run different models for different tasks: a fast, cheap local model for routine data lookups, a more capable commercial model for tasks where the quality of the output matters to a downstream customer or decision. OpenAI Agents and Claude Agents lock you into their respective model families.
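Per-task model selection can be as simple as a routing table. The sketch below is hypothetical: the task categories, model names, and the table itself are illustrative, and the article does not describe how OpenClaw configures per-task models. Unknown task kinds fall back to the local model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    base_url: str  # any OpenAI-compatible API
    model: str


# Hypothetical routing table: a cheap local model for routine work,
# a commercial model where the output reaches a customer.
ROUTES = {
    "data_lookup": ModelEndpoint("http://localhost:11434/v1", "llama3"),
    "customer_reply": ModelEndpoint("https://api.openai.com/v1", "gpt-4o"),
}
DEFAULT_ROUTE = ROUTES["data_lookup"]


def pick_model(task_kind: str) -> ModelEndpoint:
    """Return the endpoint for a task category, falling back to the local model."""
    return ROUTES.get(task_kind, DEFAULT_ROUTE)
```

Defaulting to the local endpoint is a deliberate choice. A task nobody has classified yet stays on your own hardware rather than going to an external API.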

Who Should Use OpenClaw?

OpenClaw is a strong fit for four kinds of teams.

Regulated industries. Healthcare, finance, legal, and government teams often cannot route patient data, financial records, or privileged communications through external APIs, even with contractual data processing agreements in place. OpenClaw's self-hosted model eliminates the data residency problem at the architecture level rather than the contract level.

Cost-sensitive teams with high automation volume. If your team runs a large number of routine automations, per-token pricing from commercial providers adds up quickly. OpenClaw's model, where you pay for infrastructure rather than tokens, is often significantly cheaper at scale. The break-even point depends on your volume and the models you use, but for most teams running more than a few hundred thousand operations per month, self-hosting is worth the engineering investment.
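The break-even point is simple arithmetic once you estimate tokens per operation. The inputs below are hypothetical placeholders, not measured figures: 2,000 tokens per operation, $1.00 per million tokens, and a $400/month server capable of running a local model. The sketch also ignores engineering time, which is the main hidden cost of self-hosting.

```python
def monthly_token_cost(ops_per_month: int, tokens_per_op: int, usd_per_million_tokens: float) -> float:
    """Per-token spend for a month of agent operations."""
    return ops_per_month * tokens_per_op * usd_per_million_tokens / 1_000_000


def break_even_ops(server_usd_per_month: float, tokens_per_op: int, usd_per_million_tokens: float) -> float:
    """Monthly operation count above which a fixed-cost server is cheaper than per-token pricing."""
    cost_per_op = tokens_per_op * usd_per_million_tokens / 1_000_000
    return server_usd_per_month / cost_per_op


# Hypothetical inputs: 2,000 tokens per operation, $1.00 per million tokens, $400/month server.
api_cost = monthly_token_cost(500_000, 2_000, 1.00)
threshold = break_even_ops(400, 2_000, 1.00)
print(f"API cost at 500k ops: ${api_cost:,.0f}/month; break-even at ~{threshold:,.0f} ops/month")
```

With these numbers, 500,000 operations cost about $1,000 per month in tokens, and the server pays for itself above roughly 200,000 operations per month. Substitute your own token counts and prices before drawing conclusions.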

Developers who want control over the full stack. OpenClaw is open source. You can read every line of the connector code, modify the runtime behavior, add custom connectors for internal tools, and extend the agent's capabilities in ways that are simply not possible with a black-box API. If your team values understanding and controlling the systems you build on, that matters.

Privacy-first organizations. Some teams, particularly those handling customer data in Europe or in jurisdictions with strict data localization requirements, need to be able to demonstrate that data never left their own infrastructure. OpenClaw makes that demonstrable.

If you want to explore AI agent development and need a framework that you can fully customize and audit, OpenClaw deserves serious evaluation.

Who Should Not Use OpenClaw?

OpenClaw is the wrong choice for three kinds of teams.

Teams without DevOps capacity. Running OpenClaw in production requires someone who can manage Docker containers, handle infrastructure updates, monitor resource usage, debug connector failures, and keep the runtime patched. If your team does not have that capacity in-house, the operational overhead will quickly outweigh the cost or privacy benefits.

Teams where model quality is non-negotiable. If your use case requires the best available reasoning, instruction following, or code generation, and you need it consistently, OpenClaw with a local model is not a substitute for GPT-4o or Claude. The gap in model capability is real. OpenClaw with a commercial API endpoint narrows that gap, but you lose some of the cost advantage.

Teams that need a managed SLA. If your automation pipeline is customer-facing or business-critical, you need a predictable escalation path when something breaks. OpenClaw does not offer that. You are responsible for your own uptime, your own monitoring, and your own incident response.

That said, self-hosting is not all-or-nothing. Many teams run OpenClaw for internal automations where downtime is tolerable, while using commercial agents for customer-facing workflows. Either way, be realistic about the ongoing maintenance commitment before you start.

Foundation Governance: What It Means for Long-Term Trust

The creator of OpenClaw joined OpenAI shortly after the project went viral. This raised an obvious question: what happens to an open-source project when the person who built it joins one of the commercial competitors?

The answer, in OpenClaw's case, is the OpenClaw Foundation. Before joining OpenAI, the creator transferred governance of the project to an independent foundation. The foundation controls the project roadmap, the connector registry quality standards, the release process, and the trademark. OpenAI has no special governance rights over OpenClaw.

This is not unprecedented in open source. The Apache Software Foundation, the Linux Foundation, and similar organizations exist precisely to decouple project governance from any single company's interests. The OpenClaw Foundation follows a similar model.

What foundation governance means in practice: no single company can close-source the project, change the license in a way that disadvantages the community, or redirect the roadmap to serve a commercial interest. The foundation's bylaws require multi-stakeholder governance, public roadmap discussions, and transparent decision-making.

For teams evaluating whether to build critical infrastructure on top of OpenClaw, foundation governance is a meaningful risk reduction. It does not guarantee the project will be maintained indefinitely, but it does significantly reduce the risk of a single-company decision ending the project or changing its terms.

If you are evaluating OpenClaw alongside managed commercial options for enterprise use, it is worth reading the OpenClaw and NemoClaw enterprise setup guidance, which covers the additional tooling and support structures available for production deployments.

Getting Started with OpenClaw

OpenClaw's setup involves four steps. This is not a full tutorial, but here is the high-level overview.

Step 1: Run the Docker container. The OpenClaw runtime is distributed as a Docker image. A basic docker-compose.yml gets the runtime, a PostgreSQL database for state persistence, and a Redis instance for queue management running in under 10 minutes on most machines.
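Once `docker compose up` returns, the containers may still be starting. A small readiness check helps before you move on. This sketch assumes the compose file publishes PostgreSQL and Redis on their default ports (5432 and 6379) on localhost. The article does not give the compose file or the OpenClaw runtime's own port, so neither is assumed here.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout_s: float = 30.0, interval_s: float = 0.5) -> bool:
    """Poll until a TCP port accepts connections or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval_s):
                return True  # something is listening
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval_s)


# Default ports, assuming the compose file publishes them on localhost.
SERVICES = {"postgres": 5432, "redis": 6379}
```

For example, `all(wait_for_port("localhost", p) for p in SERVICES.values())` returns `True` once both services accept connections. An open port only shows that something is listening, not that the database schema or queue is ready.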

Step 2: Connect a model. Point the runtime at your model endpoint. If you are using Ollama, install it locally, pull a model, and point OpenClaw at the Ollama API. If you are using a commercial provider, add your API key to the environment configuration.
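After `ollama pull llama3`, pointing the runtime at a model is mostly a matter of configuration. The sketch below resolves a model endpoint from environment variables. `OPENAI_API_KEY` is the conventional name for an OpenAI key. `MODEL_BASE_URL` and `MODEL_NAME` are hypothetical, not OpenClaw's documented settings. With no key set, the sketch falls back to a local Ollama server.

```python
import os
from typing import Mapping, Optional

OLLAMA_BASE_URL = "http://localhost:11434/v1"  # Ollama's OpenAI-compatible API
OPENAI_BASE_URL = "https://api.openai.com/v1"


def resolve_model_config(env: Optional[Mapping[str, str]] = None) -> dict:
    """Pick the model endpoint from environment variables (hypothetical names).

    With OPENAI_API_KEY set, use the commercial API; otherwise fall back to local Ollama.
    MODEL_BASE_URL and MODEL_NAME override either default.
    """
    env = os.environ if env is None else env
    api_key = env.get("OPENAI_API_KEY")
    if api_key:
        base_url, model = OPENAI_BASE_URL, "gpt-4o"
    else:
        base_url, model = OLLAMA_BASE_URL, "llama3"
    return {
        "base_url": env.get("MODEL_BASE_URL", base_url),
        "model": env.get("MODEL_NAME", model),
        "api_key": api_key,
    }
```

Accepting an explicit `env` mapping keeps the function easy to test without touching the real process environment.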

Step 3: Configure your first connector. The connector configuration is YAML-based. Each connector has a configuration file where you provide your authentication credentials and any per-connector settings. The documentation covers each of the 50+ connectors individually.
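Because connector files are YAML, a quick pre-flight check can catch mistakes before the runtime does. The sketch operates on the dictionary a loader such as PyYAML's `yaml.safe_load` would return. The field names (`connector`, `auth`, `settings`) and the `${ENV_VAR}` convention for secrets are assumptions for illustration, not OpenClaw's documented schema.

```python
from typing import Any

ALLOWED_AUTH_TYPES = {"oauth", "api_key", "custom"}  # the auth styles the article lists


def validate_connector_config(cfg: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the config looks usable.

    `cfg` is what a YAML loader such as PyYAML's yaml.safe_load would return.
    The field names here are hypothetical, not OpenClaw's documented schema.
    """
    problems = []
    if not cfg.get("connector"):
        problems.append("missing 'connector' name")
    auth = cfg.get("auth") or {}
    if auth.get("type") not in ALLOWED_AUTH_TYPES:
        problems.append(f"auth.type must be one of {sorted(ALLOWED_AUTH_TYPES)}")
    for key, value in auth.items():
        # Credentials should reference environment variables, not be pasted inline.
        if key != "type" and isinstance(value, str) and not value.startswith("${"):
            problems.append(f"auth.{key} looks like an inline secret; use ${{ENV_VAR}} instead")
    return problems


slack_cfg = {
    "connector": "slack",
    "auth": {"type": "oauth", "token": "${SLACK_BOT_TOKEN}"},
    "settings": {"channel": "#support"},
}
```

Keeping credentials out of the YAML files also means the configuration can live in version control without exposing secrets.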

Step 4: Write your first agent task. OpenClaw accepts tasks via a REST API or through the built-in chat interface. Start with a simple, well-defined task (for example: "check the Slack #support channel for messages tagged [urgent] and create a Jira ticket for each one") and iterate from there.
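A well-defined first task has a further benefit: you can check each run mechanically while you iterate. The sketch below compares the tickets an agent run created against what the Slack example should have produced. The data is hypothetical, and it assumes ticket titles copy the message text. A real check would more likely match on a message link or ID.

```python
def expected_tickets(messages: list[dict[str, str]]) -> set[str]:
    """What a correct run should file: one ticket per message tagged [urgent]."""
    return {m["text"] for m in messages if "[urgent]" in m["text"]}


def score_run(messages: list[dict[str, str]], created_titles: list[str]) -> dict[str, list[str]]:
    """Compare the tickets an agent run actually created against the expected set."""
    expected = expected_tickets(messages)
    created = set(created_titles)
    return {"missing": sorted(expected - created), "extra": sorted(created - expected)}


# Hypothetical snapshot of #support and of the tickets one agent run created.
channel = [
    {"text": "[urgent] checkout returns 500"},
    {"text": "thanks for the quick fix!"},
    {"text": "[urgent] password reset emails not sent"},
]
result = score_run(channel, ["[urgent] checkout returns 500", "thanks for the quick fix!"])
print(result)
```

Here the run missed one urgent message and filed a ticket for a non-urgent one. Both are signals to tighten the task instruction before adding more steps.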

If your team wants support for a more complex OpenClaw deployment, custom workflow automation services are available to handle the integration design and configuration.

Key Takeaways

OpenClaw is a real product solving a real problem. It is not a chatbot wrapper or a tutorial project. It is a self-hosted AI agent runtime with a growing ecosystem of pre-built connectors for business applications.

It went viral because the timing was right: a privacy-conscious developer audience, a backdrop of high-profile AI data incidents, and a single influential tweet combined to amplify a genuinely novel piece of open-source software to an audience far larger than the project's initial niche.

Foundation governance reduces the long-term risk of building on it. The creator joining OpenAI is less alarming than it sounds, because the project is no longer under the creator's control.

The honest trade-off: OpenClaw gives you data privacy, cost efficiency, model flexibility, and full codebase auditability. In exchange, it asks for DevOps capacity, setup effort, and tolerance for community-paced maintenance rather than a managed SLA.

For regulated industries, cost-sensitive high-volume teams, and developers who value control, it is worth serious evaluation. For teams that need managed reliability or that do not have the operational capacity to run their own infrastructure, the commercial alternatives remain the pragmatic choice.

If you want to explore whether OpenClaw fits your automation use case, reach out to discuss.

FAQ

Is OpenClaw free?

The software itself is free and open-source. You pay for whatever infrastructure you run it on (your own servers or a cloud provider) and for any language model API costs if you use a commercial endpoint. There is no per-seat or per-operation fee from OpenClaw itself.

Is OpenClaw safe to use in production?

It depends on your definition of "safe." The code is open-source and can be audited. The runtime has been deployed in production by a growing number of teams since early 2026. However, there is no managed SLA, no official support contract, and no commercial vendor responsible for uptime. For internal automations with moderate criticality, many teams consider it production-ready. For customer-facing, revenue-critical workflows, most teams add additional reliability layers or use it alongside commercial tooling.

Does OpenClaw work with ChatGPT?

OpenClaw works with any model that exposes an OpenAI-compatible API, which includes OpenAI's GPT models. You can point OpenClaw at OpenAI's API using your API key, and it will use GPT-4o (or whatever model you specify) as its reasoning engine. In this configuration, everything the agent sends to the model goes through OpenAI's infrastructure, including any content it has read through connectors and placed in its prompts. The connector calls themselves (to your Slack, Jira, CRM, and so on) are still made from your own server.

What is the OpenClaw Foundation?

The OpenClaw Foundation is the independent governance body that now controls the OpenClaw project. It was established before the project's creator joined OpenAI. The foundation controls the project roadmap, connector registry standards, release process, and trademark. It operates on a multi-stakeholder governance model, meaning no single company (including OpenAI) has special governance rights over the project.

How does OpenClaw compare to n8n?

n8n is a workflow automation tool that executes predefined sequences of steps. OpenClaw is an AI agent runtime that interprets natural-language instructions and decides which steps to take at runtime. They solve different problems. Many teams use both: n8n for deterministic, well-defined workflows where predictability matters, and OpenClaw for flexible, instruction-driven automations where the task varies and you want the agent to handle the routing decisions.

Can I use OpenClaw with a fully local model, with no data leaving my network?

Yes. If you configure OpenClaw with Ollama and a locally hosted model (Llama 3, Mistral, Qwen, or similar), and your connectors only talk to systems inside your network (your own CRM, a self-hosted Jira, or other on-premises tools), no data needs to leave your network at any point in the pipeline. This is one of the primary use cases the project was designed for. Connectors to hosted services such as Slack or Gmail, by contrast, necessarily send data to those providers.
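To back the "no data leaves the network" claim, you can audit every endpoint your configuration points at. The sketch uses Python's `ipaddress` and `urllib.parse` modules to flag anything not provably local. The endpoint list is hypothetical, and the article does not describe a built-in OpenClaw audit feature. DNS names are reported as "unverified" rather than resolved, because a hostname alone says nothing about where it points.

```python
import ipaddress
from urllib.parse import urlparse


def classify_endpoint(url: str) -> str:
    """Label a configured URL as 'local', 'external', or 'unverified' (a DNS name)."""
    host = urlparse(url).hostname or ""
    if host == "localhost":
        return "local"
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        return "unverified"  # a hostname: resolve it and check the address before trusting it
    return "local" if (ip.is_private or ip.is_loopback) else "external"


def endpoints_to_review(urls: list[str]) -> list[str]:
    """Everything not provably on your own network."""
    return [u for u in urls if classify_endpoint(u) != "local"]


# Hypothetical endpoints pulled from a model config and connector configs.
configured = [
    "http://localhost:11434/v1",        # Ollama
    "http://10.0.3.7:8080/rest/api/2",  # self-hosted Jira on the LAN
    "https://api.openai.com/v1",        # would send prompts off-network
]
print(endpoints_to_review(configured))
```

An empty review list is a simple, demonstrable artifact to show an auditor. Anything flagged needs either a justification or a configuration change.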

What connectors are available?

As of early 2026, the official connector library covers more than 50 applications including WhatsApp, Telegram, Slack, Discord, HubSpot, Salesforce, Gmail, Google Calendar, Google Drive, Notion, Airtable, Jira, Linear, Zendesk, Intercom, GitHub, GitLab, and a generic REST connector for custom endpoints. The community connector registry (managed by the OpenClaw Foundation) adds additional connectors contributed by the broader community.

How does the NemoClaw enterprise variant differ?

NemoClaw is a distribution of OpenClaw maintained specifically for enterprise deployments. It adds enterprise-specific features including role-based access controls, audit log exports, high-availability clustering, and optional commercial support contracts. It is built on top of the open-source OpenClaw core and is governed by the same foundation. Teams that need enterprise-grade reliability and support typically use NemoClaw rather than the community OpenClaw distribution. See the OpenClaw and NemoClaw enterprise setup page for details.

Sources

- OpenClaw GitHub repository star count data, March 2026

- Sam Altman tweet, January 2026 (cited in GitHub discussions and community posts)

- Fortune: "The GitHub Repository That Broke the Star Counter," February 2026

- OpenClaw Foundation governance documentation, openclaw.foundation