Our engineers stopped debating which AI tools to use. We just showed up to work, and the stack converged on the same six tools: every project, every time. After building 200+ AI systems for clients across the US and EU, here is exactly what an AI-native team uses in 2026, and why each tool earned its place.

This is not a vendor roundup. It is a working configuration. Every tool listed here is in active production use at AY Automate. We will cover how each one fits into what we call intent coding: the practice of specifying outcomes precisely enough that the stack executes autonomously, with engineers focused on judgment rather than implementation.

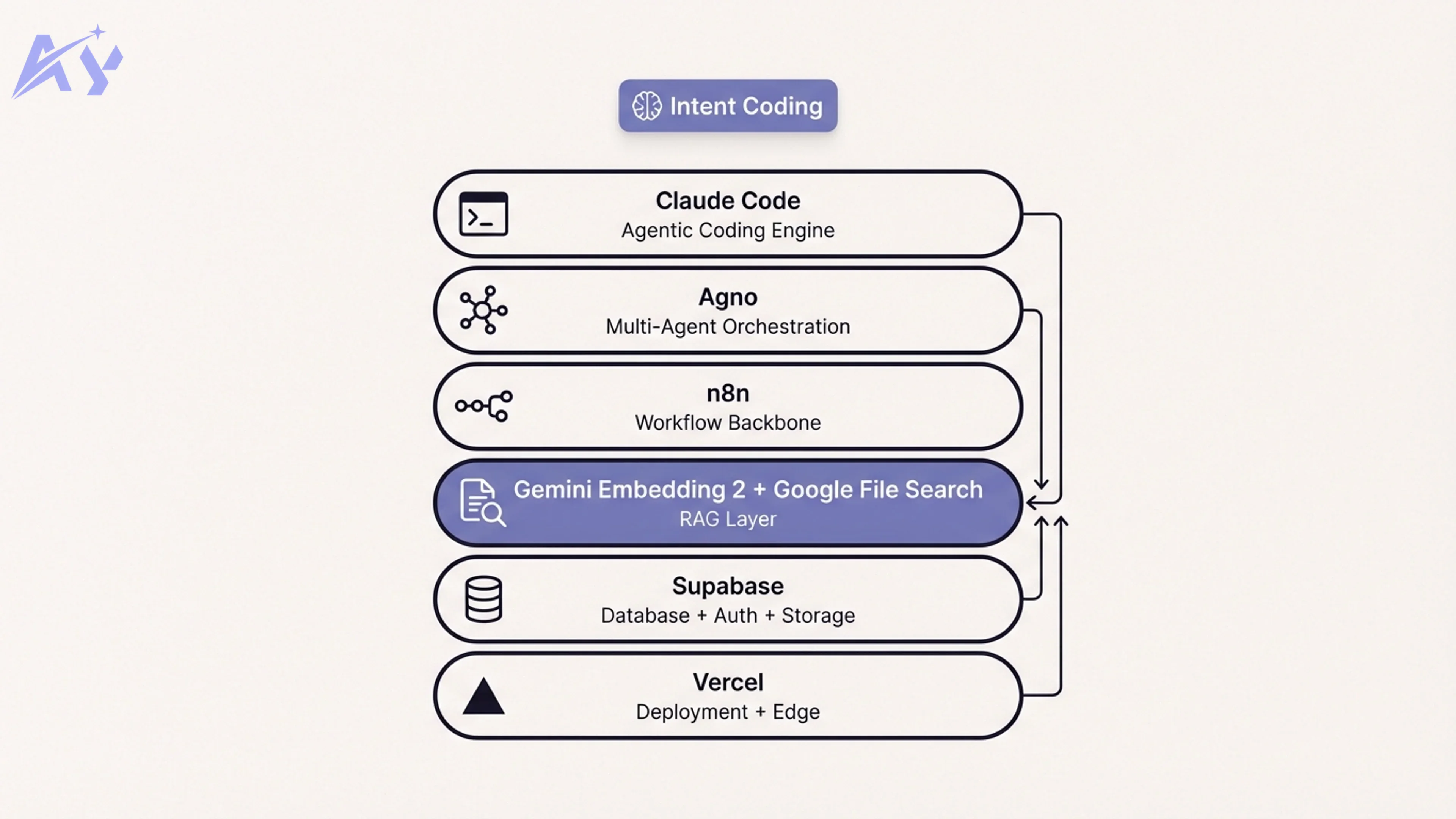

What "Intent Coding" Actually Means

The term "vibe coding" gets thrown around to mean prompting loosely and hoping for the best. Intent coding is the opposite.

Intent coding means writing specifications so precise that an AI agent can execute them without ambiguity. No handholding, no mid-stream corrections, no "fix this and also try that." You define the outcome, the constraints, the success criteria, and the integration points. Then the stack runs.
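Here is a minimal Python sketch that makes those four parts concrete. It models an intent spec as a data structure and checks that the spec is complete before anything goes to an agent. The `IntentSpec` class and its field names are hypothetical and simply mirror the sentence above. Claude Code and the other tools do not require this format.

```python
from dataclasses import dataclass, field


@dataclass
class IntentSpec:
    """Hypothetical intent spec: the four parts named in the text."""

    outcome: str
    constraints: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)
    integration_points: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of any sections left empty, in a fixed order."""
        missing = [] if self.outcome.strip() else ["outcome"]
        for name in ("constraints", "success_criteria", "integration_points"):
            if not getattr(self, name):
                missing.append(name)
        return missing

    def is_ready(self) -> bool:
        """Only a spec with every section filled goes to the agent."""
        return not self.missing_fields()

    def to_prompt(self) -> str:
        """Render the spec as plain text an agent can consume."""
        def section(title: str, items: list[str]) -> str:
            return title + ":\n" + "\n".join(f"- {item}" for item in items)

        return "\n\n".join([
            "Outcome:\n" + self.outcome,
            section("Constraints", self.constraints),
            section("Success criteria", self.success_criteria),
            section("Integration points", self.integration_points),
        ])


if __name__ == "__main__":
    spec = IntentSpec(
        outcome="Add a /health endpoint that reports database connectivity",
        constraints=["No new dependencies", "Respond within 200 ms"],
        success_criteria=["Returns HTTP 200 with db=ok when the database is reachable"],
        integration_points=["Existing API router"],
    )
    print(spec.is_ready())
    print(spec.to_prompt())
```

The class matters less than the discipline behind `is_ready()`. If any section is empty, the spec goes back to the engineer instead of to the agent.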

The difference in output quality is not marginal. It is structural. When engineers on our team write tight intent specs, they consistently produce production-grade builds 3-4x faster than teams relying on traditional implementation cycles. That is not a benchmark claim. That is the observed output ratio that our clients pay for.

The tools below are what make that ratio possible.

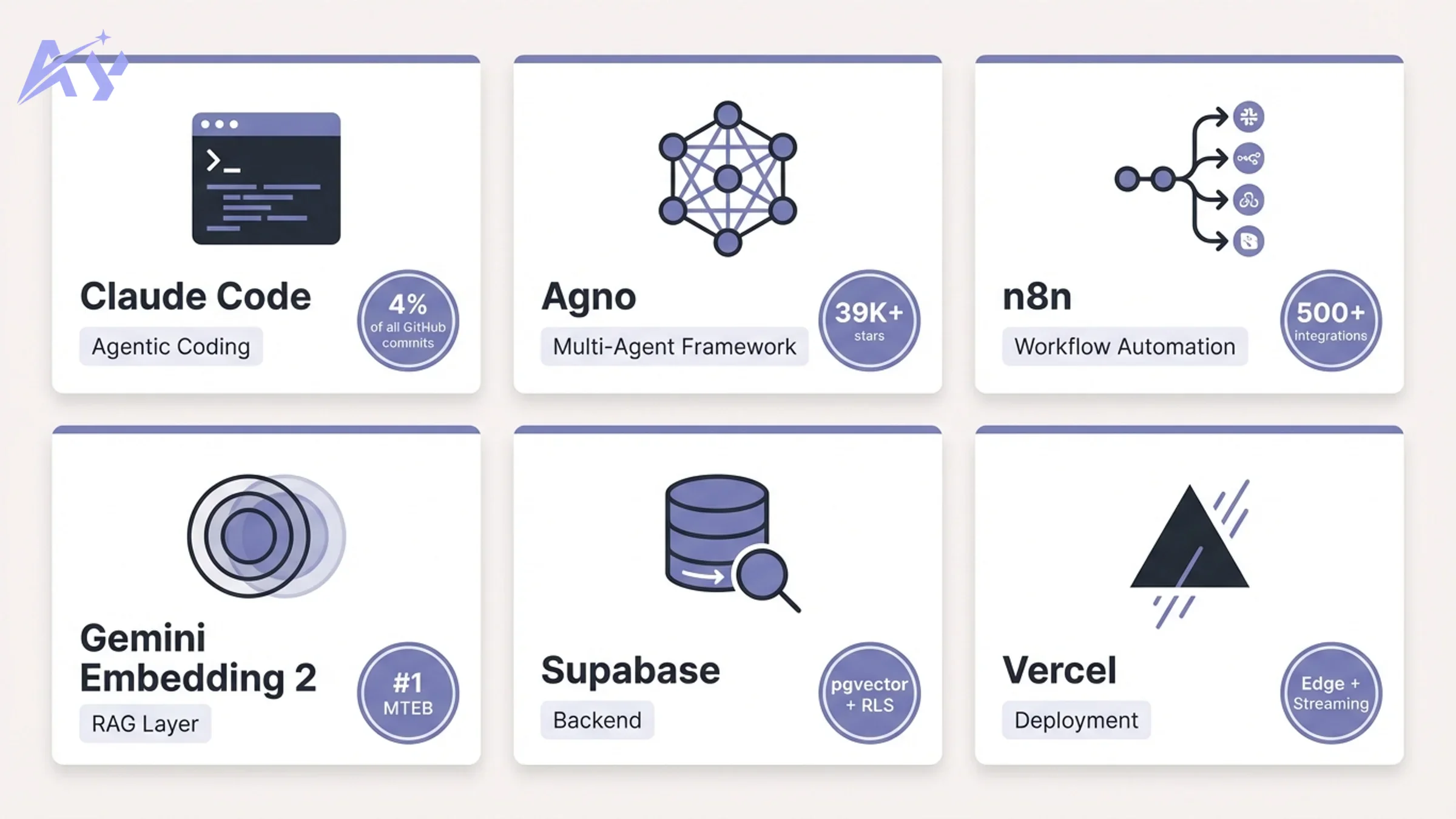

Claude Code: The Agentic Coding Engine

Claude Code is the foundation of how our engineers interact with codebases. It is not a tab-complete tool. It is a terminal-resident agent that reads your full codebase, plans multi-file changes, executes them, runs tests, and iterates on failures, all without you touching the keyboard.

The capabilities that matter most in production:

- Multi-agent orchestration: Claude Code can spin up parallel sub-agents for different parts of a task. A lead agent coordinates, assigns subtasks, and merges results. A migration that would take a mid-level engineer two weeks can close in under 48 hours.

- Codebase-level reasoning: It understands relationships across hundreds of files, not just the file you have open. It tracks how a schema change ripples into API handlers, validation logic, and frontend types simultaneously.

- Skills system (SKILL.md): Custom playbooks extend what the agent knows how to do. We author skills for every repeating pattern: n8n workflow deployment, Prisma migration sequences, RAG pipeline scaffolding. Once a skill exists, any engineer on the team can invoke it without knowing the internals.
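The lead-agent pattern in the first bullet is a fan-out/fan-in: split the task, run sub-agents concurrently, then merge their results in order. Below is a minimal asyncio sketch of that shape. It uses a hypothetical `run_subagent` coroutine in place of real sub-agent calls and shows the coordination logic only. It is not how Claude Code implements sub-agents internally.

```python
import asyncio


async def run_subagent(name: str, subtask: str) -> str:
    """Hypothetical stand-in for a sub-agent. A real one would plan and edit
    files; here a short sleep simulates the work."""
    await asyncio.sleep(0.01)
    return f"{name}: done ({subtask})"


async def lead_agent(task: str, subtasks: list[str]) -> dict:
    """Fan out subtasks to concurrent sub-agents, then merge the results."""
    # Fan out: one coroutine per subtask, all scheduled at once.
    results = await asyncio.gather(
        *(run_subagent(f"agent-{i}", sub) for i, sub in enumerate(subtasks))
    )
    # Fan in: gather() returns results in the order the subtasks were given.
    return {"task": task, "results": list(results)}


if __name__ == "__main__":
    report = asyncio.run(lead_agent(
        "migrate billing module",
        ["update schema", "rewrite API handlers", "regenerate frontend types"],
    ))
    for line in report["results"]:
        print(line)
```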

Claude Code is now authoring roughly 4% of all commits on GitHub. Stripe deployed it to 1,370 engineers, and one team completed a 10,000-line Scala-to-Java migration in four days. Ramp cut incident investigation time by 80% after integrating it into their workflow.

At AY Automate, Claude Code is the interface through which every other tool in this stack gets configured, debugged, and shipped. Our AI code security practice is built on top of it: we use it to audit the agent-generated code it produces, closing the loop on quality before anything reaches production.

Agno: The Multi-Agent Orchestration Layer

Once you need more than one agent doing different things in parallel, you need a framework. Agno is what we use.

Agno is an open-source Python framework built for production multi-agent systems. It has three integrated layers that map directly to what a real deployment needs:

| Layer | What it does | Why it matters |
|---|---|---|
| Python SDK | Build agents, teams, and workflows | Clean, composable, Pythonic API |
| AgentOS runtime | Stateless FastAPI deployment | Horizontal scaling, no session affinity needed |
| Control plane UI | Monitor and manage agents | Visibility into production runs |
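Statelessness removes the need for session affinity because no replica holds conversation state in memory. Every request loads state from a shared store and writes it back. The sketch below shows that idea in plain Python, with a hypothetical in-memory `SessionStore` standing in for a real database or cache. It is a generic illustration, not AgentOS code.

```python
import json


class SessionStore:
    """Hypothetical shared store. In production this would be a database or
    cache that every replica can reach."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def load(self, session_id: str) -> list[str]:
        return json.loads(self._data.get(session_id, "[]"))

    def save(self, session_id: str, history: list[str]) -> None:
        self._data[session_id] = json.dumps(history)


def handle_request(store: SessionStore, session_id: str, message: str) -> list[str]:
    """Stateless handler: all state is read from and written back to the
    shared store, so nothing survives in process memory between calls."""
    history = store.load(session_id)
    history.append(message)
    store.save(session_id, history)
    return history


if __name__ == "__main__":
    shared = SessionStore()
    handle_request(shared, "s1", "hello")             # served by replica A
    print(handle_request(shared, "s1", "follow-up"))  # served by replica B
```

Any replica can serve the next request for a session, so a load balancer can route traffic freely.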

The Teams and Workflows abstractions are what make Agno production-ready rather than just a demo framework. A Team lets you define specialized agents (researcher, analyst, writer, QA) that collaborate on a task. A Workflow provides the execution pattern: sequential, parallel, or conditional.

In practice, this means we can build a client-facing AI system where one agent handles document retrieval, a second handles classification, a third drafts responses, and a fourth validates against business rules. Every step is coordinated, traceable, and scalable. AgentOS turns an agent into a production API in roughly 20 lines of code.
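Here is a framework-agnostic sketch of that four-agent pipeline in plain Python. It has sequential handoffs, a conditional validation gate, and a trace of every step. The agent functions are hypothetical stubs; a real system would call an LLM in each one. In Agno, these roles would be expressed with its own Team and Workflow abstractions, and that API is not reproduced here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Trace:
    """Ordered record of (agent, output) pairs so every handoff is traceable."""

    steps: list[tuple[str, str]] = field(default_factory=list)

    def log(self, agent: str, output: str) -> str:
        self.steps.append((agent, output))
        return output


# Hypothetical single-purpose agents; a real system would call an LLM in each.
def retrieve(query: str) -> str:
    return f"docs for '{query}'"


def classify(docs: str) -> str:
    return "refund_request" if "refund" in docs else "general"


def draft(category: str, docs: str) -> str:
    return f"[{category}] reply based on {docs}"


def validate(reply: str, banned: tuple[str, ...] = ("guarantee",)) -> bool:
    """Hypothetical business rule: reject drafts that use banned terms."""
    return not any(term in reply.lower() for term in banned)


def run_pipeline(query: str) -> tuple[Optional[str], Trace]:
    """Sequential handoffs, then a conditional gate on the validator's verdict."""
    trace = Trace()
    docs = trace.log("retriever", retrieve(query))
    category = trace.log("classifier", classify(docs))
    reply = trace.log("writer", draft(category, docs))
    passed = validate(reply)
    trace.log("validator", "pass" if passed else "fail")
    return (reply if passed else None), trace


if __name__ == "__main__":
    reply, trace = run_pipeline("refund for order 42")
    print(reply)
    for agent, output in trace.steps:
        print(f"{agent}: {output}")
```

A failed validation returns `None` instead of a draft. The trace still records which agent produced what, so the failure can be debugged.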

With 39,000+ GitHub stars and daily commits, Agno is the fastest-growing AI agent framework in active production use. Our AI agent development work is built almost entirely on Agno for the orchestration layer.

n8n: The Workflow Backbone

n8n is where business logic lives. If Agno coordinates AI agents, n8n connects everything else: CRMs, databases, webhooks, communication platforms, data pipelines, and the AI agents themselves.

The 2.0 release in late 2025 introduced enterprise-grade security by default, a modernized AI Agent node with enhanced token management, and improved reliability at scale. The platform now supports 500+ integrations, self-hosted or cloud, with human-in-the-loop checkpoints at any stage.

What makes n8n the right choice over alternatives:

- Fair-code license: Self-hostable with full source visibility. No vendor lock-in on the automation backbone.

- Code escapes: When visual nodes hit their limits, you drop into JavaScript or Python inline. No wrapper abstraction forcing you to stay inside guardrails.

- Native AI Agent node: Connects directly to LLM providers, handles tool registration, manages conversation context. You can wire Claude, GPT-4, or a local Ollama model into any workflow step.

- Human approvals: Any workflow can pause and route to a human before proceeding. This matters for high-stakes operations where full automation is not appropriate.
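The human-approval checkpoint in the last bullet comes down to a small piece of logic: execute low-risk steps automatically, and pause high-risk ones until someone decides. In n8n this is configured in the workflow itself. The Python sketch below only models the decision, and it assumes a hypothetical dollar threshold as the risk signal.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    EXECUTED = "executed"
    PENDING_APPROVAL = "pending_approval"
    REJECTED = "rejected"


@dataclass
class Action:
    description: str
    amount_usd: float  # hypothetical risk signal, e.g. a refund amount


APPROVAL_THRESHOLD_USD = 500.0  # hypothetical policy value


def run_step(action: Action, approval: Optional[bool] = None) -> Status:
    """Run low-risk actions automatically; pause high-risk ones for a human.

    `approval` is None until a reviewer responds, then True or False.
    """
    if action.amount_usd <= APPROVAL_THRESHOLD_USD:
        return Status.EXECUTED
    if approval is None:
        return Status.PENDING_APPROVAL  # the workflow waits here
    return Status.EXECUTED if approval else Status.REJECTED
```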

Our custom workflow automation engagements almost always land on n8n as the integration layer. It is also the runtime that glues our Agno agent outputs into client-facing systems.

One pattern worth calling out: n8n handles the connective tissue between AI capabilities and business systems. Google's File Search API (covered next) indexes documents; Agno agents reason over them; n8n workflows deliver the results to Slack, trigger Salesforce updates, or push records into the client's data warehouse.
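The last hop in that chain is often just an HTTP call. The sketch below expresses the Slack delivery step in plain Python and assumes a Slack incoming webhook, which accepts a JSON body with a `text` field. The webhook URL and the shape of `agent_result` are hypothetical placeholders. The code builds the request but does not send it.

```python
import json
import urllib.request

# Hypothetical placeholder; Slack issues a real incoming-webhook URL per channel.
SLACK_WEBHOOK_URL = "https://hooks.slack.com/services/T000/B000/XXXX"


def build_slack_request(agent_result: dict) -> urllib.request.Request:
    """Turn an agent result into a Slack incoming-webhook POST (not sent)."""
    text = f"*{agent_result['title']}*\n{agent_result['summary']}"
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        SLACK_WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Sending the request takes one call, `urllib.request.urlopen(req)`. In an n8n workflow, the Slack node or an HTTP Request node does this job.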

Google File Search + Gemini Embedding 2: The RAG Layer

Retrieval-Augmented Generation is only as good as the retrieval layer. Most RAG implementations fail not because the LLM is weak, but because the retrieval is noisy.

Google File Search is a fully managed RAG system built directly into the Gemini API. It handles chunking, embedding, indexing, and dynamic context injection automatically. You upload documents, it stores and indexes them, and it retrieves the most relevant passages at query time with automatic citations. Storage and query-time embedding generation are free; indexing costs $0.15 per million tokens.
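With this pricing, estimating cost takes a single multiplication: tokens indexed, divided by one million, times $0.15. Take a hypothetical corpus of 20,000 documents averaging 2,500 tokens each, or 50 million tokens. Indexing it costs $7.50, and storage and query-time embeddings add nothing under the pricing quoted above. As a sketch:

```python
INDEXING_USD_PER_MILLION_TOKENS = 0.15  # indexing price quoted above


def file_search_indexing_cost(tokens_indexed: int) -> float:
    """Estimate indexing cost in USD. Storage and query-time embeddings are
    free under the quoted pricing, so only indexed tokens are counted."""
    return tokens_indexed / 1_000_000 * INDEXING_USD_PER_MILLION_TOKENS


if __name__ == "__main__":
    corpus_tokens = 20_000 * 2_500  # hypothetical: 20k docs x 2,500 tokens
    print(f"${file_search_indexing_cost(corpus_tokens):.2f}")
```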

The retrieval quality is powered by Gemini Embedding 2 (gemini-embedding-2-preview), which launched in March 2026. Here is why it is the most important embedding model release in years:

| Feature | Gemini Embedding 2 | Previous best |
|---|---|---|
| MTEB Multilingual rank | #1 | Runner-up |
| MTEB score margin | +5 percentage points | Baseline |
| Vector dimensions | 3,072 | Varies |
| Max input tokens | 8,192 | 512-4,096 |
| Modalities | Text, images, video, audio, PDFs | Text only |
| Architecture | Natively multimodal (Gemini) | Unimodal |

The multimodal dimension changes what RAG systems can do. A knowledge base is no longer limited to text documents. Product screenshots, instructional videos, audio recordings, and mixed-media PDFs all go into the same 3,072-dimensional vector space. One retrieval query can pull relevant context from across all of those formats simultaneously.

For enterprise clients, this eliminates the need to separate knowledge bases by content type and then merge results. One index, one query, full coverage.
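The toy retrieval sketch below shows what a single shared vector space buys you. Every item is ranked by cosine similarity against one query vector, whatever its modality. The three-dimensional vectors and file names are made up for illustration; real Gemini Embedding 2 vectors have 3,072 dimensions. This is not the File Search API, which performs this retrieval for you.

```python
import math

# Toy vectors standing in for real embeddings; values are invented.
INDEX = [
    ("pricing-page.png", "image", [0.9, 0.1, 0.0]),
    ("onboarding-video.mp4", "video", [0.2, 0.8, 0.1]),
    ("support-call.mp3", "audio", [0.1, 0.7, 0.3]),
    ("terms.pdf", "pdf", [0.0, 0.2, 0.9]),
]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def search(query_vec: list[float], k: int = 2) -> list[tuple[str, str]]:
    """Rank every item in one index by similarity, regardless of modality."""
    ranked = sorted(INDEX, key=lambda item: cosine(query_vec, item[2]), reverse=True)
    return [(name, modality) for name, modality, _ in ranked[:k]]
```

With a query vector of `[0.1, 0.9, 0.2]`, the video and the audio recording come back first, side by side in one result list, with no per-modality merge step.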

Our RAG pipeline architecture service is built on this layer. Combining Google File Search's managed infrastructure with Gemini Embedding 2's multimodal retrieval gives clients production-grade knowledge systems without the operational overhead of managing a custom vector database.

Supabase: Database, Auth, and Storage

Every client project needs a database layer, an authentication system, and file storage. Supabase provides all three in a single platform with a clean API and Postgres at the core.

For AI-native systems specifically, Supabase matters because:

- pgvector extension: Native vector storage inside Postgres. When you need a lightweight vector index alongside relational data, you do not need to run a separate service.

- Row-level security: Fine-grained access control defined in SQL. Multi-tenant AI systems with per-user data isolation are straightforward.

- Real-time subscriptions: Push changes to connected clients the moment they happen. Useful for AI systems where results arrive asynchronously.

- Storage: Direct file uploads with CDN delivery. We use it for document storage in RAG systems before indexing into Google File Search.
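As a sketch of the first two bullets working together, here are statements a Supabase project could run. They store embeddings next to relational data and restrict reads to each row's owner. The SQL is held as Python strings, alongside a helper that formats vectors in pgvector's text format. The `documents` table, its columns, and the 768-dimension size are hypothetical. `auth.uid()` is Supabase's helper for the requesting user's ID, and `<=>` is pgvector's cosine-distance operator.

```python
SCHEMA_SQL = """
create extension if not exists vector;

create table documents (
  id bigserial primary key,
  owner_id uuid not null,
  content text not null,
  embedding vector(768)
);

-- Row-level security: each signed-in user reads only their own rows.
alter table documents enable row level security;

create policy "owners read own documents"
  on documents for select
  to authenticated
  using (owner_id = auth.uid());
"""

# Nearest-neighbour query: smaller cosine distance means more similar,
# so ascending order returns the closest rows first.
KNN_SQL = """
select id, content
from documents
order by embedding <=> %s::vector
limit %s;
"""


def to_vector_literal(values: list[float]) -> str:
    """Format floats as pgvector's text input, e.g. '[0.1,0.2,0.3]'."""
    return "[" + ",".join(repr(float(v)) for v in values) + "]"
```

With a driver such as psycopg, the query would run as `cur.execute(KNN_SQL, (to_vector_literal(query_embedding), 5))`. The policy applies to the `authenticated` role that Supabase uses for signed-in requests. Connections using the service role bypass row-level security.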

Supabase removes the infrastructure decisions that slow down early builds. Our SaaS MVP development work uses it as the default backend for nearly every new product.

Vercel: Deployment and Edge

The deployment layer in an AI-native stack needs to handle two distinct patterns: standard server-side rendering and long-running streaming requests from AI models.

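Vercel handles both. Next.js App Router deployments go to Vercel's edge network by default, with automatic optimization, caching, and global distribution. For AI streaming endpoints, Vercel Functions support streaming responses, so users see tokens as the model generates them instead of waiting for the full completion. Functions still run under a configurable maximum duration. Streaming improves perceived latency; it does not remove time limits.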
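In practice, streaming usually means Server-Sent Events: each chunk of model output is written to the response as soon as it exists. The generator below is a framework-agnostic Python sketch of that framing. `fake_model` is a hypothetical stand-in for a streaming model client. The `[DONE]` sentinel is a common convention, not a requirement.

```python
import json
from collections.abc import Iterable, Iterator


def sse_stream(tokens: Iterable[str]) -> Iterator[str]:
    """Frame each model token as a Server-Sent Event so the client can
    render it immediately instead of waiting for the full reply."""
    for token in tokens:
        yield f"data: {json.dumps({'delta': token})}\n\n"
    yield "data: [DONE]\n\n"  # conventional end-of-stream marker


def fake_model(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model client."""
    for word in f"Echo: {prompt}".split():
        yield word + " "


if __name__ == "__main__":
    for event in sse_stream(fake_model("hello world")):
        print(event, end="")
```

Suppose the endpoint is a Python function on Vercel. This generator could then be returned through a streaming response type such as FastAPI's `StreamingResponse` with `media_type="text/event-stream"`.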

The integration between Vercel and the rest of this stack is tight. Supabase environment variables connect in one step. n8n webhooks route through Vercel API routes cleanly. Claude Code can scaffold and deploy a Next.js app directly from the terminal without leaving the development environment.

For clients who need staff to run these systems internally after delivery, we offer AI staff augmentation: engineers trained on this exact stack who embed in client teams and maintain production systems.

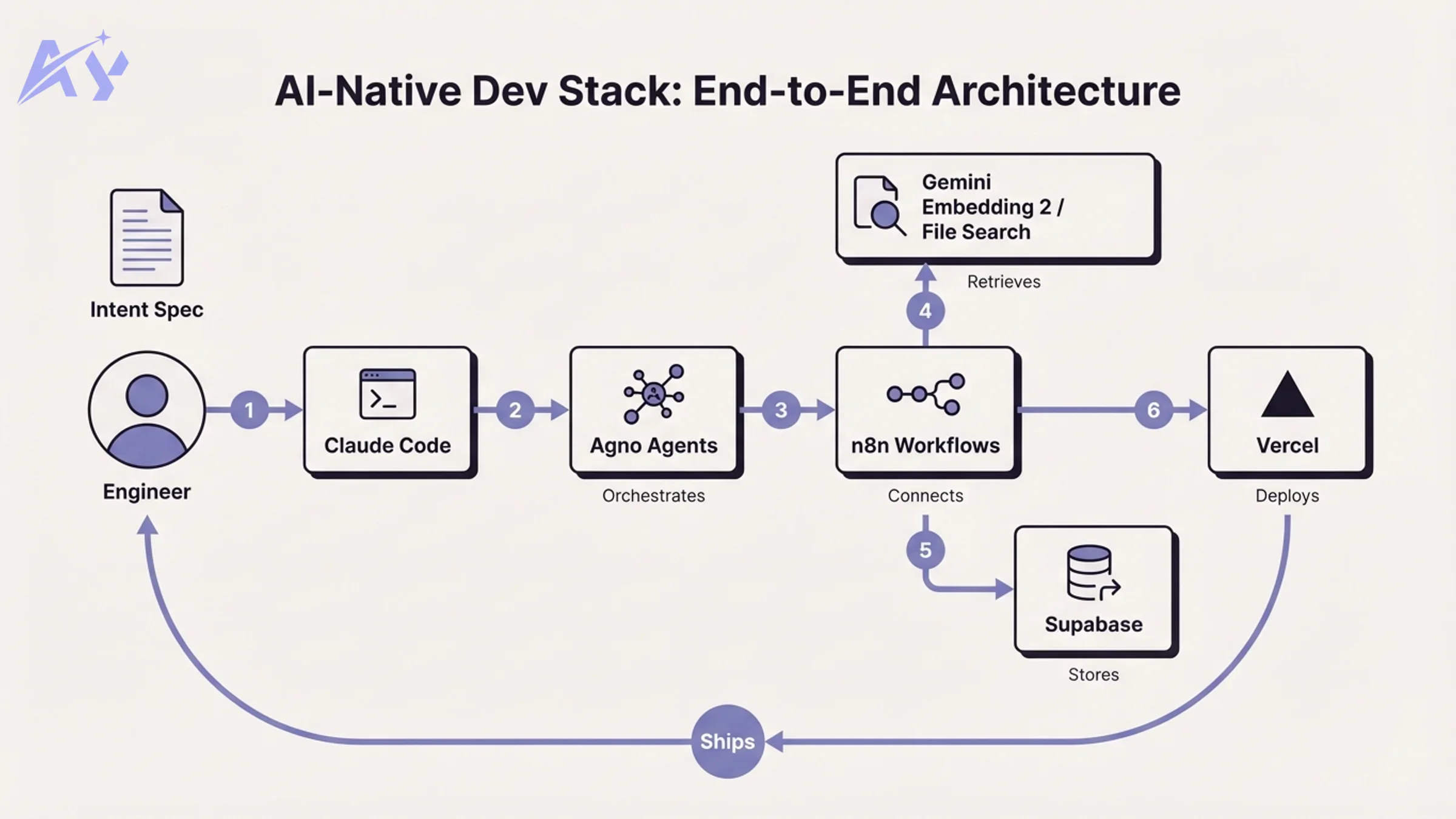

How the Stack Connects

Here is how these tools interact on a typical client engagement:

- Claude Code scaffolds the application architecture, writes API routes, and generates tests from intent specs

- Agno powers the AI agent layer: document Q&A, multi-step reasoning, automated decision workflows

- n8n connects the agent outputs to business systems: CRM updates, notifications, reporting pipelines

- Google File Search + Gemini Embedding 2 indexes the client's knowledge base and powers retrieval inside Agno agents

- Supabase stores relational data, handles user authentication, and serves as the document staging layer before indexing

- Vercel deploys the frontend and API layer with zero-configuration CI/CD

No black boxes. Every layer is observable, every handoff is traceable. That is a deliberate design constraint, not an accident of tooling choices.

Key Takeaways for Engineering Leaders

- Intent coding is a methodology, not a prompt style. Teams that write precise specifications into Claude Code get fundamentally different output than teams that prompt conversationally. The tooling rewards rigor.

- The RAG layer is the hardest part to get right. Google File Search + Gemini Embedding 2 is the most mature managed option available in 2026. Use it before building a custom vector pipeline.

- n8n is underused in AI stacks. Most teams treat it as a Zapier replacement. It is actually the production-grade orchestration layer that connects AI outputs to business operations.

- Agno removes the "framework from scratch" problem. Multi-agent systems built without a framework are expensive to maintain. Agno gives you a production runtime before you write the first agent.

- Security and quality gates belong in the stack, not in post-production review. We run AI code security audits as part of delivery, not as an afterthought.

If you are evaluating this stack for your team or need an engineering partner who already runs it in production, book a discovery call.

Sources: Claude Code (Anthropic), Agno Framework (agno.com), n8n AI Workflow Automation, Gemini Embedding 2 (Google AI for Developers), Google File Search Tool, Gemini Embedding 2 MTEB Benchmark

FAQ

What is Claude Code and how is it different from GitHub Copilot? Claude Code is a terminal-resident agentic coding assistant that reads your full codebase, plans multi-file changes, runs tests, and iterates autonomously. GitHub Copilot is best known as an inline autocomplete tool in the editor. Claude Code operates at the task level; inline completion operates at the line level.

What is intent-driven coding? Intent coding is the practice of specifying outcomes, constraints, and success criteria precisely enough that an AI agent can execute a task without clarifying questions or mid-stream corrections.

What is Agno and why use it over LangChain or CrewAI? Agno is an open-source Python framework for building and deploying multi-agent AI systems. Compared to LangChain, it has a cleaner API and a production runtime (AgentOS) built in. Compared to CrewAI, it provides better horizontal scaling and native tracing.

What makes Gemini Embedding 2 different from other embedding models? Gemini Embedding 2 (gemini-embedding-2-preview) is a natively multimodal embedding model. It maps text, images, video, audio, and PDFs into a single 3,072-dimensional vector space. It ranked #1 on the MTEB multilingual benchmark in March 2026, outperforming the next-best model by more than 5 percentage points.

What is Google File Search and how does it simplify RAG? Google File Search is a fully managed RAG system inside the Gemini API. It handles document ingestion, chunking, embedding, indexing, and retrieval automatically. You do not need to configure a separate vector database or manage chunking strategies.

Can n8n handle AI agent workflows in production? Yes. The n8n 2.0 release introduced enterprise-grade security and a modernized AI Agent node with improved token management. n8n supports 500+ integrations and can coordinate multiple AI agents with human-in-the-loop approval steps.

Taha builds and ships custom AI agents and workflow automations for AY Automate clients across SaaS, finance, and professional services.