AI agents went from experiment to production reality in 2026. Inquiries for autonomous, multi-step AI systems have surged over 1,400% in the past year alone, and the GitHub repositories powering them are accumulating stars faster than any other category in recent memory. The question is no longer whether to adopt agentic AI but which framework to build on.

The wrong choice locks you into a paradigm your team will fight for months. The right one gives you orchestration primitives, tool-calling, memory, and deployment patterns that match how your actual workflows run. In this guide, we compare the eight best open-source AI agent frameworks trending on GitHub right now, covering architecture, pricing, ideal use cases, and an honest take on each framework's limitations.

Best open-source AI agent frameworks: a brief overview

- OpenClaw: Best for self-hosted agents with broad integration coverage: a viral, privacy-first agent that connects 50+ apps while keeping the agent runtime on your own infrastructure.

- Agno: Best for lightweight, high-performance agents in production: a minimal Python framework that initializes agents in under 2 microseconds, with built-in memory, storage, and multimodal tool support.

- CrewAI: Best for multi-agent role-based orchestration: define agents as team members with distinct roles, goals, and tools, then let them collaborate on complex tasks.

- LangGraph: Best for stateful, graph-based agent workflows: build agents that can loop, branch, and pause at checkpoints without losing state between steps.

- AutoGen: Best for enterprise teams using Microsoft infrastructure: a research-backed framework for conversational multi-agent systems with deep Azure integration.

- Dify: Best for teams wanting a self-hostable LLM app platform: combines a visual workflow builder, RAG pipeline, and API layer into a single deployable service.

- RAGFlow: Best for document-heavy enterprise knowledge bases: a RAG engine with agentic retrieval, citation grounding, and support for messy real-world documents.

- Langflow: Best for no-code agent prototyping: a drag-and-drop visual builder on top of LangChain that exports flows as Python code.

| Tool name | Key strength | Pricing | Platforms |
|---|---|---|---|
| OpenClaw | Self-hosted AI agent with 50+ native integrations, no external API calls | Free, open-source; cloud plan TBA | Self-hosted, Docker, Web |
| Agno | Ultra-fast, lightweight Python agent framework with built-in memory and multimodal tools | Free, open-source; Agno Platform from $99/month | Python, API, Cloud |
| CrewAI | Role-based multi-agent orchestration with built-in collaboration primitives | Free, open-source; Enterprise plan available | Python, API, Cloud |
| LangGraph | Stateful graph-based agent workflows with checkpointing and human-in-the-loop | Free, open-source; LangSmith from $39/month | Python, JavaScript, Cloud |
| AutoGen | Microsoft-backed conversational multi-agent framework with Azure-native integrations | Free, open-source; Azure compute costs apply | Python, .NET, Azure |
| Dify | Self-hostable LLM app platform with visual workflow builder and RAG | Free, open-source; Cloud from $59/month | Self-hosted, Docker, Cloud, API |
| RAGFlow | Deep-document RAG engine with agentic retrieval and grounded citations | Free, open-source; Enterprise pricing on request | Self-hosted, Docker, API |
| Langflow | Visual drag-and-drop agent builder compiled to LangChain Python | Free, open-source; DataStax Cloud from $0.15/credit | Web, Self-hosted, API |

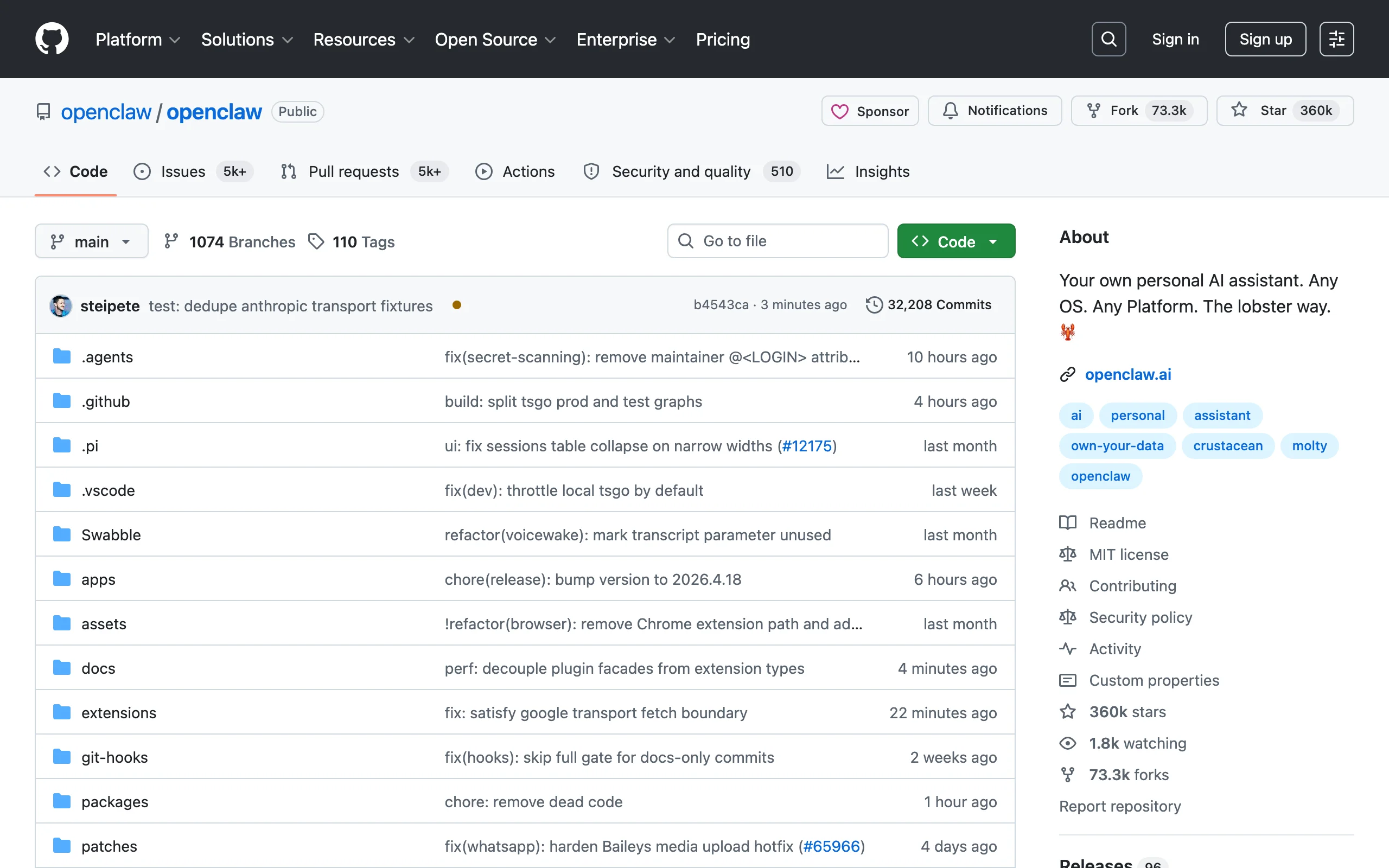

1. OpenClaw, best for self-hosted agents with broad integration coverage

OpenClaw is the breakout project of 2026. It went from roughly 9,000 stars to over 210,000 in a matter of weeks, earned a public endorsement from Sam Altman, and landed a Fortune feature within months of launch. The core premise is simple: an agentic AI assistant that runs entirely on your infrastructure and connects natively to over 50 tools including WhatsApp, Telegram, Slack, Discord, and most common business apps, without routing any data through external APIs.

For teams with data privacy requirements or those tired of paying token surcharges on cloud orchestration platforms, OpenClaw represents a credible alternative. Its integration library is pre-built rather than requiring custom glue code, which dramatically reduces the time from setup to first working agent.

Key features

- 50+ pre-built native integrations (messaging apps, CRMs, productivity tools)

- Fully self-hosted: all data stays on your infrastructure

- Compatible with local LLMs via Ollama and OpenAI-compatible APIs

- Docker-first deployment with one-command setup

- Active foundation governance after creator joined OpenAI

Best for

- Teams in regulated industries (legal, finance, healthcare) where data residency matters

- Developers who want ChatGPT-style UX over locally-run models like Llama or Mistral

- Startups replacing expensive API-dependent stacks with a self-hosted alternative

Pricing

- Free and open-source under MIT license

- Self-hosted: no usage fees, only your infrastructure costs

- Cloud-hosted plan in development; pricing not yet announced

Pros

- Integrations work out of the box rather than requiring connector code for each app

- Self-hosting eliminates per-token costs for high-volume use cases

- Community momentum means fast issue resolution and new connectors added weekly

Cons

- Cloud-managed option is not yet available, so teams without DevOps capacity need to manage their own deployment

- Still early: some integrations are community-maintained with varying quality

- No built-in agent memory store beyond conversation history; long-running task persistence requires custom work

2. Agno, best for lightweight high-performance agents in production

Agno (formerly Phidata) is built around a single engineering principle: agents should be fast, small, and composable. Where most frameworks accumulate abstractions, Agno strips them away. The result is an agent runtime that initializes in under 2 microseconds and uses roughly 3.75 KB of memory per agent instance, numbers that matter when you are running thousands of concurrent agents in production rather than a single prototype.

The framework ships with first-class support for memory (short-term, long-term via vector stores), structured tool definitions, multimodal inputs (text, images, audio, video), and a reasoning layer that lets agents think step-by-step before acting. Agno also supports multi-agent teams through a Coordinator pattern, making it a viable choice for complex orchestration without requiring a separate framework. Teams building production-grade AI agent development pipelines at scale often reach for Agno after hitting performance ceilings in heavier frameworks.
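To put the initialization and memory figures quoted above in perspective, here is a back-of-envelope calculation. It is only a sketch: it assumes 1 KB means 1,024 bytes and that overhead scales linearly with agent count, and the function name `fleet_overhead` is made up for illustration. It covers framework overhead only, not the cost of the LLM calls the agents make.

```python
# Back-of-envelope sizing from the figures quoted in the article.
# Assumptions (not from Agno's docs): 1 KB = 1024 bytes, linear scaling.
INIT_SECONDS_PER_AGENT = 2e-6   # "under 2 microseconds", used as an upper bound
MEMORY_KB_PER_AGENT = 3.75      # "roughly 3.75 KB" per agent instance


def fleet_overhead(agent_count: int) -> tuple[float, float]:
    """Return (total_init_seconds, total_memory_mb) for a fleet of agents."""
    total_init = agent_count * INIT_SECONDS_PER_AGENT
    total_memory_mb = agent_count * MEMORY_KB_PER_AGENT / 1024
    return total_init, total_memory_mb


init_s, mem_mb = fleet_overhead(10_000)
print(f"10,000 agents: <= {init_s * 1000:.0f} ms init, ~{mem_mb:.1f} MB")
```

Under these assumptions, ten thousand agents add at most about 20 ms of initialization time and roughly 37 MB of memory, which is why per-agent overhead only becomes visible at high concurrency.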

Key features

- Sub-2-microsecond agent initialization with minimal memory footprint

- Built-in memory layer: session memory, user memory, and long-term vector store support

- Multimodal tool support out of the box (text, images, audio, video)

- Multi-agent teams via Coordinator pattern with shared context

- Agno Platform: hosted observability, session replay, and deployment management

Best for

- Engineering teams building agents that run at high concurrency where per-agent overhead compounds

- Developers who want a typed, Pythonic API without heavyweight framework abstractions

- Teams that need multimodal agent capabilities without stitching together separate libraries

Pricing

- Free and open-source under the Mozilla Public License 2.0

- Agno Platform (hosted monitoring and deployment): from $99/month

- Enterprise: custom pricing with dedicated support

Pros

- Benchmark-leading initialization speed makes it practical for high-throughput production workloads

- Typed tool definitions reduce runtime errors and make agent capabilities inspectable

- Built-in observability via Agno Platform means you do not need to wire up a separate tracing stack

Cons

- Smaller community than CrewAI or LangGraph; fewer pre-built templates and third-party integrations

- The Coordinator multi-agent pattern is less expressive than LangGraph's graph model for complex branching workflows

- Agno Platform is proprietary; teams that want fully open-source observability need to integrate a separate open-source tracing tool or a custom solution

3. CrewAI, best for multi-agent role-based orchestration

CrewAI frames agent development around the metaphor of a workforce: you define agents with specific roles (Researcher, Writer, Analyst), assign them tools, and let them collaborate to complete a task. This role-based model maps naturally to how business processes actually work and makes it easier for non-ML engineers to reason about what the system is doing.

It ships with built-in task delegation, output passing between agents, and a process manager that handles sequential or hierarchical execution. The result is that you can prototype a three-agent research-and-summarize pipeline in under 50 lines of Python, and then extend it with custom tools, memory backends, and evaluation hooks as the workflow matures. CrewAI is one of the top choices for teams building AI agent development pipelines for sales, research, and content operations.
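The sequential, role-based model described above can be sketched in plain Python. This is not CrewAI's API: `RoleAgent` and `run_sequential` are hypothetical names, and each agent's `work` function is a stub standing in for an LLM call. The sketch only shows the core idea of passing one agent's output to the next.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RoleAgent:
    """Hypothetical stand-in for a role-based agent (not CrewAI's class)."""
    role: str
    goal: str
    work: Callable[[str], str]  # stub that replaces a real LLM call


def run_sequential(agents: list[RoleAgent], task: str) -> list[tuple[str, str]]:
    """Run agents in order, feeding each agent's output to the next one."""
    transcript = []
    current = task
    for agent in agents:
        current = agent.work(current)
        transcript.append((agent.role, current))
    return transcript


crew = [
    RoleAgent("Researcher", "Collect facts", lambda t: f"notes on: {t}"),
    RoleAgent("Analyst", "Extract the key point", lambda t: f"key point from [{t}]"),
    RoleAgent("Writer", "Draft a summary", lambda t: f"summary of [{t}]"),
]

transcript = run_sequential(crew, "agent frameworks")
final_output = transcript[-1][1]
```

A hierarchical process would replace the fixed loop with a manager that decides which agent runs next, which is where the delegation chains mentioned in the cons below come from.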

Key features

- Role-based agent definition with goals, backstory, and tool assignment

- Sequential and hierarchical process modes

- Native support for tool-calling with LangChain tools, custom functions, or web search

- Built-in memory: short-term, long-term, entity memory, and contextual storage

- CrewAI Flows for event-driven agentic pipelines

Best for

- Operations and RevOps teams automating multi-step research, outreach, or report generation

- Developers who want a readable, role-based abstraction rather than low-level graph construction

- Teams evaluating custom workflow automation before committing to a full build

Pricing

- Free and open-source under MIT license

- CrewAI Enterprise: custom pricing for compliance, dedicated support, and cloud deployment

Pros

- Role metaphor makes agent behavior easy to explain to non-technical stakeholders

- Built-in memory backends (including vector store support) reduce boilerplate for stateful agents

- Large ecosystem of pre-built crew templates for common use cases

Cons

- Hierarchical process mode can produce unpredictable delegation chains without careful role definition

- Less suited to agents that require complex branching logic or loops; LangGraph is stronger there

- Debugging multi-agent runs requires LangSmith or custom logging setup

4. LangGraph, best for stateful graph-based agent workflows

LangGraph is the answer to a real engineering problem: most agent frameworks run agents as linear chains, but real workflows branch, loop, wait for human input, and recover from errors. LangGraph models your agent as a directed graph where each node is a function and edges are conditional transitions. This gives you precise control over execution flow without writing custom orchestration logic from scratch.

Checkpointing is the standout feature. LangGraph can pause an agent mid-run, persist its state to a database, and resume it later, including after the process restarts. This makes it the right foundation for long-running, human-in-the-loop workflows where an agent needs approval before taking an irreversible action. It pairs naturally with a RAG pipeline architecture when agents need to retrieve and synthesize documents as part of a larger workflow.
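To make the graph-plus-checkpoint idea concrete, here is a minimal sketch in plain Python. It does not use LangGraph, and its API differs from this: the node names, the `run` function, and the JSON checkpoint format are all assumptions chosen for illustration. Nodes are functions over a state dict, edges are routers that pick the next node, and the approval gate pauses the run by returning a serialized checkpoint.

```python
import json

END = "END"


def draft(state: dict) -> dict:
    return {**state, "draft": f"Refund {state['amount']} to {state['customer']}"}


def execute(state: dict) -> dict:
    return {**state, "result": "refund issued"}


def reject(state: dict) -> dict:
    return {**state, "result": "refund declined"}


NODES = {"draft": draft, "execute": execute, "reject": reject}

# Conditional edges: each router inspects the state and names the next node.
EDGES = {
    "draft": lambda s: "approval_gate",
    "approval_gate": lambda s: "execute" if s["approved"] else "reject",
    "execute": lambda s: END,
    "reject": lambda s: END,
}


def run(checkpoint: str) -> str:
    """Advance the graph from a JSON checkpoint and return a new checkpoint."""
    saved = json.loads(checkpoint)
    node, state = saved["next"], saved["state"]
    while node != END:
        if node == "approval_gate":
            if "approved" not in state:
                break  # pause here until a human records a decision
        else:
            state = NODES[node](state)
        node = EDGES[node](state)
    return json.dumps({"next": node, "state": state})


start = json.dumps({"next": "draft", "state": {"customer": "C-17", "amount": 40}})
paused = run(start)                     # stops at the approval gate
saved = json.loads(paused)              # imagine a round trip through a database
saved["state"]["approved"] = True       # the human approves
finished = json.loads(run(json.dumps(saved)))
```

Because the checkpoint is just serialized data, the second `run` call could happen in a different process hours later, which is the property that makes approval gates and crash recovery practical.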

Key features

- Graph-based execution model with conditional edges and loops

- Persistent state checkpointing with support for PostgreSQL, Redis, and SQLite backends

- Human-in-the-loop interrupts: pause the agent, get approval, then continue

- Streaming support for partial outputs and real-time progress

- First-class multi-agent subgraph composition

Best for

- Engineering teams building agents that need fine-grained control over branching and error recovery

- Use cases with compliance requirements where human approval must gate specific actions

- Teams already using LangChain who want to graduate from simple chains to stateful graphs

Pricing

- Free and open-source under MIT license

- LangSmith (optional observability platform): from $39/month per seat; free tier available

Pros

- Checkpointing solves the hardest problem in production agents: resuming after failure without starting over

- The graph model makes complex workflows visually inspectable and debuggable

- LangSmith integration provides production-grade tracing with minimal setup

Cons

- Steeper learning curve than CrewAI: you need to understand graph primitives before you can write your first agent

- Verbose API: simple agents require more boilerplate than in higher-level frameworks

- Primarily Python; JavaScript support is available but less mature

5. AutoGen, best for enterprise teams on Microsoft infrastructure

AutoGen is Microsoft Research's entry into the agentic framework space, and it shows in the architecture: the framework is designed around conversational multi-agent systems where agents communicate with each other using natural language. An orchestrator agent assigns tasks, specialist agents execute them, and a critic agent checks outputs before they pass downstream.

What makes AutoGen compelling for enterprise teams is the Azure integration depth. If you are already running Azure OpenAI Service, Azure Cognitive Search, or Azure Functions, AutoGen slots in with minimal friction. The framework also ships with an AutoGen Studio visual builder for creating and testing multi-agent conversations without writing Python, which lowers the barrier for non-engineers to prototype. Teams evaluating AI strategy consulting often look to AutoGen when their existing Microsoft ecosystem is a hard constraint.
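The orchestrator, specialist, and critic loop described above can be illustrated with a small plain-Python sketch. It is not AutoGen code: the functions `specialist`, `critic`, and `orchestrate` are hypothetical, and both agents are deterministic stubs rather than LLM-backed. The point is only the shape of the conversation, where the critic's feedback drives another round.

```python
from typing import Optional


def specialist(task: str, feedback: Optional[str]) -> str:
    """Stub specialist: revises its draft only when it receives feedback."""
    draft = f"answer to '{task}'"
    return draft + " (with sources)" if feedback else draft


def critic(draft: str) -> Optional[str]:
    """Stub critic: return feedback, or None when the draft passes."""
    return None if "sources" in draft else "Please cite sources."


def orchestrate(task: str, max_rounds: int = 3) -> list[dict]:
    """Run specialist and critic in turns, recording each message."""
    history = [{"sender": "orchestrator", "content": task}]
    feedback = None
    for _ in range(max_rounds):
        draft = specialist(task, feedback)
        history.append({"sender": "specialist", "content": draft})
        feedback = critic(draft)
        if feedback is None:
            history.append({"sender": "critic", "content": "APPROVED"})
            break
        history.append({"sender": "critic", "content": feedback})
    return history


history = orchestrate("compare two vendors")
```

The `max_rounds` cap matters in practice: without it, a specialist and critic that never agree would keep exchanging messages and consuming tokens.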

Key features

- Conversational multi-agent architecture with configurable agent roles

- AutoGen Studio: visual web interface for building and testing agent workflows

- Code execution sandbox for agents that write and run Python

- Native Azure OpenAI Service and Azure Functions integration

- AssistantAgent and UserProxyAgent abstractions for human-in-the-loop workflows

Best for

- Enterprises already standardized on Microsoft Azure with Azure OpenAI Service

- Research teams exploring multi-agent coordination paradigms

- Teams that need a visual agent builder without writing all configuration in code

Pricing

- Free and open-source under MIT license

- Compute costs billed through your Azure OpenAI or other LLM provider account

Pros

- Research-grade documentation with published benchmarks on task completion quality

- AutoGen Studio significantly reduces time to first working prototype for less technical users

- Code execution sandbox lets agents write and test code autonomously in a safe environment

Cons

- Tightly coupled to the conversational paradigm; workflows that are not naturally dialogue-shaped require workarounds

- Azure-first design means some integrations are awkward on non-Microsoft infrastructure

- Production deployment patterns are less documented than research use cases

6. Dify, best for self-hostable LLM application platforms

Dify sits at a different point in the spectrum than pure orchestration frameworks: it is a full LLM application platform that combines a visual workflow builder, a RAG pipeline, a model routing layer, and a REST API in a single deployable package. You can build a production chatbot with document retrieval, a multi-step automation workflow, or a text transformation API all within the same tool.

The self-hosted version runs on Docker Compose and supports every major model provider including OpenAI, Anthropic, Google, Mistral, and local Ollama models. For startups and mid-market companies that want the features of a cloud AI platform without the vendor lock-in or per-token premium, Dify is one of the most cost-effective paths to a working AI application. Its visual workflow editor makes it approachable for teams exploring custom n8n automation patterns who want to extend into AI-native workflows.

Key features

- Visual workflow builder with 40+ built-in nodes (LLM, retrieval, code, HTTP request, etc.)

- Built-in RAG pipeline with chunking, embedding, and retrieval configuration

- Multi-model routing: run different models for different tasks within a single workflow

- REST API and webhook output for every application

- Agent mode with tool-calling and iterative reasoning

Best for

- Startups building AI-powered SaaS features that need a full platform, not just an orchestration library

- Operations teams who want a visual builder rather than writing Python for every workflow

- Companies evaluating SaaS MVP development who need a rapid AI backend without building from scratch

Pricing

- Free and open-source under Apache 2.0; self-hosted with no usage fees

- Dify Cloud: free tier with 200 message credits/month; paid plans from $59/month

- Enterprise: custom pricing with SSO, private deployment support, and SLA

Pros

- Platform-in-a-box: most teams do not need additional tools to go from prototype to production

- RAG pipeline is configurable at the chunking and retrieval level without writing indexing code

- Plugin ecosystem growing rapidly, with 100+ connectors added in 2025

Cons

- Visual builder adds abstraction that makes debugging complex workflows slower than reading code

- Self-hosted setup requires Docker and a PostgreSQL database; not trivial for teams without DevOps

- Less suited to highly custom agent logic that does not fit the visual node model

7. RAGFlow, best for enterprise document-heavy knowledge bases

RAGFlow focuses on the hardest part of retrieval-augmented generation in practice: documents are messy. PDFs have inconsistent formatting, tables do not parse cleanly, and most RAG implementations destroy context when they chunk text naively. RAGFlow addresses this with deep-document parsing that understands layout, tables, figures, and structured data, producing chunks that actually preserve meaning rather than slicing mid-sentence.

Its agentic retrieval layer lets you build agents that do not just fetch the top-k chunks but reason about what they need to retrieve, issue multiple queries, and combine results across documents. For enterprise teams with large internal knowledge bases, compliance documents, or technical manuals, this distinction matters significantly. It complements teams building complex RAG pipeline architecture for legal, finance, or healthcare use cases where citation accuracy is non-negotiable.
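The difference between naive and structure-aware chunking is easy to see on a toy document. The sketch below is not RAGFlow's parser, which works from document layout. It assumes blocks (paragraphs, tables) are already separated by blank lines, and the function names are made up for illustration.

```python
def naive_chunks(text: str, size: int) -> list[str]:
    """Fixed-size chunking: cut every `size` characters, ignoring structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def block_chunks(text: str, max_chars: int) -> list[str]:
    """Pack whole blocks into chunks; a block is never split."""
    chunks, current = [], ""
    for block in text.split("\n\n"):
        candidate = f"{current}\n\n{block}" if current else block
        if current and len(candidate) > max_chars:
            chunks.append(current)   # close the chunk before it overflows
            current = block
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


TABLE = "| Plan | Price |\n| Pro | $39 |\n| Team | $99 |"
DOC = (
    "Pricing overview for the product.\n\n"
    + TABLE
    + "\n\nContact sales for enterprise terms."
)

naive = naive_chunks(DOC, 40)
structured = block_chunks(DOC, 60)
table_survives_naive = any(TABLE in chunk for chunk in naive)
table_survives_structured = TABLE in structured
```

With these inputs the fixed-size splitter cuts the table across two chunks, while the block-aware splitter keeps it intact as its own chunk, so a retriever can return the whole table in one hit.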

Key features

- Deep-document parsing: layout-aware chunking for PDFs, Word, Excel, and HTML

- Agentic retrieval with multi-query reasoning and cross-document synthesis

- Grounded citations: every answer links back to the exact source chunk

- Support for 12+ embedding models and vector stores (Elasticsearch, Milvus, Qdrant)

- Web UI for knowledge base management and chat testing

Best for

- Legal, finance, and compliance teams with large repositories of structured and semi-structured documents

- Enterprises where citation accuracy and source attribution are regulatory requirements

- Teams replacing keyword search on internal document stores with AI-native retrieval

Pricing

- Free and open-source under Apache 2.0; self-hosted with no usage fees

- Enterprise: custom pricing with dedicated support, SLA, and private deployment

Pros

- Layout-aware chunking solves the table and figure parsing problem that trips up most RAG implementations

- Grounded citations make answers verifiable and auditable without manual post-processing

- Actively maintained: 70,000+ stars and frequent releases with new model integrations

Cons

- Setup is more complex than simpler RAG tools: requires a running Elasticsearch or Milvus instance

- Focused narrowly on retrieval; not a general-purpose agent framework for multi-step workflows beyond document Q&A

- UI for knowledge base management is functional but not polished for non-technical end users

8. Langflow, best for no-code agent prototyping

Langflow brings a drag-and-drop visual interface to LangChain, letting you build agents, RAG pipelines, and multi-step chains by connecting nodes on a canvas. Every component in the LangChain ecosystem (prompts, models, retrievers, tools, memory stores) is available as a node. When you are satisfied with the visual design, Langflow exports it as a Python script or exposes it as a REST API endpoint.

The practical value is faster iteration: a product manager or ML engineer who is not deep in Python can prototype a complete agent in an afternoon, validate it with real inputs, and hand the exported code to a developer for productionization. For teams doing early-stage AI exploration before committing to custom workflow automation, Langflow reduces the feedback cycle from days to hours.

Key features

- Visual canvas builder with 50+ pre-built LangChain components

- Export to Python code or deploy as REST API directly from the UI

- Multi-agent support with agent-to-agent communication nodes

- Built-in playground for testing inputs and outputs within the canvas

- Hosted on DataStax Astra (managed cloud option) or self-hosted via Docker

Best for

- Product and ML teams prototyping AI features before investing in full engineering effort

- Technical users who want to test LangChain configurations visually before writing production code

- Teams evaluating AI workshops or upskilling programs where visual tooling lowers the learning curve

Pricing

- Free and open-source under MIT license; self-hosted with no usage fees

- DataStax Astra Cloud: pay-as-you-go from $0.15 per credit; free starter tier available

- Enterprise: custom pricing through DataStax

Pros

- Fastest path from idea to testable agent without writing code

- One-click export to Python means visual prototypes can graduate to production code

- 146,000+ GitHub stars reflect a large, active community with plentiful component contributions

Cons

- Visual abstractions hide important configuration details that matter in production (chunking strategy, retrieval parameters, error handling)

- Exported Python code is readable but often needs refactoring for production readiness

- Large, complex workflows become difficult to navigate on the canvas as node count grows

How to choose the best open-source AI agent framework

1) Start with your workflow shape, not the framework's star count

Different frameworks are optimized for different execution models. If your workflow is linear (input to agent to output), any framework works. If your workflow branches, loops, waits for external approval, or recovers from partial failures, you need a framework built for that.

- If your workflow is a multi-step sequence with defined roles: start with CrewAI for readable orchestration

- If your workflow branches based on intermediate results or needs human approval gates: use LangGraph for its checkpointing and conditional edge model

- If your workflow is primarily document retrieval with synthesis: RAGFlow is purpose-built for this

- If you need a visual builder with the ability to export to code: Langflow is the fastest path to a working prototype
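The four rules above can be written down as a small lookup, which is handy if you want to record the decision alongside your requirements. This is only a sketch: the function name and the trait flags are invented for illustration, and returning every matching framework (rather than a single winner) is a design choice, since real workflows often match more than one rule.

```python
def suggest_frameworks(
    *,
    role_based_sequence: bool = False,
    branching_or_approval: bool = False,
    document_retrieval: bool = False,
    visual_builder_with_export: bool = False,
) -> list[str]:
    """Map workflow traits to the frameworks recommended in the list above."""
    rules = [
        (role_based_sequence, "CrewAI"),
        (branching_or_approval, "LangGraph"),
        (document_retrieval, "RAGFlow"),
        (visual_builder_with_export, "Langflow"),
    ]
    return [name for applies, name in rules if applies]


# A workflow with approval gates that also synthesizes documents.
candidates = suggest_frameworks(branching_or_approval=True, document_retrieval=True)
```

An empty result means the workflow is probably linear, in which case any of the frameworks will do and other criteria should decide.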

2) Decide how much of the stack you want to own

Self-hosting gives you data control and eliminates per-token overhead on orchestration, but it shifts infrastructure management to your team. Cloud-managed options trade that control for faster setup and no ops burden.

- If data residency is a hard requirement (regulated industries, sensitive internal data): OpenClaw or Dify self-hosted are the strongest options

- If you want zero infrastructure management: Dify Cloud, LangGraph Cloud (via LangSmith), or Langflow on DataStax give you managed options with free tiers

- If you are already on Azure and want to minimize vendor count: AutoGen with Azure OpenAI Service reduces integration friction

3) Match the framework to your team's technical depth

No-code visual builders accelerate prototyping but introduce abstraction that complicates debugging in production. Code-first frameworks give engineers full control but require deeper Python familiarity.

- For teams with strong Python engineers who value control: LangGraph or CrewAI in code-first mode

- For teams mixing technical and non-technical contributors: Dify or Langflow for the visual layer, with engineers handling edge cases in code

- For research and enterprise Microsoft environments: AutoGen's documentation and Azure alignment reduce the learning curve for teams already in that ecosystem

4) Think about production requirements before you prototype

Many teams pick a framework based on how fast they can get a demo running, then discover it cannot handle their production requirements: multi-tenancy, audit logging, rate limiting, persistent memory across sessions, or compliance reporting. Evaluate these requirements upfront.

- For agents that must cite sources and produce auditable outputs: RAGFlow or LangGraph with persistent state

- For agents that need to run across thousands of concurrent users with cost controls: Dify's model routing and Langflow's API layer both support this, but architecture decisions matter

- For teams that need ongoing support, custom integrations, or want a production-ready agent built and maintained: working with an AI agent development partner can be faster than building the operations layer in-house

If you are evaluating these frameworks and need help architecting a production system, or want a custom agent built around your specific workflows and data, AY Automate's custom workflow automation team specializes in agentic system design and deployment. Book a free discovery call to map out the right stack for your use case.

FAQ

What is an open-source AI agent framework? An open-source AI agent framework is a library or platform that provides the building blocks for creating autonomous AI systems: tool-calling, memory, multi-step reasoning, and orchestration. "Open-source" means the source code is publicly available, you can self-host it, and you are not tied to a proprietary vendor's API pricing. Frameworks like CrewAI, LangGraph, and AutoGen give you these primitives so you can build agents without implementing orchestration logic from scratch.

What is the difference between CrewAI and LangGraph? CrewAI uses a role-based metaphor where agents have assigned roles, goals, and tools, and the framework handles coordination between them. It is easier to get started with and reads naturally for non-engineers. LangGraph models your agent as a directed graph with conditional transitions, loops, and checkpointed state. It gives you more control over execution flow and is better suited to agents with complex branching logic or those that need to pause and resume across sessions. Start with CrewAI for straightforward multi-agent tasks and use LangGraph when your workflow has branches, error recovery paths, or human-in-the-loop gates.

Can I use these frameworks with any LLM, not just OpenAI? Yes. All eight frameworks in this list support multiple model providers. OpenClaw, Dify, and Langflow work with local models via Ollama (Llama, Mistral, Gemma). CrewAI and LangGraph support any OpenAI-compatible API. AutoGen is Azure-native but can be configured for other providers. RAGFlow supports 12+ embedding models for its retrieval layer. None of them require you to use OpenAI.

Is there a free open-source AI agent framework I can use in production? All eight frameworks listed here are free and open-source. Self-hosting them eliminates usage fees entirely. You pay only for the LLM API calls your agents make (or nothing if you run local models). The trade-off is infrastructure management: you need a server, Docker, and in some cases a running database (PostgreSQL, Redis, or a vector store) to operate them at scale. Dify Cloud, LangSmith, and DataStax Astra offer managed cloud versions with free tiers if you want to avoid self-hosting.

Which framework is best for building a customer-facing AI chatbot? Dify is the fastest path to a production customer-facing chatbot: it includes a chat widget you can embed, a RAG pipeline for connecting your knowledge base, and a REST API for custom frontends. OpenClaw is strong if you need the bot connected to messaging apps like WhatsApp or Slack out of the box. For highly customized chatbots with complex multi-step conversation flows, LangGraph's stateful execution model gives you the control you need to handle edge cases reliably.

What is the difference between RAGFlow and a standard RAG implementation? Standard RAG implementations chunk documents by fixed token count, embed those chunks, and retrieve the top-k by similarity. This works for clean, well-formatted text but fails on real enterprise documents with tables, figures, multi-column layouts, and inconsistent formatting. RAGFlow uses layout-aware parsing that understands document structure before chunking, preserving the semantic meaning of tables and figures. Its agentic retrieval layer also issues multiple queries and synthesizes across documents rather than just returning the top-k from a single query. The result is significantly higher answer quality on complex document corpora.
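The retrieval half of that comparison can be sketched as follows. This is a toy, not RAGFlow's implementation: `embed` is a word-count stand-in for a real embedding model, the queries passed to `multi_query` are hard-coded rather than generated by an agent, and all function names are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: list[str], k: int) -> list[str]:
    """Standard RAG retrieval: the k chunks most similar to one query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


def multi_query(queries: list[str], chunks: list[str], k: int) -> list[str]:
    """Union of top-k results across several queries, in first-seen order."""
    merged: list[str] = []
    for query in queries:
        for chunk in top_k(query, chunks, k):
            if chunk not in merged:
                merged.append(chunk)
    return merged


CHUNKS = [
    "refund policy allows returns within 30 days",
    "shipping takes five business days",
    "warranty covers defects for one year",
]

single = top_k("refund policy", CHUNKS, 1)
combined = multi_query(["refund policy", "warranty covers"], CHUNKS, 1)
```

A single query surfaces only the refund chunk, while the two-query pass also pulls in the warranty chunk, which is the kind of cross-document coverage a question like "what are my options for a faulty product?" needs.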

Should I build my own AI agent system or use an existing framework? For most teams, starting with an existing open-source framework is the right move. Building a production-grade agent orchestration layer from scratch (tool-calling, memory, error recovery, checkpointing, observability) takes months of engineering. Frameworks like CrewAI or LangGraph give you those primitives in days. The case for custom-built systems is narrow: you have highly specialized orchestration requirements that existing frameworks cannot accommodate, or you need performance and cost characteristics that require low-level control. An AI strategy consulting engagement can help you make that call before committing engineering resources.

Which AI agent framework is best for enterprise-scale workloads? AutoGen (with Azure OpenAI Service) and Dify Enterprise are the strongest choices for enterprise-scale deployments with SSO, audit logging, and SLA requirements. LangGraph with LangSmith provides enterprise-grade observability and tracing for teams that need production monitoring on complex agent workflows. For heavily regulated industries where all data must stay on-premise, OpenClaw and RAGFlow self-hosted are the most defensible options. If you need help designing and deploying an automation maintenance support layer around whichever framework you choose, an experienced team can reduce time to production significantly.

Is OpenClaw production-ready? OpenClaw is open-source and self-hostable, and many teams are running it in internal production environments. Its integration library for messaging apps and productivity tools is well-maintained. However, the managed cloud offering is still in development, and some community-contributed connectors have inconsistent quality. For mission-critical deployments, plan to audit the specific integrations you rely on and allocate engineering time for maintenance as the project evolves. The foundation governance structure (post-creator joining OpenAI) is a positive signal for long-term stability.

Can these frameworks be combined with n8n or Make for workflow automation? Yes. LangGraph, CrewAI, and Dify all expose REST API endpoints that n8n and Make can call as workflow steps. A common pattern is to use n8n for trigger-based event handling and routing, then call an AI agent endpoint for the intelligence layer, and return results to n8n for downstream actions. This hybrid approach lets teams use the best tool for each part of the workflow without rewriting existing automation infrastructure. For teams building this kind of hybrid stack, custom n8n automation specialists can design the integration architecture.

Taha builds and ships custom AI agents and workflow automations for AY Automate clients across SaaS, finance, and professional services.