On March 31, 2026, at 4:23 am ET, a security researcher named Chaofan Shou posted a message on X that would reverberate through the entire developer community: Anthropic had accidentally shipped the full source code of Claude Code inside a public npm package.

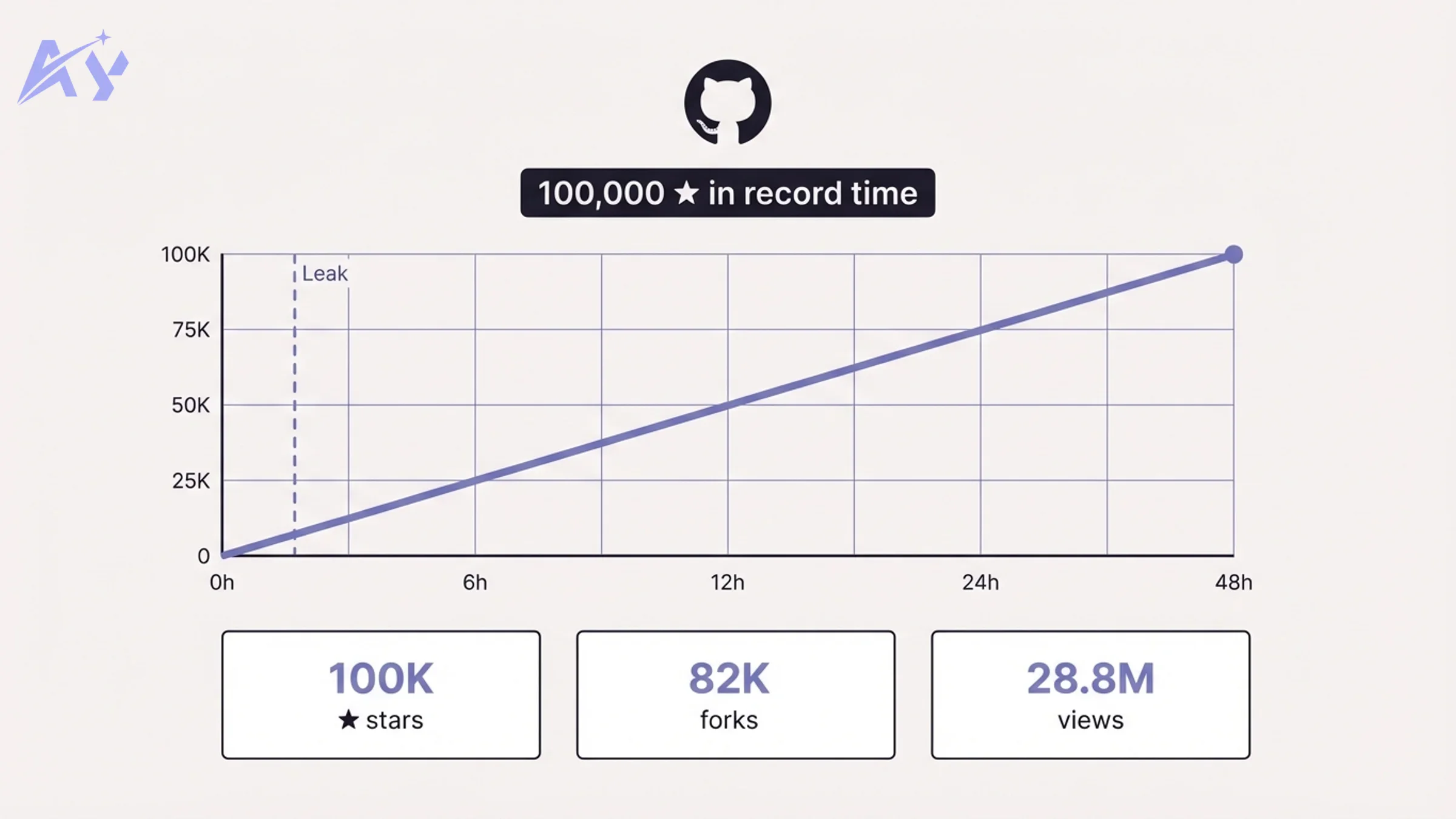

The cause was a single missing line in a config file. The fallout was anything but simple. Within hours the code had been mirrored, dissected, forked, rewritten in Python and Rust, and studied by tens of thousands of engineers. The X post alone surpassed 28.8 million views. The GitHub mirror became the fastest-growing repository in the platform's history. And buried inside 512,000 lines of TypeScript were features, codenames, and architectural decisions that Anthropic had never publicly disclosed.

This is the full story: what happened, why it happened, what was inside, and what it means for every team building with AI.

The Incident: How 512,000 Lines Escaped

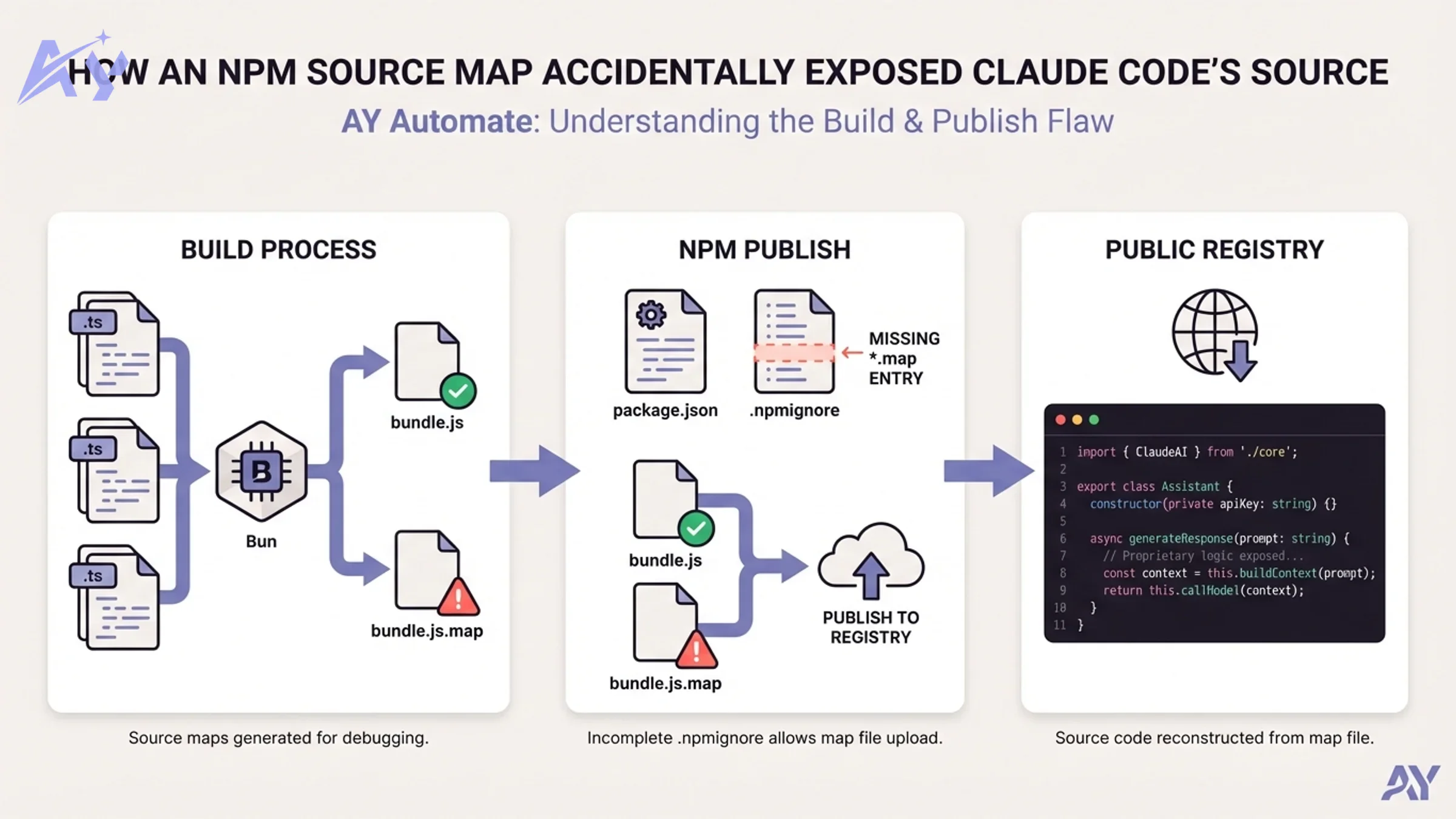

The root cause is mundane enough to be painful: a missing .npmignore entry.

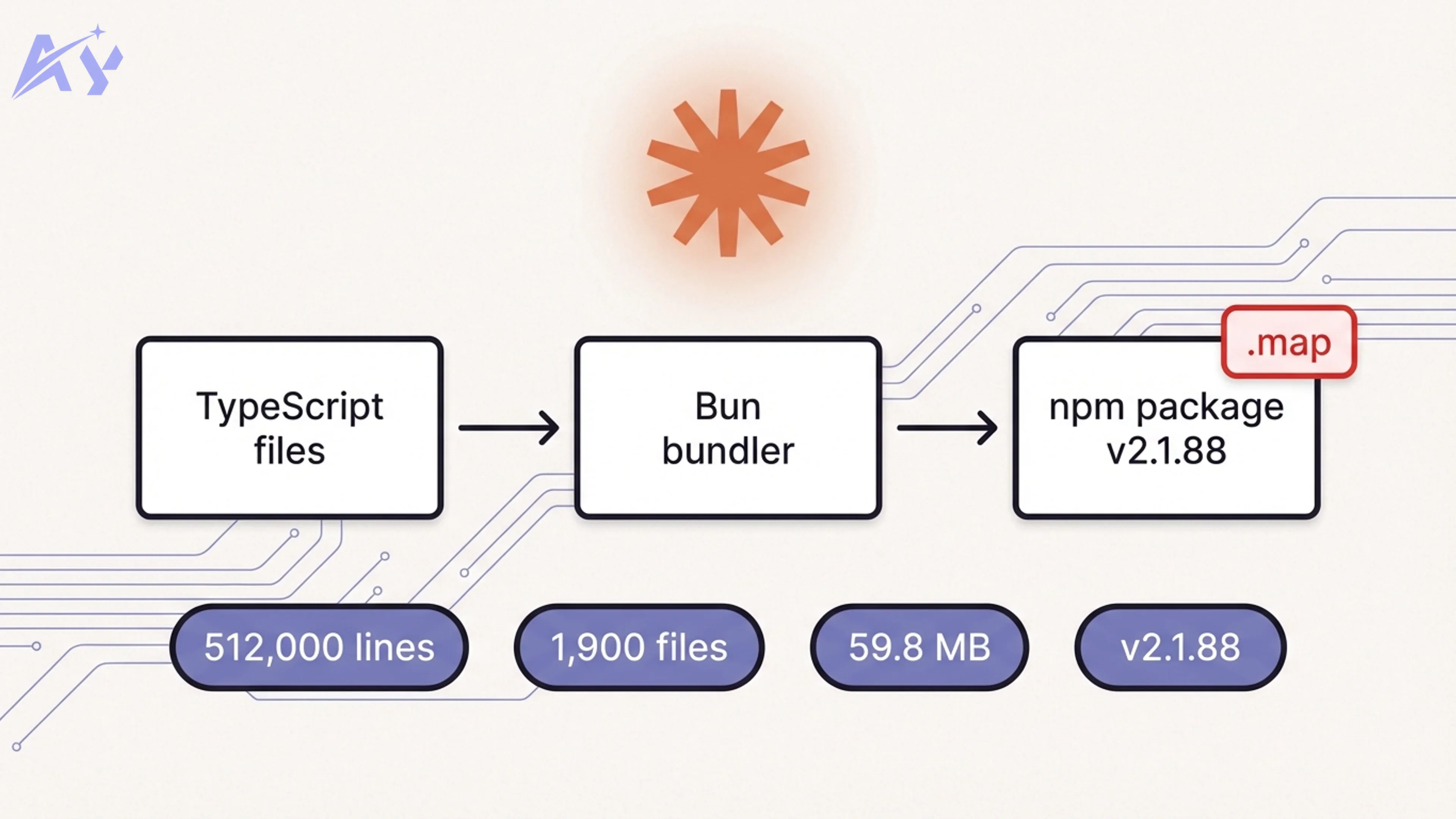

Claude Code is built with Bun, the JavaScript runtime that Anthropic acquired in late 2025. Bun generates source map files by default during the build process. Source maps are .map files that map minified, compiled output back to the original source code. They exist purely for debugging and should never ship to production.

When the team published version 2.1.88 of @anthropic-ai/claude-code to the npm registry, no one had added *.map to the project's .npmignore, and the files field in package.json had not been configured to allowlist only production artifacts. The 59.8 MB source map slipped through.

Claude Code's head Boris Cherny later confirmed the specifics: "There was a manual deploy step that should have been better automated." Anthropic's official statement: "No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

| Fact | Detail |
|---|---|
| Package | @anthropic-ai/claude-code v2.1.88 |
| Leaked file | 59.8 MB JavaScript source map |
| Lines exposed | 512,000+ TypeScript |
| Files | ~1,900 files |
| Root cause | Missing *.map in .npmignore, no files allowlist in package.json |
| Bundler | Bun (source maps on by default) |
| Discovered by | Chaofan Shou (Solayer Labs intern), March 31, 2026, 4:23 am ET |
| Anthropic response | Package yanked; confirmed human error, no credentials exposed |

The fix, in hindsight, is one line: *.map in .npmignore. Any team shipping npm packages should audit their .npmignore and package.json files field before every release. This is not a novel vulnerability; it is a routine gap that most teams never check.
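One way to automate that audit is to inspect the file list reported by `npm pack --dry-run --json` before publishing. The following is a minimal sketch, assuming the paths from that output are passed in as strings. The function name `findForbiddenArtifacts` and the pattern list are illustrative assumptions, not part of any npm API.

```typescript
// Hypothetical pre-publish check: flag files that should never ship in a package.
// The patterns are assumptions; extend them to match your own build output.
const FORBIDDEN_PATTERNS: RegExp[] = [
  /\.map$/i,         // source maps such as cli.js.map
  /\.tsbuildinfo$/i, // TypeScript incremental build cache
];

function findForbiddenArtifacts(packedPaths: string[]): string[] {
  return packedPaths.filter((path) =>
    FORBIDDEN_PATTERNS.some((pattern) => pattern.test(path))
  );
}

// Sample paths, shaped like the `files[].path` entries in the
// `npm pack --dry-run --json` output (not real package contents).
const offenders = findForbiddenArtifacts(["package.json", "cli.js", "cli.js.map"]);
if (offenders.length > 0) {
  console.error(`Refusing to publish, found: ${offenders.join(", ")}`);
}
```

Running a check like this in CI, and failing the job when the returned list is non-empty, turns the manual audit into a gate that does not depend on anyone remembering it.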

Bad Timing: The Second Leak in a Week

The Claude Code source map incident was actually Anthropic's second accidental disclosure in days.

Just before the npm package leak, Fortune reported that Anthropic had inadvertently made close to 3,000 internal files publicly accessible, including a draft blog post detailing a powerful upcoming model. That draft described the model — internally codenamed Mythos and also referred to as Capybara — and called it a system that "presents unprecedented cybersecurity risks." The document was never meant to go public.

Two major accidental disclosures in less than a week from a company that markets itself as the safety-first AI lab prompted sharp commentary across the developer community, and within days drew attention from US Congress: Representative Josh Gottheimer formally pressed Anthropic on the leaks and the company's operational security protocols.

Bloomberg's reporting characterized Anthropic's internal response as scrambling to address the fallout. The company's credibility on process discipline, not just model safety, was under scrutiny.

The GitHub Mirror: Fastest-Growing Repo in History

Within hours of Shou's X post, developers began archiving the source. The most significant mirror, instructkr/claw-code by Sigrid Jin, avoided direct replication of the leaked TypeScript and instead rebuilt the Claude Code runtime from scratch as an independently authored clean-room rewrite, first in Python and then in Rust. This approach reduced legal risk while preserving the architectural insights.

The repository crossed 100,000 GitHub stars, reaching that threshold faster than any repository in GitHub's history and beating the previous record by a significant margin. A second project, Kuberwastaken/claurst, also gained traction with a focused Rust port and a detailed technical breakdown of the leaked code's internals.

The wider developer response highlighted something important: there is enormous pent-up demand for an open, auditable AI coding agent. The mirror did not grow because developers wanted stolen property. It grew because the community has wanted visibility into how these tools actually work at production scale, and this was the first time they got it.

If your team is evaluating open-source AI coding agents as an alternative or complement to Claude Code, our AI agent development practice helps design and deploy production-grade coding agents tailored to your stack and security requirements.

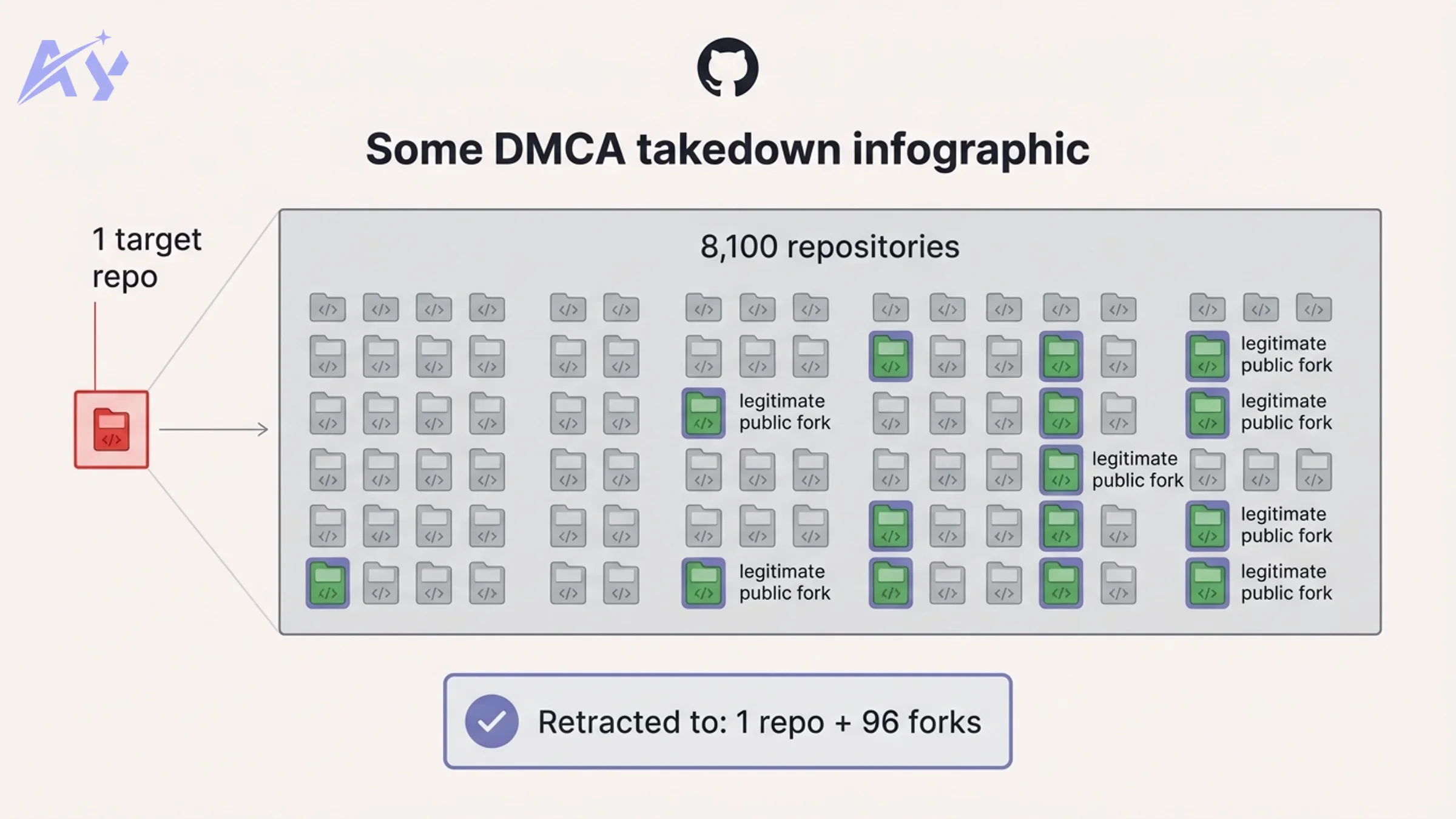

The DMCA Overreach: 8,100 Repos Taken Down by Mistake

Anthropic's attempt to contain the leak made headlines for the wrong reasons.

The company issued a DMCA takedown notice to GitHub targeting repositories containing the leaked source code. According to GitHub's public DMCA records, the notice was executed against approximately 8,100 repositories — a dramatically overinclusive action. The problem: the takedown notice targeted a fork network connected to Anthropic's own public Claude Code repository, which meant it swept up legitimate forks containing only skills, examples, and documentation that had nothing to do with the leaked source map.

Developers started posting GitHub notification emails on social media showing their public-repo forks being taken down. Boris Cherny confirmed the overreach was "accidental" and retracted the bulk of the notices, limiting the final takedown to one repository and 96 directly related forks. The episode added a second wave of community frustration on top of the original leak.

The DMCA incident underlines a practical reality: once a source map is cached across npm CDNs and archived by developers worldwide, a targeted takedown is extremely difficult to execute cleanly. The original leaked archive remained accessible through multiple channels throughout.

The Architecture Revelation: The Moat Is in the Harness

Perhaps the most significant insight from the leak had nothing to do with any specific feature. It was architectural.

The community assumed Claude Code's value came primarily from the underlying Claude model. The leaked source told a different story. Five hundred thousand lines of TypeScript revealed a sophisticated harness — a software layer that sits around the LLM and governs everything: how it uses tools, how it maintains context, how it navigates multi-step workflows, and how its behavior is constrained and shaped at runtime.

As one widely shared analysis put it: "The moat in AI coding tools is not the model. It is the harness."

The harness handles:

- Tool orchestration: Structured definitions for dozens of tool integrations, with schemas, retry logic, and error handling built in

- Context management: Sophisticated compression and memory strategies for maintaining useful context across long sessions

- Behavioral guardrails: Programmatic constraints that operate independently of the model's own alignment training

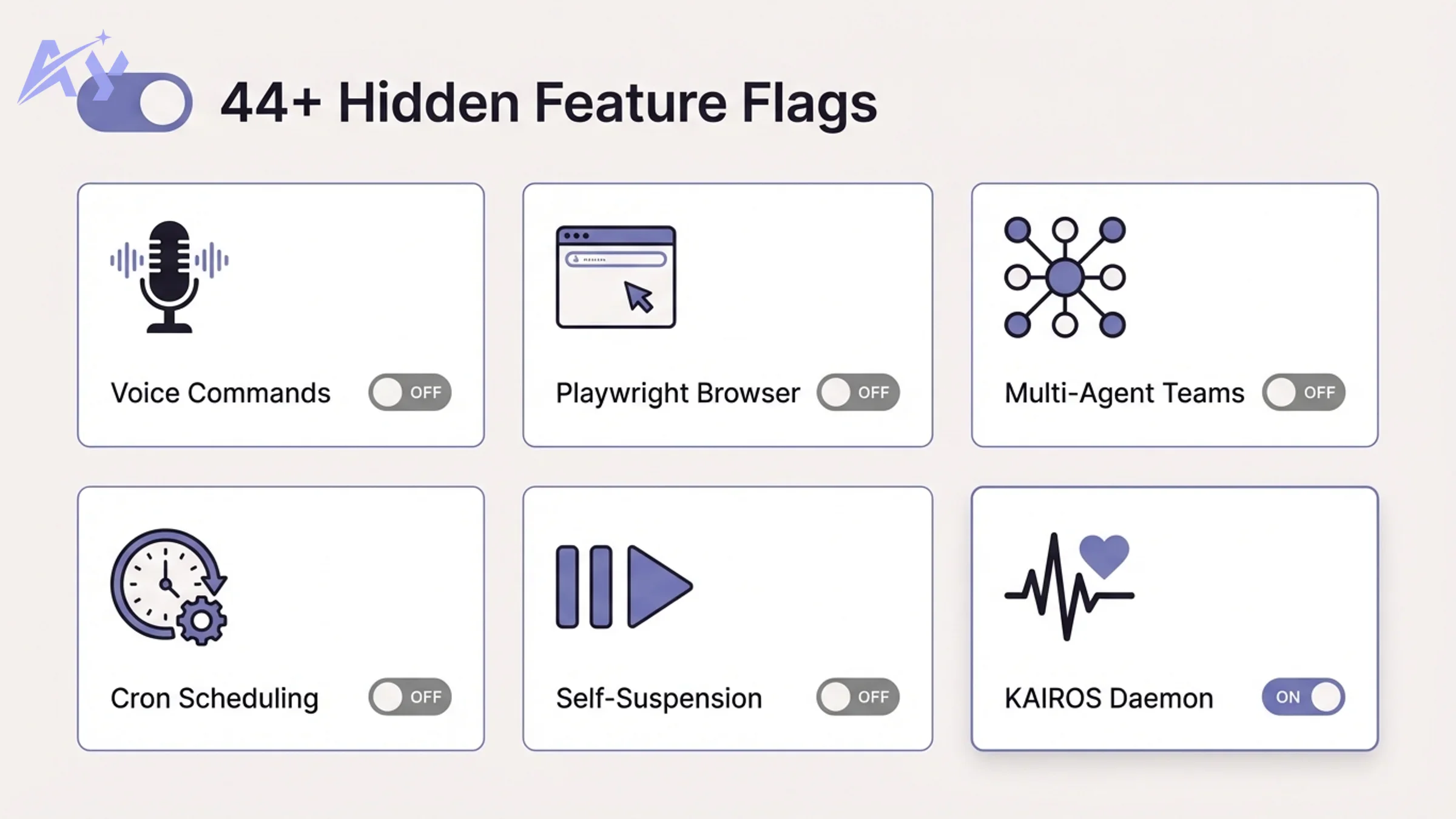

- Feature flag infrastructure: A mature gating system for 44+ unreleased capabilities — the kind of system you build when you have a long product roadmap and a safety review process
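To make the tool orchestration point concrete, here is a minimal sketch of a tool definition with input validation, retries, and structured error results. Every name in it (`ToolDefinition`, `callTool`, `maxAttempts`) is hypothetical and chosen for illustration; it does not reproduce the leaked code, and the `validate` function is only a stand-in for a real schema check.

```typescript
// Illustrative harness fragment: validated tool calls with bounded retries.
interface ToolDefinition<I, O> {
  name: string;
  validate: (input: unknown) => input is I; // stand-in for a schema check
  run: (input: I) => O;
  maxAttempts: number;
}

type ToolResult<O> =
  | { ok: true; value: O; attempts: number }
  | { ok: false; error: string; attempts: number };

function callTool<I, O>(tool: ToolDefinition<I, O>, input: unknown): ToolResult<O> {
  // Reject malformed input before the tool ever runs.
  if (!tool.validate(input)) {
    return { ok: false, error: `invalid input for ${tool.name}`, attempts: 0 };
  }
  let lastError = "unknown error";
  for (let attempt = 1; attempt <= tool.maxAttempts; attempt++) {
    try {
      return { ok: true, value: tool.run(input), attempts: attempt };
    } catch (err) {
      // Remember the failure and try again until the budget is spent.
      lastError = err instanceof Error ? err.message : String(err);
    }
  }
  return { ok: false, error: lastError, attempts: tool.maxAttempts };
}

// Usage: a tool that fails once, then succeeds on the second attempt.
let calls = 0;
const flakyEcho: ToolDefinition<string, string> = {
  name: "echo",
  validate: (input: unknown): input is string => typeof input === "string",
  run: (input) => {
    calls += 1;
    if (calls < 2) throw new Error("transient failure");
    return input.toUpperCase();
  },
  maxAttempts: 3,
};
const echoResult = callTool(flakyEcho, "hello");
```

Returning a result object instead of throwing keeps failures visible to the calling loop, which can then decide whether to surface the error to the model or to the user.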

This matters for every team building AI agents. The raw LLM capability is only one input. The architecture around it — how you structure prompts, manage state, handle errors, and control behavior — determines whether you get a useful product or an expensive toy. Our work in custom workflow automation and RAG pipeline architecture is grounded in exactly this principle: the harness architecture is the product.

For teams building their first AI agent, our AI strategy consulting practice helps design the right harness architecture before writing a single line of code.

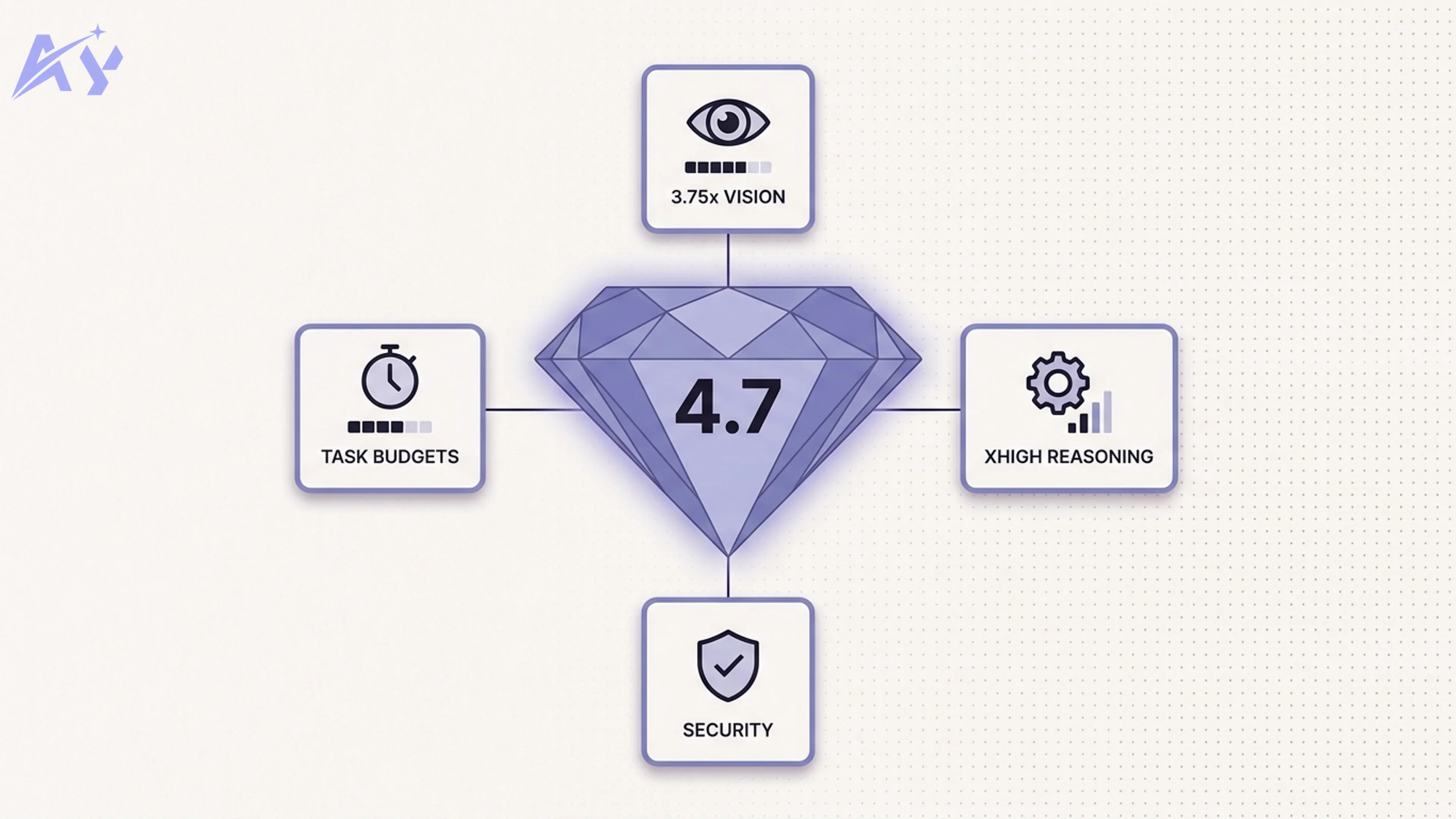

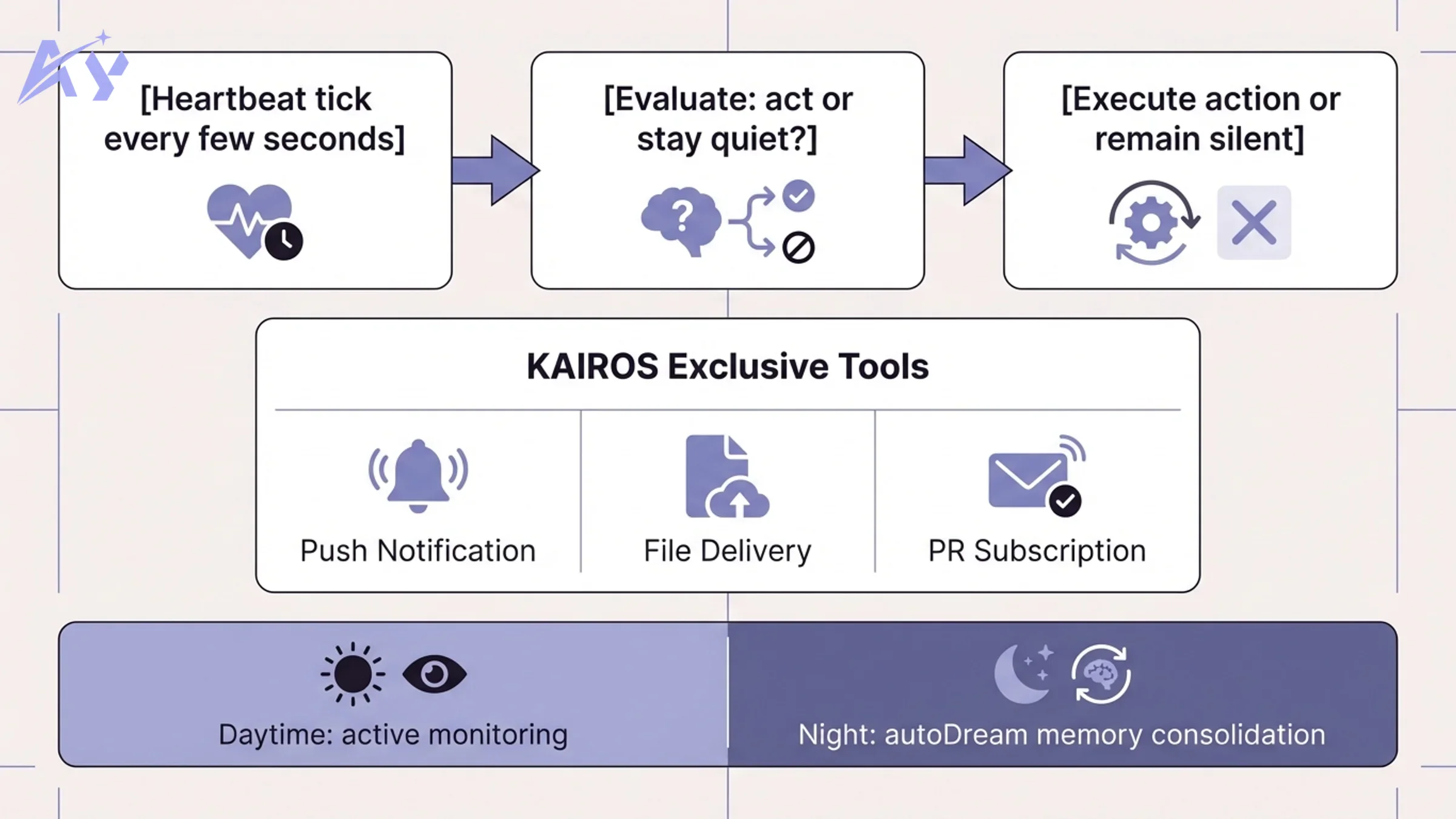

KAIROS: The Autonomous Agent Mode Nobody Knew About

The most discussed discovery in the entire leak was KAIROS — an unreleased autonomous daemon mode hidden behind two feature flags named PROACTIVE and KAIROS, referenced over 150 times throughout the codebase.

KAIROS is named after the Ancient Greek concept of "the right moment" — and the implementation reflects that framing precisely. Rather than waiting for a user to type a prompt, KAIROS operates as a persistent background process:

- Every few seconds it receives a heartbeat tick prompt: "anything worth doing right now?"

- It evaluates the current state of your codebase, open issues, recent errors, and incoming GitHub webhooks

- It decides autonomously: act, or stay quiet

- A 15-second blocking budget ensures proactive actions never disrupt the developer's active flow for longer than a brief pause

- When it acts, it has access to all standard Claude Code tools: fix bugs, update files, send responses, run tasks, make commits
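The act-or-stay-quiet decision described in the list above can be sketched as a pure function over candidate actions. This is a simplified illustration under stated assumptions: the `Candidate` shape, the priority threshold, and the function name `decideOnTick` are all hypothetical, and only the 15-second budget comes from the description above.

```typescript
// Hypothetical tick handler; none of these names come from the leaked code.
interface Candidate {
  description: string;
  priority: number;         // higher means more worth doing
  estimatedBlockMs: number; // how long the action would block the developer
}

type TickDecision =
  | { kind: "act"; candidate: Candidate }
  | { kind: "quiet" };

const BLOCKING_BUDGET_MS = 15000; // the 15-second budget described above

function decideOnTick(candidates: Candidate[], minPriority: number): TickDecision {
  // Keep only actions that matter enough and fit inside the blocking budget.
  const eligible = candidates
    .filter((c) => c.priority >= minPriority && c.estimatedBlockMs <= BLOCKING_BUDGET_MS)
    .sort((a, b) => b.priority - a.priority);
  const best = eligible[0];
  return best ? { kind: "act", candidate: best } : { kind: "quiet" };
}
```

Making "quiet" an explicit result, rather than the absence of one, keeps the common case (nothing worth doing) easy to log and test.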

At night, KAIROS runs a process the code calls autoDream: a memory consolidation cycle where the agent merges disparate observations from the day, removes logical contradictions between stored facts, and converts vague insights into concrete, queryable knowledge. This is autonomous memory management running outside any user session.
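As a rough illustration of what removing contradictions between stored facts could look like, the sketch below keeps only the most recent claim per subject. The `Observation` shape and the "newest claim wins" rule are assumptions made for this example; the source does not describe how autoDream actually resolves conflicts.

```typescript
// Hypothetical model of memory consolidation, not the autoDream implementation.
interface Observation {
  subject: string;    // what the fact is about, e.g. "test runner"
  claim: string;      // the stored fact
  observedAt: number; // timestamp in milliseconds
}

// Resolve contradictions by keeping only the most recent claim per subject.
function consolidate(observations: Observation[]): Observation[] {
  const latest = new Map<string, Observation>();
  for (const obs of observations) {
    const existing = latest.get(obs.subject);
    if (!existing || obs.observedAt > existing.observedAt) {
      latest.set(obs.subject, obs);
    }
  }
  return Array.from(latest.values());
}
```

A production system would likely need something richer than a timestamp comparison, such as confidence scores or provenance, but the shape of the problem is the same: many observations in, one consistent set of facts out.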

KAIROS Exclusive Tools

KAIROS has three tools that regular Claude Code does not:

| Tool | What it does |
|---|---|
| Push notifications | Reaches you on phone or desktop even when the terminal is closed |
| File delivery | Sends you files it created without being asked |
| PR subscriptions | Monitors GitHub repositories and reacts to incoming pull requests |

The feature is fully implemented. The 150+ code references are not prototype scaffolding. Anthropic has simply not flipped the switch — presumably pending product, legal, and safety review of an agent that takes unilateral action on your codebase 24/7.

This is where AI coding tools are heading. Teams that want to understand what autonomous agent architecture looks like in production, and how to build something equivalent for their own stack, can explore our AI agent development service for a guided implementation path.

44 Hidden Feature Flags and 20+ Unshipped Features

KAIROS was the headline, but researchers cataloged an extensive roadmap hidden behind feature flags throughout the codebase.

Coordinator Mode — Multi-Agent Orchestration:

The real multi-agent feature is called Coordinator Mode (CLAUDE_CODE_COORDINATOR_MODE=1). One Claude instance acts as a manager, breaking tasks into pieces and routing them to parallel worker agents via a mailbox system. Each worker handles its own subtask independently; the coordinator reconciles results. This is less like multi-threading and more like a small team of agents working in parallel with shared coordination.
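The coordinator and mailbox pattern can be sketched in a few lines. This is an in-memory illustration only: the `Mailbox` class, the `worker-N` naming, and `runCoordinator` are hypothetical, and the workers run one after another here, whereas the feature as described runs them in parallel.

```typescript
// Hypothetical coordinator/worker sketch with an in-memory mailbox.
interface Message {
  to: string;
  from: string;
  body: string;
}

class Mailbox {
  private queues = new Map<string, Message[]>();

  send(msg: Message): void {
    const queue = this.queues.get(msg.to) ?? [];
    queue.push(msg);
    this.queues.set(msg.to, queue);
  }

  // Drain and return everything addressed to one recipient.
  receiveAll(recipient: string): Message[] {
    const queue = this.queues.get(recipient) ?? [];
    this.queues.delete(recipient);
    return queue;
  }
}

function runCoordinator(subtasks: string[], work: (subtask: string) => string): string[] {
  const mailbox = new Mailbox();
  // 1. The coordinator routes one subtask to each worker.
  subtasks.forEach((subtask, i) =>
    mailbox.send({ to: `worker-${i}`, from: "coordinator", body: subtask })
  );
  // 2. Each worker drains its own inbox and replies to the coordinator.
  subtasks.forEach((_, i) => {
    const id = `worker-${i}`;
    for (const msg of mailbox.receiveAll(id)) {
      mailbox.send({ to: "coordinator", from: id, body: work(msg.body) });
    }
  });
  // 3. The coordinator collects the replies in arrival order.
  return mailbox.receiveAll("coordinator").map((msg) => msg.body);
}
```

Because workers only communicate through the mailbox, swapping the sequential loop for real concurrency would not change the coordinator's logic.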

ULTRAPLAN — Remote 30-Minute Planning:

One of the most significant unreleased features. For complex tasks, Claude spawns a separate cloud instance running Opus 4.6 and gives it up to 30 minutes to explore and plan. The user can approve the result from their phone or browser. A sentinel value (__ULTRAPLAN_TELEPORT_LOCAL__) brings the approved plan back to the local terminal to execute. Triggered by the /ultraplan hidden slash command.

BUDDY (terminal pet companion): BUDDY is not a multi-agent system. It is a virtual pet that appears next to each user's prompt, generated deterministically from their account ID. The source encodes 18 species (including capybara), and each pet tracks stats like DEBUGGING, PATIENCE, and CHAOS. Code comments planned an April 1-7 teaser, with a full launch targeted for May 2026.
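Deterministic generation from an account ID usually means hashing the ID and mapping the hash onto a fixed set of options. The sketch below uses a 32-bit FNV-1a hash over the string's UTF-16 code units as a stand-in. The choice of hash, the stat range of 0 to 100, and every species name other than capybara are assumptions for illustration, not details from the source.

```typescript
// Hypothetical sketch: derive a stable pet from an account ID.
// Only "capybara" is named in the article; the other species are placeholders.
const SPECIES: string[] = ["capybara", "placeholder-owl", "placeholder-fox"];

function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // reinterpret as an unsigned 32-bit integer
}

interface Pet {
  species: string;
  debugging: number;
  patience: number;
  chaos: number;
}

function petFor(accountId: string): Pet {
  // Salt the hash per stat so the three values are not identical.
  const stat = (salt: string): number => fnv1a(`${accountId}:${salt}`) % 101; // 0..100
  return {
    species: SPECIES[fnv1a(accountId) % SPECIES.length],
    debugging: stat("DEBUGGING"),
    patience: stat("PATIENCE"),
    chaos: stat("CHAOS"),
  };
}
```

The same account ID always yields the same pet, with no per-user state to store.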

Voice command mode: A complete voice interface is implemented in the codebase but not yet publicly available. It integrates with the system microphone and translates voice input into Claude Code commands in real time.

Browser automation via Playwright: Native Playwright integration enables browser control tasks directly from Claude Code — not just terminal and file operations.

Daemon Mode:

Background session management: run Claude sessions in the background with claude --bg <prompt> and manage them with daemon ps, logs, attach, and kill commands — similar to how you manage Docker containers.

| Feature | Status | Notes |
|---|---|---|
| KAIROS | Hidden (flags: PROACTIVE, KAIROS) | Always-on daemon, 150+ references |
| Coordinator Mode | Hidden (env: CLAUDE_CODE_COORDINATOR_MODE=1) | Multi-agent orchestration via mailbox |
| ULTRAPLAN | Hidden (slash: /ultraplan) | 30-min Opus cloud planning session |
| BUDDY | Hidden (teaser Apr 1-7) | Terminal pet, 18 species, May launch |
| Daemon Mode | Hidden (flags: DAEMON, BG_SESSIONS) | Background session management |
| Voice commands | Hidden (flag: VOICE_MODE) | Complete voice interface built |
| Playwright browser | Hidden (flag: WEB_BROWSER) | Native browser control |
| autoDream memory | Part of KAIROS | Nightly memory consolidation |

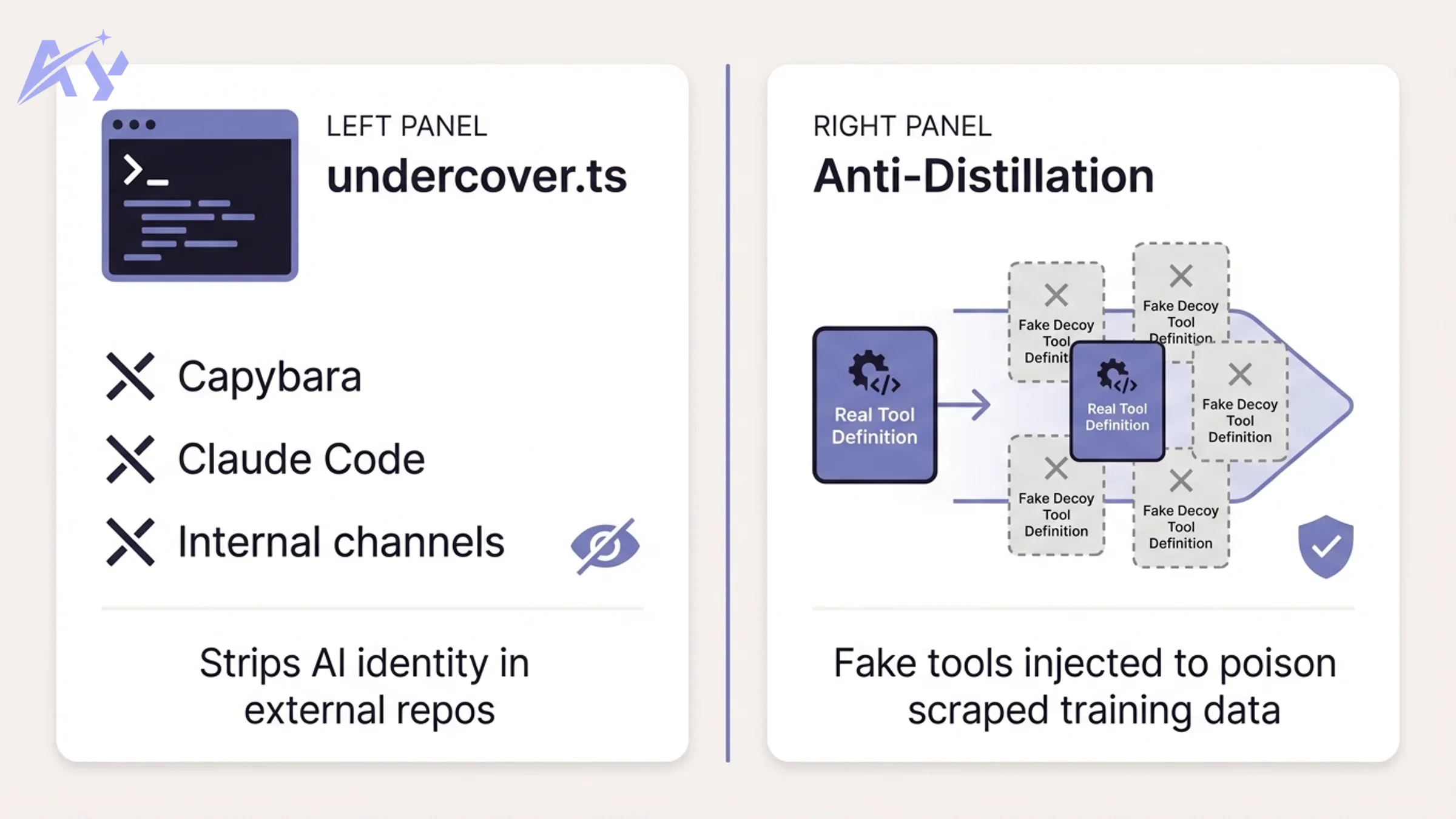

undercover.ts and Anti-Distillation: Two Controversial Discoveries

Two specific files generated particularly sharp community debate.

undercover.ts

undercover.ts is approximately 90 lines of code implementing a mode that strips all Anthropic-identifying information when Claude Code is used in non-internal repositories. When activated, the model is instructed never to mention:

- Internal codenames like "Capybara," "Tengu," or "Numbat"

- Internal Slack channels or repository names

- The phrase "Claude Code" itself

The practical implication: commits, pull requests, and code reviews authored by Anthropic employees using Claude Code in external open-source projects will contain no indication that an AI wrote them or that Anthropic's internal tooling was involved. The community debated whether this constitutes reasonable product testing in partner environments or a transparency concern — particularly in contexts where AI-generated contributions to open-source projects are a live policy discussion.

ANTI_DISTILLATION_CC: Fake Tool Injection

In claude.ts, a flag called ANTI_DISTILLATION_CC was found. When enabled, Claude Code sends anti_distillation: ['fake_tools'] in its API requests to Anthropic's servers. This instructs the backend to silently inject decoy tool definitions into the system prompt — fake tools that look real but do nothing.

The purpose: if a competitor or researcher is intercepting Claude Code's API traffic to train a rival model on Claude Code's tool use patterns, the fake tools pollute that training dataset. As one Hacker News commenter noted, "An LLM company using regexes and fake tool injection for competitive defense is either peak irony or peak pragmatism, depending on your perspective."

Frustration Regexes: Claude Is Watching Your Swear Words

A file called userPromptKeywords.ts contained a regex pattern that detects user frustration in real time, matching expressions like "wtf," "wth," "ffs," "omfg," and a range of profanities.

The existence of the frustration detector generated significant discussion, partly because of the irony: an LLM company using regex pattern matching for sentiment analysis. The pragmatic justification is obvious — a regex is orders of magnitude faster and cheaper than an LLM inference call, and you only need blunt frustration detection, not nuanced sentiment analysis.

What the code does with these signals is not disclosed. The most likely use is telemetry: a spike in detected frustration expressions across a user cohort is a low-cost early warning system for a broken feature or a model regression. If Anthropic ships a new Claude version and frustration-keyword rates jump, that is a signal worth investigating immediately.
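A cheap detector of this kind, plus the cohort-level rate that would feed telemetry, fits in a few lines. The pattern below uses only the mild keywords quoted above and is an illustration; it does not reproduce the actual regex in userPromptKeywords.ts, and the function names are hypothetical.

```typescript
// Illustrative only: the real pattern is not reproduced here.
// No global flag, so RegExp.test() carries no state between calls.
const FRUSTRATION_PATTERN = /\b(wtf|wth|ffs|omfg)\b/i;

function isFrustrated(prompt: string): boolean {
  return FRUSTRATION_PATTERN.test(prompt);
}

// Share of prompts in a cohort that match, as a low-cost telemetry signal.
function frustrationRate(prompts: string[]): number {
  if (prompts.length === 0) return 0;
  const hits = prompts.filter(isFrustrated).length;
  return hits / prompts.length;
}
```

Comparing `frustrationRate` before and after a release gives a blunt regression signal without a single model call.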

The frustration detector also surfaces a broader principle about AI product engineering: you layer simple, cheap detection mechanisms underneath expensive AI reasoning. You do not use a sledgehammer where a nail-check will do.

Internal Model Codenames Exposed

The leaked codebase confirmed several internal model codenames that had previously been rumored or partially disclosed through other means.

| Codename | Also known as | Status | Notable detail |
|---|---|---|---|
| Capybara | Mythos | Active (v8 internally) | 1M token context, "fast mode" |
| Fennec | Opus 4.6 | Public | Publicly released model |
| Numbat | n/a | Unreleased | Next-generation, still in testing |
| Tengu | n/a | Internal | Active internal variant |

Capybara / Mythos was on internal version 8 at the time of the leak, featuring a 1 million token context window and a documented "fast mode." The same model had been partially exposed days earlier through the draft blog post leak that triggered the initial news cycle about Anthropic's operational security.

One of the more candid findings: internal engineering notes acknowledged a 29-30% false claims rate in Capybara v8, a regression from the 16.7% rate measured in v4. This is the kind of honest self-assessment that internal teams produce but that almost never becomes public. The fact that it was in the codebase — rather than a separate internal document — suggests it was tied directly to feature flag logic or model routing decisions.

What This Means for Developers and AI Teams

The Claude Code source map leak is not primarily a security story. No credentials leaked, no model weights were exposed, and the packaging error was a routine human mistake that Anthropic fixed quickly. The story is about what was inside, and what that reveals about the near-term trajectory of AI coding tools.

Key takeaways for engineering and operations leaders:

- Always-on AI agents are production-ready internally. KAIROS is a finished system. Autonomous coding agents that monitor your codebase, fix bugs overnight, and push notifications to your phone are not science fiction; they are waiting for a product launch decision. Your team should be thinking now about governance, approval workflows, and rollback procedures for agents that take unilateral action.

- The harness architecture is the product. If you are building AI tooling, the model is a commodity input. The value you create lives in the software layer that structures how the model operates. Invest in harness design, not just prompt engineering.

- Audit your npm publish pipeline today. Add *.map to .npmignore. Set an explicit files allowlist in package.json. Enable automated checks that verify no source maps or debug artifacts are included before any publish. This is a 30-minute fix that prevents a 30-day PR crisis.

- Build eval pipelines before autonomous agents. The internal acknowledgment of a 29-30% false claims regression is a reminder that model behavior changes in ways you cannot predict. Before giving an agent autonomous write access to your codebase, you need systematic evaluation frameworks, not vibes-based assessments.

- Open-source AI coding agents are coming. The 100K-star response to claw-code shows the demand. Community-built, auditable alternatives to proprietary coding agents will mature quickly now that the architectural template is public. Factor this into your vendor evaluation.
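For the eval pipeline takeaway above, the core of a gate is small: measure the false-claim rate on a labeled set and refuse write access when it exceeds a threshold. The sketch below is a minimal illustration. The `EvalCase` shape, the function names, and any threshold you pass in are assumptions, and labeling which claims are true is the hard part it leaves out.

```typescript
// Hypothetical eval gate; names and thresholds are assumptions.
interface EvalCase {
  claim: string;         // something the agent asserted, e.g. "all tests pass"
  verifiedTrue: boolean; // whether an independent check confirmed it
}

function falseClaimRate(cases: EvalCase[]): number {
  if (cases.length === 0) throw new Error("no eval cases provided");
  const falseClaims = cases.filter((c) => !c.verifiedTrue).length;
  return falseClaims / cases.length;
}

// Grant autonomous write access only when the measured rate is within tolerance.
function mayGrantWriteAccess(cases: EvalCase[], maxFalseClaimRate: number): boolean {
  return falseClaimRate(cases) <= maxFalseClaimRate;
}
```

Re-running the same gate on every model or prompt change is what catches a regression like the one described above before it reaches an agent with commit rights.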

For teams ready to move from reactive AI chat tools to persistent, event-driven agents, our AI strategy consulting and corporate AI training programs help engineering and operations teams design that transition with appropriate governance and evaluation frameworks.

For hands-on agent implementation, our AI workshops cover agent architecture, harness design, and eval pipeline setup in an intensive format. If you need help staffing an AI engineering team capable of building production-grade agent infrastructure, our AI staff augmentation practice places experienced engineers quickly.

Sources: The Register, VentureBeat, Layer5 Blog, Cybernews, Alex Kim's Blog, The New Stack, TechCrunch, Fortune, IT Pro, DEV Community, Engineers Codex, Bloomberg, Axios

FAQ

What is the Claude Code source code leak?

On March 31, 2026, Anthropic accidentally published the full TypeScript source code of Claude Code inside the @anthropic-ai/claude-code npm package (version 2.1.88). A 59.8 MB JavaScript source map file was included due to a missing *.map entry in .npmignore. The map file contained 512,000 lines of source code across approximately 1,900 files. Anthropic confirmed it was human error and no customer data was exposed.

Where can I find the Claude Code leaked source code GitHub repository in 2026?

The original leaked source was pulled from npm, but multiple GitHub mirrors appeared within hours. The most notable is instructkr/claw-code, a clean-room Rust rewrite that became the fastest-growing repository in GitHub history, surpassing 100,000 stars. Kuberwastaken/claurst is another Rust port with a detailed technical breakdown. Directly hosting or using the original leaked TypeScript source may expose you to legal risk under Anthropic's copyright. The clean-room rewrites have different legal standing.

What is a source map leak and how do I prevent one?

A JavaScript source map is a debug file that maps minified compiled output back to the original source. When published in an npm package by mistake, it makes the original source readable by anyone. To prevent this: add *.map to your .npmignore file, configure the files field in package.json to explicitly allowlist only production artifacts, and disable source map generation in your bundler for production builds (in Bun: set sourcemap: false in your build config).

What is KAIROS in the Claude Code source?

KAIROS is an unreleased autonomous daemon mode discovered in the leaked codebase, referenced 150+ times and hidden behind feature flags named PROACTIVE and KAIROS. It enables Claude Code to operate as a 24/7 background agent: receiving heartbeat prompts every few seconds, autonomously deciding whether to act on your codebase, fixing bugs, monitoring GitHub webhooks, and running memory consolidation (autoDream) overnight. It has exclusive access to push notifications, file delivery, and PR subscription tools. The feature is fully built but not yet publicly released.

What is the autoDream feature in the Claude Code leak?

autoDream is a nightly memory consolidation process that runs as part of the KAIROS autonomous agent mode. After a day of active agent sessions, it merges disparate observations from the day into a coherent memory structure, removes logical contradictions, and converts vague insights into concrete queryable facts. It operates autonomously without user initiation and represents a form of persistent long-term memory for the agent across sessions.

What was found in undercover.ts in the leaked source?

undercover.ts (approximately 90 lines) implements a mode that strips all Anthropic-identifying information when Claude Code operates in non-internal repositories. When activated, the model never mentions internal codenames, Slack channels, or even the phrase "Claude Code" itself. This means AI-authored contributions to open-source projects from Anthropic employees carry no AI attribution. The community debate centered on whether this constitutes reasonable stealth product testing or raises transparency concerns.

What is the ANTI_DISTILLATION_CC flag in Claude Code?

Found in claude.ts, this flag instructs Anthropic's servers to inject decoy ("fake") tool definitions into Claude Code's system prompt when enabled. The purpose is competitive defense: if a third party is intercepting Claude Code's API traffic to train a rival model on its tool use patterns, the fake tools pollute that training dataset and reduce its value. This kind of anti-distillation mechanism is unusual but not unprecedented in competitive AI product engineering.

What are the internal Claude model codenames revealed in the leak?

The source referenced: Capybara (also called Mythos, a Claude 4.6 variant on internal version 8 with 1M context and a fast mode), Fennec (Opus 4.6, publicly released), Numbat (an unreleased next-generation model still in testing), and Tengu (an internal active variant). The Capybara/Mythos codename had also been partially disclosed days earlier through a separate leaked draft blog post.

What happened with Anthropic's DMCA takedown of GitHub repositories?

Anthropic issued a DMCA notice targeting repositories containing the leaked source. Due to the notice referencing a fork network connected to Anthropic's own public Claude Code repository, it was executed against approximately 8,100 repositories, including many legitimate public forks with no leaked content. Anthropic's Boris Cherny confirmed this was accidental and retracted the bulk of the takedowns, limiting the final scope to 1 repository and 96 directly connected forks.

How can my team prepare for always-on AI coding agents?

Start with governance design before tooling decisions. Define which actions an autonomous agent is allowed to take without approval (read-only analysis vs. file modifications vs. commits vs. pushes). Build evaluation pipelines that measure agent accuracy on your specific codebase before granting autonomous write access. Start with supervised autonomy (agent proposes, human approves) and expand the autonomy envelope incrementally as trust is established. Our AI strategy consulting practice helps teams design this adoption roadmap based on their current technical maturity and risk tolerance.
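The action tiers in that answer map naturally onto a small policy function. The sketch below is one possible encoding: the tier names, the `AutonomyPolicy` shape, and the `evaluate` function are hypothetical, and a real policy would likely also consider paths, branches, and repository ownership.

```typescript
// Hypothetical governance policy; action names mirror the tiers listed above.
type AgentAction = "read" | "modify_file" | "commit" | "push";
type Verdict = "allow" | "needs_approval" | "deny";

// Ordered from least to most risky.
const RISK_ORDER: AgentAction[] = ["read", "modify_file", "commit", "push"];

interface AutonomyPolicy {
  autoAllowUpTo: AgentAction; // riskiest action the agent may take unprompted
  approvalUpTo: AgentAction;  // riskiest action a human is allowed to approve
}

function evaluate(action: AgentAction, policy: AutonomyPolicy): Verdict {
  const risk = RISK_ORDER.indexOf(action);
  if (risk <= RISK_ORDER.indexOf(policy.autoAllowUpTo)) return "allow";
  if (risk <= RISK_ORDER.indexOf(policy.approvalUpTo)) return "needs_approval";
  return "deny";
}

// Supervised autonomy: the agent reads freely, proposes changes, never pushes.
const supervised: AutonomyPolicy = { autoAllowUpTo: "read", approvalUpTo: "commit" };
```

Expanding the autonomy envelope then becomes a one-field change to the policy, which is easy to review and easy to roll back.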

Walid founded AY Automate to help businesses ship AI workflows that actually move revenue. He leads strategy and oversees every client engagement end-to-end.
