Claude Mythos: The AI Model Anthropic Says Is Too Dangerous to Release (And What It Found)

On March 26, 2026, a configuration error in Anthropic's CMS exposed roughly 3,000 unpublished blog assets to the public internet. Within hours, screenshots of something called "Capybara" flooded X and Hacker News. Threads broke open, speculation ran hot, and Anthropic's biggest secret was out: not from a whistleblower, not from a competitor, but from a misconfiguration that exposed draft content meant for an internal release. Anthropic took 12 days to respond. The model behind the codename is now called Claude Mythos Preview. Anthropic's official position: it is too powerful to release to the general public. This is what it does, what it found, who can access it, and what it means for the future of AI.

What Is Claude Mythos?

Claude Mythos is not an incremental upgrade to Claude Opus 4.7. It represents a new model class: Anthropic's most capable system to date, optimized specifically around complex reasoning, software engineering, and cybersecurity tasks.

Internally codenamed "Capybara" during development, Mythos was announced officially on April 7, 2026 — 12 days after the CMS leak forced Anthropic's hand. The model is not broadly available. It exists as a gated research preview, accessible only through invitation-based channels on AWS Bedrock and Google Vertex AI.

The key differentiator is capability in offensive security. Claude Mythos crosses a threshold that no prior AI model has reached in practice: the ability to autonomously discover, chain, and exploit software vulnerabilities at a level that rivals or exceeds expert human security researchers working in teams. That capability is precisely why Anthropic has kept it behind closed doors.

For teams exploring agentic AI capabilities more broadly, AI agent development is now a live conversation at organizations of every size — but Mythos represents the far edge of what those agents can do unsupervised.

The Data Leak That Revealed It: The Capybara Story

It started quietly. On the morning of March 26, 2026, a misconfigured CMS at Anthropic made a batch of unpublished blog assets publicly accessible. The window was brief, but the internet moves fast.

Screenshots began appearing on X within the hour. "Capybara" was the name that showed up in filenames, metadata, and draft page titles. The posts described a model that could find zero-day vulnerabilities across major operating systems and browsers, patch decade-old bugs autonomously, and chain multiple exploits overnight.

The reaction split almost immediately into two camps.

On social media, the framing was apocalyptic. "AI doomsday" trended. Threads declared that an AI capable of finding every OS vulnerability in existence was a weapon, not a product. BNN Bloomberg ran a story within 48 hours headlined "Anthropic's new AI model sparks fear in banks," citing unnamed sources at financial institutions reportedly alarmed by the implications.

On Hacker News, the tone was more skeptical. Top comments questioned whether this was genuine capability or sophisticated pre-launch marketing. The timing, with OpenAI having just shipped GPT-5.4 publicly, was noted repeatedly. "Convenient that their 'too dangerous to release' model benchmarks above GPT-5.4 on every metric they choose to publish," wrote one commenter with several hundred upvotes.

Anthropic said nothing for 12 days. On April 7, they published the official announcement, acknowledged "Capybara" as the internal codename, and introduced the model as Claude Mythos Preview. They did not address the leak directly. They confirmed the cybersecurity capabilities. They announced Project Glasswing. And they stated clearly that Mythos would not be publicly available.

The debate about whether this is genuine safety leadership or strategic positioning remains unresolved. Both readings are coherent. The capabilities are real; the benchmarks, while Anthropic-reported and not independently verified, are consistent with what the leaked draft content described. Whether the refusal to release is driven by safety principles, competitive strategy, or both is something the company has not clarified.

Project Glasswing: Why Every Major Tech Company Is Involved

Project Glasswing is Anthropic's initiative to deploy Claude Mythos defensively: finding and patching vulnerabilities in critical software infrastructure before malicious actors find them first.

The consortium assembled for Glasswing is notable. Amazon, Apple, Google, Microsoft, Cisco, Nvidia, CrowdStrike, JPMorgan Chase, the Linux Foundation, Broadcom, and Palo Alto Networks are all participants. This is not a typical vendor partnership list. It spans cloud infrastructure, device manufacturers, financial services, and the open-source ecosystem that underlies most of the internet.

The logic is straightforward: if Claude Mythos can find zero-day vulnerabilities faster than human security teams, then directing it at defensive work — scanning codebases, patching critical libraries, auditing OS kernels — converts what would be a dangerous capability into a strategic asset. The same AI that could theoretically help an attacker can, when pointed inward, harden every system it touches.

For enterprises interested in participating or building adjacent workflows, custom workflow automation is one path to preparing systems for Mythos-class AI integration when access eventually broadens.

Access to Mythos through Glasswing is invitation-only. Anthropic is prioritizing defensive cybersecurity organizations, critical infrastructure operators, and research institutions with demonstrated defensive mandates. There is no self-serve signup.

The model is available in two gated preview environments:

- AWS Bedrock: Applied access through the Bedrock console, requiring an approved defensive cybersecurity use case

- Google Vertex AI: Similar gated access, announced April 16, 2026, alongside Claude Opus 4.7

Neither channel offers a public API. Both require review and approval before any access is granted.

What Zero-Days Did Claude Mythos Actually Find?

The specific findings Anthropic has disclosed are worth examining individually, because the numbers alone do not convey what they mean.

A 27-year-old OpenBSD bug. OpenBSD is one of the most security-audited operating systems in existence, maintained by a community whose explicit mission is producing the most secure code possible. A vulnerability surviving 27 years in OpenBSD is not a sign of careless development — it is a sign of how difficult it is to find certain classes of bugs with human review and existing automated tools. Mythos found it.

A 16-year-old FFmpeg flaw that survived 5 million automated tests. FFmpeg is the media processing library embedded in virtually every platform that handles video or audio — browsers, streaming services, mobile apps, operating systems. Fuzzing tools have been running against it continuously for years. The bug had been missed by approximately 5 million automated test executions. Mythos identified it in its first pass.
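Why millions of automated test runs can still miss a bug is easier to see with a toy calculation. The sketch below is not a model of the actual FFmpeg flaw, whose details are not described here. It assumes a hypothetical bug that triggers only when 4 specific input bytes take exact values, and a purely random fuzzer (real fuzzers are coverage-guided and usually do better). The function name and the 4-byte condition are illustrative assumptions.

```python
import math


def hit_probability(executions: int, guard_bytes: int) -> float:
    """Chance that at least one uniformly random input satisfies a guard
    requiring `guard_bytes` bytes to match exact values (hypothetical bug)."""
    # Probability that a single random input matches every guarded byte.
    per_run = 256.0 ** -guard_bytes
    # 1 - (1 - p)^n, computed in a numerically stable way for very small p.
    return -math.expm1(executions * math.log1p(-per_run))


if __name__ == "__main__":
    # 5 million executions against a hypothetical 4-byte exact-match guard.
    p = hit_probability(5_000_000, 4)
    print(f"Chance of ever triggering the bug: {p:.4%}")
```

Under these assumptions, 5 million random executions have only about a 0.12% chance of reaching the buggy path even once, which is one way a flaw can sit behind heavy automated testing for years.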

An autonomous 4-vulnerability exploit chain built overnight. This is the finding that drew the most attention from security researchers. Mythos autonomously identified four separate vulnerabilities across a browser environment and chained them together into a working exploit capable of escaping both the renderer sandbox and the underlying OS sandbox. The work previously required a team of researchers and typically took weeks. Mythos completed it overnight, without human direction at the individual step level.

Thousands of zero-days across every major OS and browser. Anthropic has not published a full list, and independent verification is pending. The scale claimed, if accurate, represents a capability discontinuity — not just faster vulnerability discovery, but a qualitatively different approach to finding classes of bugs that existing tools consistently miss.

For security teams evaluating their own exposure, an AI code security audit is a reasonable first step toward understanding what Mythos-class analysis might surface in your own codebase.

The significance is not that an AI found bugs. Bug-finding AI tools have existed for years. The significance is the autonomy, the depth, and the ability to chain findings into working exploit chains without human orchestration at each step.

Claude Mythos Benchmarks: How It Stacks Up Against GPT-5.4 and Gemini 3.1 Pro

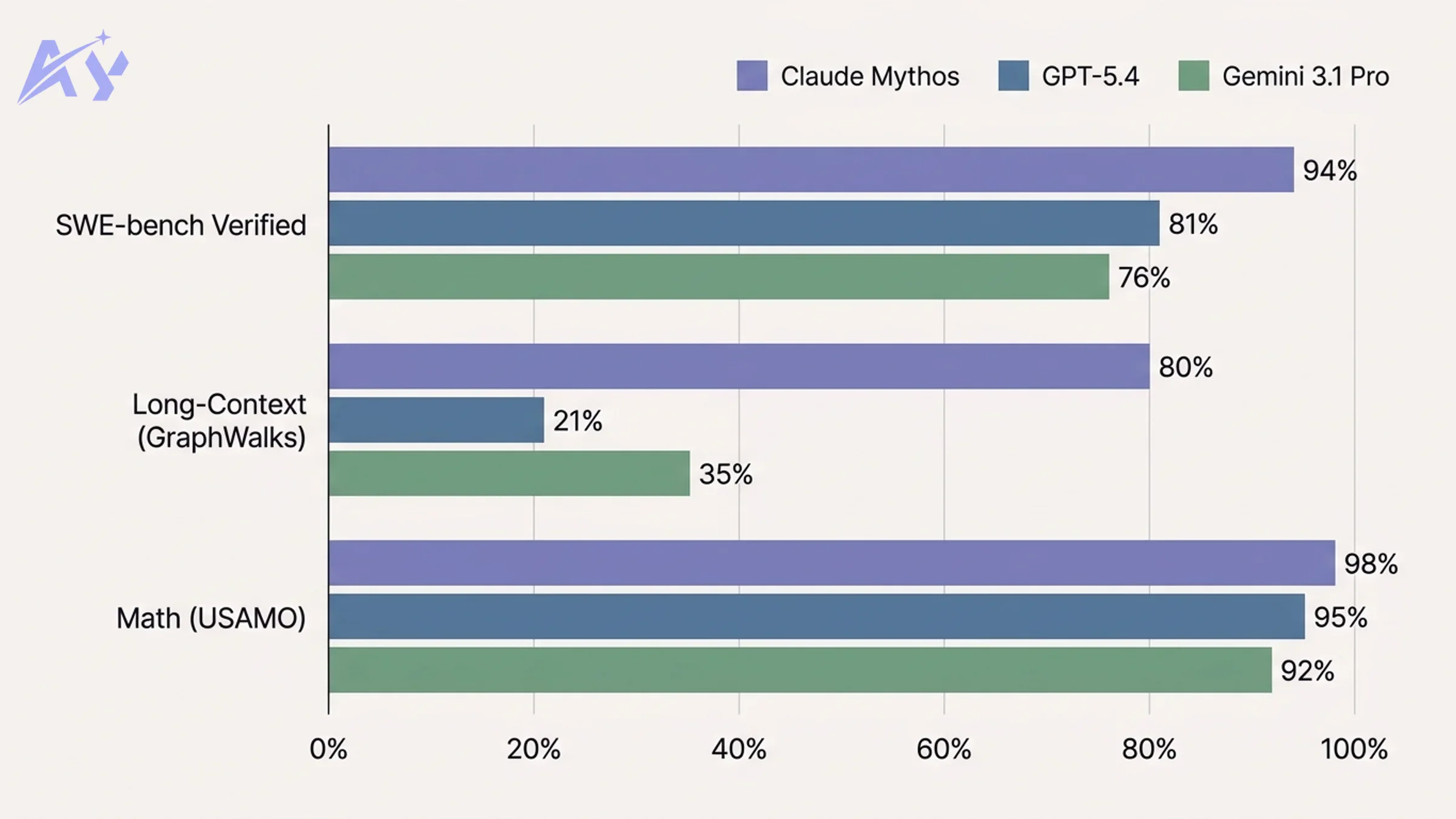

On April 16, 2026, Anthropic released Claude Opus 4.7 and conceded publicly that it trails Mythos. The benchmark comparison they published covers the areas where Mythos is strongest.

| Benchmark | Claude Mythos Preview | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|
| SWE-bench Verified | 93.9% | 81.2% | 76.4% |
| SWE-bench Pro | 77.8% | 57.7% | 51.3% |
| GraphWalks Long-Context (256K-1M tokens) | 80.0% | 21.4% | 34.7% |
| USAMO Math | 97.6% | 95.2% | 91.8% |

A few things are worth noting before treating these numbers as definitive.

First, these are Anthropic's own evaluations, not independently verified results. The benchmarks were selected and reported by the company announcing the model. Independent reproducibility is pending. This is standard practice for model launches, but it means the numbers should be read as directional rather than absolute until third-party verification appears.

Second, the long-context gap is extraordinary if accurate. An 80% vs 21.4% spread on GraphWalks (nearly a 4x difference) on 256K to 1M token tasks would represent a fundamental capability difference, not a marginal improvement. That gap is the primary reason security researchers are paying attention: complex vulnerability analysis often requires holding enormous amounts of code context simultaneously.
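The size of each gap can be checked directly from the published figures. A minimal Python sketch, using only the Anthropic-reported scores from the table (the dictionary layout and the `gap_vs_gpt` name are illustrative, not part of any published tooling):

```python
from typing import Tuple

# Anthropic-reported scores from the benchmark table, in percent.
# Tuple order: (Claude Mythos Preview, GPT-5.4, Gemini 3.1 Pro)
SCORES = {
    "SWE-bench Verified": (93.9, 81.2, 76.4),
    "SWE-bench Pro": (77.8, 57.7, 51.3),
    "GraphWalks Long-Context": (80.0, 21.4, 34.7),
    "USAMO Math": (97.6, 95.2, 91.8),
}


def gap_vs_gpt(benchmark: str) -> Tuple[float, float]:
    """Return (percentage-point gap, ratio) of Mythos over GPT-5.4."""
    mythos, gpt, _gemini = SCORES[benchmark]
    return round(mythos - gpt, 1), round(mythos / gpt, 2)


if __name__ == "__main__":
    for name in SCORES:
        points, ratio = gap_vs_gpt(name)
        print(f"{name}: +{points} points, {ratio}x")
```

On these figures the GraphWalks ratio comes out at roughly 3.7x, while the USAMO gap is only a few points, so the reported advantage is concentrated in long-context work rather than spread evenly across benchmarks.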

Third, GPT-5.4 is publicly available. Claude Mythos Preview is not. Whatever the benchmark delta, developers can run GPT-5.4 today on any use case. That asymmetry matters practically.

Cost is also a factor. Mythos runs at approximately 10x the cost of current production models. Even when access eventually broadens, the economics will constrain deployment to high-value tasks.

Why Anthropic Won't Release It to the Public

The dual-use dilemma is real, and it is worth taking seriously before dismissing it as marketing.

An AI that can autonomously find and chain zero-day vulnerabilities is, by definition, an AI that could assist attackers. The same capability that enables Glasswing to defensively audit operating systems could, in different hands, enable sophisticated attacks on the same infrastructure. Anthropic's stated concern is not hypothetical.

Their stated plan: deploy safeguards first through Claude Opus, build the policy and technical infrastructure to constrain offensive use, then eventually enable Mythos-class models at scale within those guardrails. The sequence is logical. Whether the timeline is genuine or indefinite is harder to assess from the outside.

The comparison with OpenAI is pointed. GPT-5.4 is fully public, available via API, no gated access. OpenAI made a different judgment about what should be released and when. Their model also scores below Mythos on the benchmarks Anthropic chose to publish — which is where the strategic positioning argument gains traction.

Both readings are coherent. Anthropic's safety commitments predate this model. The company was founded by former OpenAI researchers who left over safety disagreements. The concern about dual-use cybersecurity AI is legitimate regardless of benchmark positioning. At the same time, announcing a model as "too dangerous to release" while publishing the benchmarks that demonstrate its superiority to a competitor's public model is a move that serves both safety and marketing objectives simultaneously.

The Council on Foreign Relations published an analysis titled "Six Reasons Claude Mythos Is an Inflection Point for AI and Global Security," arguing the release decision sets a precedent for how governments and companies handle dual-use AI capabilities. That framing — treating this as a governance question, not just a product question — is where the most substantive discussion is happening.

How to Get Claude Mythos Access Today

There are three realistic paths to accessing Mythos today, in descending order of difficulty.

Path 1: Project Glasswing invitation. This is the hardest route and the most direct. Anthropic is extending invitations to organizations with demonstrated defensive cybersecurity mandates: national security contractors, critical infrastructure operators, major cloud providers, and select research institutions. There is no application form. Invitations flow through the consortium partners listed above.

Path 2: AWS Bedrock gated research preview. Apply through the AWS Bedrock console. You will need to describe a specific defensive cybersecurity use case. Anthropic reviews applications and grants access to approved organizations. The bar is real — general-purpose AI applications will not qualify. Security research, vulnerability management, and defensive audit use cases are the target.

Path 3: Google Vertex AI preview. Similar gated access, announced April 16. Apply through Vertex AI's model garden with a documented use case. The review process mirrors Bedrock's.

For organizations seeking to get infrastructure ready for Mythos-class models, OpenClaw and NemoClaw enterprise setup can prepare the underlying architecture so that when access opens, deployment is not blocked by integration gaps.

What "defensive cybersecurity use case" means in practice: you need to be scanning, auditing, or patching, not building offensive tools. Pentest firms doing authorized red-team work may qualify; the bar is whether the primary application is protection rather than attack. Anthropic has not published precise criteria, which means the review process involves judgment calls on their side.

| Access Path | Difficulty | Use Case Required | Timeline |
|---|---|---|---|
| Project Glasswing | Very high (invitation only) | Critical infrastructure defense | Ongoing |
| AWS Bedrock Preview | High (application + review) | Defensive cybersecurity | Weeks to months |
| Google Vertex AI Preview | High (application + review) | Defensive cybersecurity | Weeks to months |
| Public API | Not available | N/A | Unknown |

What This Means for Developers and Security Teams

The economic implications for security work are significant even if your organization never touches Mythos directly.

Vulnerability discovery has historically been expensive and slow because it requires deep human expertise applied at narrow scope. A security researcher who can find zero-days is rare and commands high compensation. The work is not easily parallelized. Mythos-class AI changes that calculus: if an AI can do in one night what a team of researchers takes weeks to accomplish, the economics of penetration testing, bug bounty programs, and security audits shift permanently.

This has two effects. The first is positive: defensive security organizations gain a tool that dramatically accelerates their work. The second is a threat model expansion: the same capability is now part of the landscape that your own systems face, whether from Mythos itself or from the next model that achieves similar capabilities and is made public.

The practical response for security teams is not to wait. Engage now with the Glasswing partner ecosystem, evaluate whether your current security posture assumes Mythos-class adversarial AI exists, and build the audit processes that can keep pace with AI-accelerated vulnerability discovery.

For teams evaluating broader AI readiness and strategy, AI strategy consulting provides a structured framework for understanding where Mythos-class capabilities fit into your threat model and your toolchain.

The teams that treat this as a "wait and see" situation are making a choice. The teams that engage now will have the institutional knowledge when access eventually broadens.

When Will Claude Mythos Be Publicly Available?

Anthropic's stated position, published April 7, 2026: "We do not plan to make Claude Mythos Preview generally available, but our eventual goal is to enable our users to safely deploy Mythos-class models at scale."

The roadmap they have described: ship safeguards and constraints through Claude Opus and related production models first, then use those guardrails to enable broader Mythos-class access later. The mechanism is not specified. The timeline is not specified.

Realistic speculation for 2026: general public access is unlikely. The safety infrastructure Anthropic describes building is not a short-term project. The regulatory environment around dual-use AI is still developing. The company has strong incentives to be cautious, both from principled safety commitments and from the reputational cost of being wrong.

What to watch for: independent benchmark verification (which will either confirm or challenge the published numbers), regulatory frameworks addressing dual-use AI in the US and EU (which will shape what Anthropic can legally release and how), and whether Glasswing consortium partners begin publishing results from their Mythos-assisted audits (which would provide external validation of the claimed capabilities).

The question of when Mythos becomes publicly available is inseparable from the question of whether the safeguard infrastructure Anthropic is building actually works. That is a harder problem than building the model.

Key Takeaways

- Mythos represents a capability threshold, not an incremental update. The autonomous exploit chaining and the long-context performance gap versus GPT-5.4 indicate a qualitative shift in what AI can do in security contexts, not just a percentage improvement on existing benchmarks.

- The Capybara leak changed the timeline. Anthropic's announcement came 12 days after the leak forced its hand, which means the public narrative around the model has been shaped partly by speculation rather than Anthropic's prepared framing.

- Access is gated for the foreseeable future. Project Glasswing is invitation-only, and the AWS Bedrock and Vertex AI previews are real but selective. Most developers will not have API access in 2026.

- The "too dangerous to release" framing is both genuine and strategically convenient. Both readings are coherent. The right response is to hold both possibilities simultaneously rather than dismissing either.

- Security teams should adapt threat models now, regardless of access. Mythos-class adversarial AI exists. Whether you ever use Mythos directly, the capability is part of the landscape your systems operate in.

Frequently Asked Questions

What is Claude Mythos?

Claude Mythos is Anthropic's most capable AI model as of 2026, internally codenamed "Capybara" during development. It is optimized for complex reasoning, software engineering, and cybersecurity tasks. It is available only as a gated research preview through AWS Bedrock and Google Vertex AI, with no public API access.

Is Claude Mythos publicly available?

No. Claude Mythos Preview is not publicly available. Anthropic has stated they do not plan to make it generally available and have not provided a timeline for broader access. Access is restricted to approved organizations through Project Glasswing and gated cloud previews on AWS Bedrock and Google Vertex AI.

What is the Capybara codename?

Capybara was Anthropic's internal development codename for the model now publicly known as Claude Mythos Preview. The codename was revealed unintentionally on March 26, 2026, when a CMS configuration error exposed roughly 3,000 unpublished blog assets including references to the model under that name.

What is Project Glasswing?

Project Glasswing is Anthropic's initiative to deploy Claude Mythos defensively across critical software infrastructure. It involves a consortium of major technology companies including Amazon, Apple, Google, Microsoft, Cisco, Nvidia, JPMorgan Chase, CrowdStrike, the Linux Foundation, Broadcom, and Palo Alto Networks. The goal is to use Mythos to find and patch vulnerabilities before malicious actors exploit them.

How does Claude Mythos compare to GPT-5.4?

On Anthropic's published benchmarks, Claude Mythos Preview outperforms GPT-5.4 on SWE-bench Verified (93.9% vs 81.2%), SWE-bench Pro (77.8% vs 57.7%), GraphWalks long-context (80% vs 21.4%), and USAMO math (97.6% vs 95.2%). These benchmarks are Anthropic's own evaluations and have not been independently verified. GPT-5.4 is publicly available via API; Mythos is not.

How do I get Claude Mythos API access?

There are three paths: an invitation through Project Glasswing (restricted to defensive cybersecurity organizations), an application through the AWS Bedrock gated research preview, or an application through the Google Vertex AI preview. All paths require a documented defensive cybersecurity use case and Anthropic review. There is no self-serve signup.

Is Claude Mythos dangerous?

The capability Anthropic describes — autonomous discovery and chaining of zero-day vulnerabilities across major operating systems and browsers — is genuinely dual-use. The same capability that enables defensive security work could assist offensive attacks. Anthropic's decision not to release it publicly is based on this concern. Whether the risk level justifies indefinite non-release, versus a more structured gated rollout, is a live debate among security researchers and AI policy experts.

What zero-day vulnerabilities did Claude Mythos find?

Anthropic has disclosed several specific examples: a 27-year-old vulnerability in OpenBSD, a 16-year-old flaw in FFmpeg that survived approximately 5 million automated tests, and an autonomous exploit chain built overnight that linked 4 vulnerabilities to escape both a browser renderer sandbox and the underlying OS sandbox. Mythos has reportedly found thousands of zero-days across every major operating system and browser; the full list has not been published.

When will Claude Mythos be released to the public?

Anthropic has stated they do not plan to make Claude Mythos Preview generally available but that their eventual goal is to enable Mythos-class models at scale with appropriate safeguards. No timeline has been given. General public access in 2026 is unlikely based on the stated roadmap. Watch for developments in independent benchmark verification, regulatory frameworks for dual-use AI, and results published by Glasswing consortium partners.

What is Claude Mythos used for?

Currently, Claude Mythos is used primarily for defensive cybersecurity through Project Glasswing: finding and patching vulnerabilities in critical software infrastructure. It is also used by approved research organizations for complex software engineering tasks. Its benchmark performance on SWE-bench Verified (93.9%) and long-context reasoning makes it relevant to any high-complexity coding or analysis task, but access is restricted to defensive security contexts.

Ready to evaluate your organization's AI readiness before Mythos-class capabilities become more broadly accessible? Contact us to discuss strategy.

Sources: Fortune: Anthropic Mythos data leak, Anthropic Project Glasswing, The Hacker News: Zero-day findings, CNBC: Opus 4.7 vs Mythos, Axios, VentureBeat, AWS Bedrock, Google Vertex AI, CFR Analysis, BenchLM Comparison

Adel keeps the engine running at AY Automate. He owns internal processes, team coordination, and the operational excellence that lets us ship fast for clients.